The widespread fascination with artificial intelligence has reached a critical juncture where the initial luster of experimental prototypes is being replaced by a demand for tangible economic results. While the global market has seen astronomical sums of capital poured into generative models and automated systems, the actual implementation within the corporate hierarchy remains surprisingly fragmented. Statistics from the current fiscal landscape indicate that nearly ninety-five percent of organizations struggle to demonstrate a definitive return on investment from their specialized AI initiatives. This discrepancy does not stem from a lack of technical capability but rather from a fundamental misalignment between the novelty of the technology and the rigid requirements of industrial-scale operations. As the era of unbridled experimentation transitions into a period of strict accountability, the focus has shifted toward bridge-building—specifically, connecting the raw potential of machine learning with the practical mechanics of everyday business.

Moving Beyond the Pilot Paradox: The Reality of Modern AI Integration

The current corporate landscape is defined by a massive disconnect between heavy capital investment and measurable returns on Generative AI. For several years, boards of directors have authorized substantial budgets under the assumption that AI would naturally translate into immediate efficiency gains. However, many of these projects have remained trapped in a perpetual state of “pilot purgatory,” where they demonstrate promise in controlled environments but fail to withstand the pressures of live production. The challenge lies in the fact that while a prototype might perform a single task with impressive speed, scaling that task across a global enterprise requires a level of reliability and integration that many early-stage models simply cannot provide.

Transitioning from experimental “cool” projects to robust, value-driven infrastructure is the defining challenge for today’s Chief Information Officers. These leaders are no longer tasked with merely proving that AI works; they must prove that AI is worth the cost of its own existence. This shift requires a departure from the “move fast and break things” mentality toward a more disciplined, engineering-centric approach. Modern integration requires a deep understanding of legacy systems, a commitment to rigorous security protocols, and an ability to forecast the long-term maintenance costs of complex algorithmic stacks. It is a transition from treating AI as a shiny peripheral tool to embedding it as a central, load-bearing pillar of the corporate architecture.

This exploration details how industry leaders are bridging the 95% ROI gap by focusing on operational discipline rather than technological hype. By examining the methodologies of successful practitioners in sectors such as logistics, finance, and healthcare, a clear pattern emerges. These organizations do not treat AI as a standalone miracle; instead, they treat it as an extension of their existing digital transformation efforts. They prioritize the boring but essential work of data cleaning, workflow mapping, and user training. By moving away from the pursuit of the “next big thing” and focusing on the sustainable deployment of “the right thing,” these enterprises are finally starting to see the needle move on productivity and profitability.

Architecting the Foundation for Industrial-Grade AI Deployment

Prioritizing High-Friction Pain Points Over Technological Novelty

Scaling begins by identifying “workable” use cases that address tangible business bottlenecks rather than showcasing complex algorithms. There is often a temptation to deploy AI for high-visibility projects that garner headlines but offer little in the way of day-to-day operational relief. In contrast, the most successful implementations are those that target the “unsexy” problems—the manual data entry, the fragmented communication loops, and the repetitive research tasks that frustrate employees and slow down service delivery. By focusing on these high-friction areas, an enterprise can secure early wins that build the internal momentum necessary for more ambitious projects.

Insights from the logistics and finance sectors demonstrate that AI provides the most value when it consolidates fragmented workflows or automates labor-intensive regulatory research. For example, in the banking sector, the process of performing due diligence for Anti-Money Laundering (AML) compliance historically required hours of manual searching through disparate databases. When AI is applied specifically to this research bottleneck, it can condense an entire day of investigation into a few minutes of automated synthesis. This is not just a technological upgrade; it is a fundamental shift in how human capital is utilized, allowing high-level analysts to focus on decision-making rather than data gathering.

Organizations must resist the urge to deploy AI for minor tasks, focusing instead on deep-seated operational complexities that offer significant time-saving potential. A common mistake is the deployment of chatbots for simple internal queries, such as checking vacation balances, which provides minimal value to the bottom line. True scaling occurs when AI is integrated into the core “engine” of the business. Whether it is optimizing the routing of thousands of transport vehicles or managing the triage of patient calls in a busy health center, the focus must remain on areas where the complexity is too great for manual oversight but perfectly suited for machine-assisted processing.

Adopting an Incremental “Layer Cake” Strategy for Sustainable Growth

Successful scaling is rarely a “big bang” event; it requires a tiered approach that prioritizes data preparation, security, and governance before full-scale rollout. This “layer cake” strategy ensures that each new capability is built upon a stable and secure foundation. The bottom layer consists of high-quality, accessible data that has been cleaned and organized. Without this, any AI model—no matter how sophisticated—will eventually produce unreliable or biased results. Only after the data and security layers are solidified can the organization begin to add the interactive and generative features that the end-user sees.

Real-world evidence from healthcare call centers suggests that time-bound pilots—starting with after-hours operations—mitigate risk and allow for real-time accuracy tuning. By launching an AI-driven voice operator during times when human staff are unavailable, the organization can collect valuable data on system performance without risking a total service failure during peak hours. This incremental expansion allows for the identification of language nuances, technical glitches, and user frustrations in a controlled environment. As the system proves its reliability, it can be gradually introduced into busier shifts, eventually becoming a 24/7 component of the patient experience.
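
The after-hours pilot described above can be sketched as a simple routing rule. This is a minimal illustration, not a description of any real call-center system: the 18:00 to 08:00 window, the phase names, and the `route_call` function are all assumptions introduced for the example.

```python
from datetime import time

# Hypothetical pilot window: the AI operator covers 18:00 to 08:00.
AFTER_HOURS_START = time(18, 0)
AFTER_HOURS_END = time(8, 0)


def route_call(call_time: time, pilot_phase: str = "after_hours") -> str:
    """Return "ai" or "human" for a call arriving at call_time."""
    if pilot_phase == "always_on":
        # Final phase: the AI operator is available around the clock.
        return "ai"
    # The window wraps past midnight, so it is the union of two ranges.
    in_window = call_time >= AFTER_HOURS_START or call_time < AFTER_HOURS_END
    return "ai" if in_window else "human"
```

Widening the pilot then becomes a matter of adjusting the window or switching the phase, rather than redeploying the system.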

Rapid, wide-scale implementations often collapse under the weight of unforeseen technical debt, making a two-year horizon a more realistic timeframe for enterprise-wide adoption. The pressure to “keep up with the competition” frequently leads to rushed deployments that overlook the long-term costs of maintenance and model drift. A patient, phased approach allows an organization to build internal expertise and adjust its strategy based on actual usage patterns. By viewing AI adoption as a multi-year journey rather than a single quarterly objective, leaders can ensure that the technology matures alongside the organizational culture, leading to a much more resilient and effective final product.

Engineering Resilience Through Rigorous Performance Metrics and Feedback Loops

To sustain executive support, AI initiatives must move beyond qualitative “wow factors” to quantitative dashboards tracking safety, utilization, and accuracy. The initial excitement of seeing a machine generate text or images quickly fades if the technology does not contribute to the organization’s Key Performance Indicators (KPIs). Enterprises that succeed in scaling AI are those that establish clear, data-driven benchmarks from the outset. This involves monitoring not just whether the AI is functioning, but how often it is being used, how many errors it is making, and how much time it is truly saving the human workforce.
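
As a rough illustration of what such a dashboard might compute, the sketch below derives utilization, accuracy, and time saved from a log of task records. The `Interaction` fields and the `summarize` function are hypothetical; a real deployment would define these metrics against its own KPIs and logging format.

```python
from dataclasses import dataclass


@dataclass
class Interaction:
    used_ai: bool         # did the employee use the AI tool for this task?
    ai_correct: bool      # was the AI output accepted without correction?
    minutes_saved: float  # estimated time saved versus the manual process


def summarize(interactions: list[Interaction]) -> dict[str, float]:
    """Aggregate utilization, accuracy, and total time saved."""
    total = len(interactions)
    ai_runs = [i for i in interactions if i.used_ai]
    if total == 0 or not ai_runs:
        return {"utilization": 0.0, "accuracy": 0.0, "minutes_saved": 0.0}
    return {
        # Share of all tasks where the AI tool was actually used.
        "utilization": len(ai_runs) / total,
        # Share of AI-assisted tasks whose output needed no correction.
        "accuracy": sum(i.ai_correct for i in ai_runs) / len(ai_runs),
        "minutes_saved": sum(i.minutes_saved for i in ai_runs),
    }
```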

Emerging trends show that industry-specific KPIs, such as “abandonment rates” in healthcare or “safety infractions” in logistics, provide the evidence needed to justify further investment. In the world of student transportation, for instance, AI-enabled cameras can track specific behaviors like seatbelt usage or distracted driving. By correlating these AI-derived data points with a reduction in actual road incidents, the organization can build a strong, quantitative case for the technology’s value. Similarly, in a healthcare setting, if an AI triage system reduces the number of patients who hang up out of frustration, the resulting improvement in patient retention and care quality becomes a powerful argument for continued expansion.

Challenging the assumption that AI is a “set and forget” technology, leaders emphasize the need for constant system tuning based on granular end-user interaction data. The landscape of AI is inherently dynamic; models can degrade over time, and user needs can shift. Maintaining a successful deployment requires a dedicated feedback loop where employees can report inaccuracies and the technical team can refine the underlying algorithms. This iterative process of monitoring and adjustment ensures that the AI remains relevant and accurate. It also fosters a sense of transparency and accountability, as the system is seen to be evolving in response to real-world challenges rather than operating in a black box.
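
One way to make this feedback loop concrete is a rolling accuracy monitor fed by employee reports. The sketch below is an assumption-laden illustration: the `AccuracyMonitor` class, the window size, and the 90% threshold are invented for the example and would need to be set per deployment.

```python
from collections import deque


class AccuracyMonitor:
    """Track accuracy over the most recent user-reviewed outputs."""

    def __init__(self, window: int = 100, threshold: float = 0.9):
        # deque with maxlen silently drops the oldest result when full.
        self.results = deque(maxlen=window)
        self.threshold = threshold

    def record(self, was_correct: bool) -> None:
        self.results.append(was_correct)

    def accuracy(self) -> float:
        return sum(self.results) / len(self.results) if self.results else 1.0

    def needs_tuning(self) -> bool:
        # Only flag once the window is full, to avoid noise from small samples.
        full = len(self.results) == self.results.maxlen
        return full and self.accuracy() < self.threshold
```

Because only the most recent results are kept, a model that degrades gradually will eventually trip the flag even if its lifetime average still looks healthy.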

Cultivating Strategic Vendor Synergy and the “Fail Fast” Mindset

Scaling effectively necessitates external partnerships that offer more than just software; vendors must act as transparent collaborators aligned with the organization’s long-term roadmap. In the current market, there is an abundance of vendors offering generic AI solutions that promise to solve every problem. However, true enterprise scaling requires partners who understand the specific regulatory, cultural, and technical constraints of a particular industry. A successful vendor relationship is built on shared goals and a willingness to engage in rigorous testing and validation before long-term contracts are signed.

Comparative analysis reveals that the most resilient enterprises are those willing to abandon underperforming projects early to avoid the trap of the sunk cost fallacy. Not every AI initiative will be a success, and the ability to recognize failure early is a critical component of a healthy innovation strategy. By setting clear “exit criteria” at the beginning of a pilot, organizations can prevent themselves from throwing good money after bad. This “fail fast” mentality ensures that resources are always directed toward the projects with the highest potential for impact, rather than being tied up in legacy experiments that have failed to deliver on their initial promises.
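
Exit criteria are easiest to enforce when they are written down as explicit thresholds before the pilot starts. The following is a minimal sketch under that assumption; the metric names, thresholds, and the `evaluate_pilot` helper are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class ExitCriterion:
    metric: str     # name of the KPI agreed on before the pilot
    minimum: float  # the pilot fails this criterion below this value


def evaluate_pilot(results: dict[str, float],
                   criteria: list[ExitCriterion]) -> list[str]:
    """Return the metrics that missed their minimum.

    A metric that was never measured counts as a failure.
    """
    return [
        c.metric
        for c in criteria
        if results.get(c.metric, float("-inf")) < c.minimum
    ]
```

An empty list means the pilot cleared every agreed threshold; any other result gives the review board a concrete reason to stop or rescope the project.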

By engaging in peer consultation and rigorous vendor vetting, CIOs can avoid common industry pitfalls and ensure that their AI stack remains both flexible and secure. Learning from the experiences of others is one of the most effective ways to navigate the complexities of AI scaling. Many leaders find that participating in industry forums or informal networks allows them to discover which vendors are reliable and which strategies have yielded the best results in similar environments. This collective intelligence helps to de-risk the scaling process, allowing the organization to build on the successes of others while avoiding the “battle bruises” associated with unproven technologies.

Navigating the Human and Structural Barriers to AI Expansion

Success depends on bridging the governance gap and ensuring that a “human in the loop” remains a central component of any automated system. As AI systems take on more complex tasks, the cost of errors or hallucinations rises sharply. Establishing a robust governance framework ensures that there are clear lines of accountability and that every automated decision is subject to human oversight. This is particularly important in regulated industries where transparency is a legal requirement. By keeping humans at the center of the process, organizations can leverage the speed of AI while maintaining the judgment and ethics of a professional workforce.

Actionable strategies include prioritizing “white-glove” change management to address employee anxiety and conducting on-site testing to ensure tools work in real-world environmental conditions. The introduction of AI often triggers significant fear regarding job displacement and the loss of agency. Effective leaders address these concerns directly through transparent communication and inclusive training programs. Furthermore, the technical team must understand the physical environment in which the tools will be used. A digital solution that looks great in a climate-controlled office may fail completely if it is intended for use by a field technician working in the dark or in extreme weather. On-site testing ensures that the technology actually empowers the worker rather than becoming a burden.

Organizations must treat data hygiene as a non-negotiable prerequisite, as scaling on a weak data foundation inevitably leads to systemic failure. The “garbage in, garbage out” principle has never been more relevant than it is in the context of artificial intelligence. If the underlying data is biased, incomplete, or inaccurately labeled, the resulting AI outputs will reflect those flaws, potentially at a massive scale. Before any expansion can occur, there must be a concerted effort to standardize data formats, eliminate silos, and ensure the ongoing integrity of the information being fed into the system. High-quality data is the fuel that drives the AI engine, and without it, even the most advanced systems will eventually stall.
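
A basic data-hygiene audit can be sketched in a few lines. The example below counts incomplete and duplicate records after normalizing case and whitespace; the `audit_records` function and the field names are assumptions for illustration, and a production pipeline would add format and label validation on top.

```python
def audit_records(records: list[dict], required: list[str]) -> dict[str, int]:
    """Count records with missing required fields and normalized duplicates."""
    seen = set()
    report = {"total": len(records), "incomplete": 0, "duplicates": 0}
    for rec in records:
        # A record is incomplete if any required field is absent or empty.
        if any(rec.get(field) in (None, "") for field in required):
            report["incomplete"] += 1
        # Normalize case and whitespace so "A1" and "a1 " compare as equal.
        key = tuple(str(rec.get(field, "")).strip().lower() for field in required)
        if key in seen:
            report["duplicates"] += 1
        else:
            seen.add(key)
    return report
```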

From Experimental Curiosity to Consequential Infrastructure

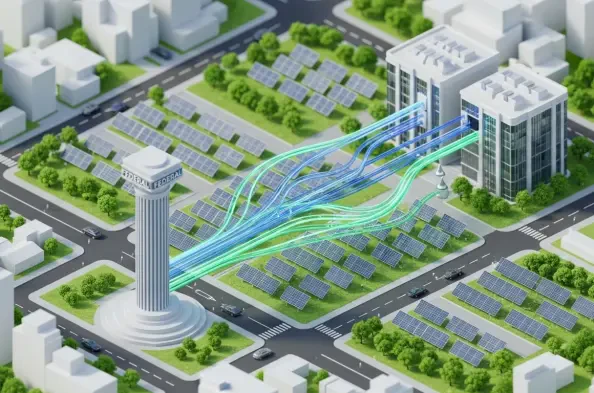

The evolution of enterprise AI is a shift from treating the technology as a novelty to integrating it as a core, reliable component of corporate infrastructure. For a long time, AI was viewed as an exotic addition to the tech stack—something to be experimented with but not relied upon for mission-critical operations. That perspective has changed as the technology has matured. Today, the goal is for AI to become as ubiquitous and invisible as the internet or the electrical grid. It should be a quiet, efficient force that powers the business, rather than a loud and flashy distraction. This transition requires a fundamental shift in mindset from the boardroom to the front line, where the focus is on stability and long-term utility.

Ongoing success requires a balance of technological precision, industrial empathy, and the strategic patience to endure the “battle bruises” of innovation. Scaling AI is a difficult and often messy process that involves a great deal of trial and error. It requires leaders who are technically savvy enough to manage the complexities of the software, but also empathetic enough to understand how those tools affect the lives of their employees and customers. There will inevitably be setbacks, technical glitches, and moments of doubt. The organizations that ultimately thrive will be those that possess the resilience to stay the course, learning from every mistake and using those lessons to build a stronger, more efficient enterprise.

Organizations that focus on measurable ROI and human-centric design will be the ones to finally bridge the gap between AI potential and operational reality. The path toward successful scaling is paved with discipline, data, and a deep respect for the human element of business. By moving away from the hype and focusing on the practical application of technology to solve real problems, enterprises can unlock the true transformative power of artificial intelligence. The future of corporate AI is not found in the most complex algorithms, but in the most effective integrations: those that make the business faster, safer, and more responsive to the needs of the world. The journey toward this future is defined by a transition from the excitement of what could be to the rigorous implementation of what actually works.