The silent hum of a thousand interconnected GPUs in a modern data center represents more than raw processing power; it is the heartbeat of a global economy that increasingly relies on the integrity of artificial intelligence. As organizations transition from traditional compute hubs to facilities designed specifically for AI workloads, the focus has shifted toward securing the massive computational power and intellectual property housed within these environments. This review explores the evolution of AI data center security, its key features, its performance trade-offs, and its impact on modern applications. The purpose of this analysis is to provide a thorough understanding of current capabilities and the trajectory of future developments in a landscape where security is no longer an optional layer but a foundational requirement.

The technological shift toward artificial intelligence has redefined the data center from a passive storage facility into an active, high-velocity engine of creation. Unlike legacy environments that primarily handled transactional data, the AI data center manages complex neural networks and massive datasets that require constant, high-speed movement across internal fabrics. This shift has rendered traditional security methods obsolete, as the sheer speed of AI processing can easily outpace legacy monitoring tools. Consequently, a new paradigm of protection has emerged, focusing on the specific vulnerabilities of accelerated computing and the unique value of the digital assets being created.

The Evolution of AI Data Center Architecture

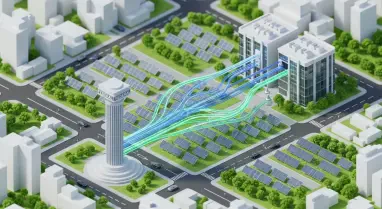

The journey toward modern AI security began with a fundamental reassessment of how data flows within a facility. In the legacy “castle-and-moat” paradigm, security was almost entirely perimeter-based, relying on firewalls and entry points to keep threats out while leaving the internal network relatively open. However, as AI workloads began to require massive “East-West” traffic between thousands of processor nodes, this model failed. Modern environments have moved away from static perimeters toward a more fluid, hardware-accelerated security posture that treats every single packet and process as a potential threat, regardless of its location within the network.

This transition has been driven by the realization that AI security is now synonymous with national and corporate economic stability. When a data center is used to train a sovereign large language model or a proprietary trading algorithm, the weights of that model represent billions of dollars in research and development. Therefore, the modern architecture has evolved to prioritize the protection of these “crown jewels” at every stage of the lifecycle. This evolution reflects a broader technological trend where the physical infrastructure and the logical software layers are becoming inseparable, creating a holistic environment where security is baked into the silicon itself.

Modern facilities are characterized by their ability to handle dense power requirements and extreme heat, but their most significant evolution is in their logical design. The integration of high-bandwidth memory and specialized interconnects has allowed for a more granular approach to monitoring. Instead of checking data at a single gateway, modern security protocols are distributed across the entire fabric. This allows for real-time inspection of data as it moves between GPUs, ensuring that even the most subtle anomalies can be detected before they escalate into a full-scale breach or data exfiltration event.

Core Pillars of Modern AI Security Infrastructure

Zero Trust Network Architecture: Identity-Centric Defense

The principle of “never trust, always verify” forms the backbone of the modern AI data center. In this environment, the concept of a “trusted internal network” has been completely eliminated to prevent lateral movement by sophisticated actors. Every request, whether it comes from a human administrator or an automated microservice, must be authenticated and authorized. This is particularly critical in AI environments where automated services frequently spin up thousands of ephemeral containers to handle specific training tasks, each of which must be strictly isolated from other workloads to prevent cross-contamination or unauthorized access.

Identity in these centers has evolved beyond simple usernames and passwords to encompass cryptographically verifiable identities for non-human workloads. Each AI service is assigned a unique, machine-level identity that allows the system to track its behavior with extreme precision. If an automated service that typically accesses training data suddenly attempts to communicate with a model’s weights or modify system firmware, the Zero Trust architecture can instantly revoke its access. This level of granularity ensures that even if one component is compromised, the rest of the ecosystem remains shielded, effectively compartmentalizing the risk.
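The revoke-on-deviation behavior described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a description of any particular product: the `WorkloadIdentity` and `PolicyEngine` names, the resource labels, and the "revoke on first out-of-scope request" rule are all hypothetical, and the cryptographic verification of the identity itself is assumed to have happened before `authorize` is called.

```python
from dataclasses import dataclass


@dataclass
class WorkloadIdentity:
    """Hypothetical machine-level identity for a non-human workload."""
    workload_id: str
    allowed_resources: frozenset
    revoked: bool = False


class PolicyEngine:
    """Toy Zero Trust policy check: every request is evaluated, none is trusted by default."""

    def __init__(self):
        self._identities = {}

    def register(self, identity):
        self._identities[identity.workload_id] = identity

    def authorize(self, workload_id, resource):
        identity = self._identities.get(workload_id)
        # Unknown or previously revoked workloads are always denied.
        if identity is None or identity.revoked:
            return False
        # An out-of-scope request is denied and the identity is revoked,
        # so later requests from the same workload also fail.
        if resource not in identity.allowed_resources:
            identity.revoked = True
            return False
        return True


engine = PolicyEngine()
engine.register(WorkloadIdentity("trainer-7", frozenset({"training-data"})))

first = engine.authorize("trainer-7", "training-data")    # in scope
second = engine.authorize("trainer-7", "model-weights")   # out of scope, triggers revocation
third = engine.authorize("trainer-7", "training-data")    # denied because identity is revoked
```

In practice the decision to revoke would likely weigh more context than a single request, but the sketch shows the compartmentalization idea: one misbehaving workload loses access without affecting any other identity.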

Silicon-Level Security and Hardware Root-of-Trust

Securing an AI data center now requires going deep into the physical hardware, specifically the GPUs and TPUs that drive the computation. Silicon-level security involves establishing a hardware “root-of-trust,” an immutable security foundation built directly into the processor. This foundation ensures that only verified, signed firmware can be loaded during the boot process. By preventing unauthorized code from running at the most basic hardware level, organizations can protect against “bootkits” and other persistent threats that could otherwise hide from higher-level operating system security tools.
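The "verify before load" rule can be illustrated with a small Python sketch. It makes one loud simplification: a real root-of-trust verifies asymmetric signatures against a public key anchored in hardware, whereas this example uses an HMAC with a shared key purely as a stand-in so that it runs with the standard library alone. The key value, stage names, and function names are hypothetical.

```python
import hashlib
import hmac

# Hypothetical stand-in for a key anchored in silicon. Real hardware would hold
# a public key and verify asymmetric signatures instead of using a shared secret.
ROOT_KEY = b"hypothetical-root-of-trust-key"


def sign_firmware(image, key=ROOT_KEY):
    """Produce an authentication tag for a firmware image (stand-in for vendor signing)."""
    return hmac.new(key, image, hashlib.sha256).digest()


def verify_boot_chain(stages, key=ROOT_KEY):
    """Load stages in order and stop at the first image whose tag does not verify.

    stages is a list of (name, image_bytes, tag) tuples.
    Returns the names of the stages that were allowed to load.
    """
    loaded = []
    for name, image, tag in stages:
        expected = hmac.new(key, image, hashlib.sha256).digest()
        if not hmac.compare_digest(expected, tag):
            break  # refuse this stage and everything after it
        loaded.append(name)
    return loaded


bootloader = b"bootloader-image"
firmware = b"gpu-firmware-image"
driver = b"driver-image"

stages = [
    ("bootloader", bootloader, sign_firmware(bootloader)),
    # The image was modified after signing, so verification fails here.
    ("gpu-firmware", firmware + b"-tampered", sign_firmware(firmware)),
    ("driver", driver, sign_firmware(driver)),
]

loaded = verify_boot_chain(stages)
```

Stopping the chain at the first failure reflects the point made above: code that cannot be verified never runs, so nothing loaded later can be built on top of it.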

These secure boot protocols are designed to add negligible overhead, so that the verification process does not slow down the rapid scaling required for AI training. Moreover, hardware-level encryption is now used to protect memory against a common attack vector known as memory extraction. During model training, massive amounts of proprietary data are held in the GPU’s high-bandwidth memory; memory encryption keeps this data encrypted while it resides in memory, including during processing. This makes it far harder for malicious actors to physically tap the hardware or use software exploits to scrape the memory for model weights or sensitive training samples.

Software Supply Chain Integrity and Data Lineage

The integrity of the AI data center is also heavily dependent on the software supply chain, particularly the open-source frameworks like PyTorch and TensorFlow that form the basis of most AI development. Software Composition Analysis (SCA) has become a vital tool for identifying vulnerabilities within these complex libraries and their numerous dependencies. By continuously scanning for known security flaws and ensuring that all third-party code is coming from trusted sources, organizations can prevent “supply chain attacks” that attempt to inject malicious code into the very tools used to build AI models.
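At its core, the scanning step compares each pinned dependency against a list of known-vulnerable version ranges. The Python sketch below shows that comparison only. The package names, advisory identifiers, and version ranges are invented for illustration and do not correspond to real advisories, and the version parser assumes simple dotted numeric versions.

```python
def parse_version(text):
    """Turn '1.4.2' into (1, 4, 2). Assumes purely numeric dotted versions."""
    return tuple(int(part) for part in text.split("."))


# Hypothetical advisory data: package -> list of (advisory_id, introduced, fixed).
# A version is affected if introduced <= version < fixed.
ADVISORIES = {
    "examplelib": [("HYPO-0001", "1.0.0", "1.4.2")],
    "tensor-helper": [("HYPO-0002", "0.9.0", "0.9.5")],
}


def scan(dependencies, advisories=ADVISORIES):
    """Return (package, version, advisory_id) for every affected dependency."""
    findings = []
    for name, version in sorted(dependencies.items()):
        for advisory_id, introduced, fixed in advisories.get(name, []):
            if parse_version(introduced) <= parse_version(version) < parse_version(fixed):
                findings.append((name, version, advisory_id))
    return findings


# Hypothetical pinned dependencies of a training environment.
pinned = {"examplelib": "1.3.0", "tensor-helper": "0.9.5", "otherlib": "2.0.0"}
findings = scan(pinned)
```

Real SCA tools also resolve transitive dependencies and check package provenance, which this sketch leaves out.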

Beyond the code, the integrity of the “data oceans” used for training is of paramount importance. Adversarial poisoning attacks, where malicious data is subtly introduced to bias a model’s output, represent a significant threat to the reliability of AI. To counter this, modern security involves rigorous data lineage tracking and integrity checksums. Every piece of information that enters the training pipeline is tagged and tracked from its origin to the final model. This allows researchers to verify the purity of their datasets and provides a clear audit trail that can be used to identify and remove any suspicious or corrupted data before it can influence the learning process.
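A simple way to picture lineage tracking with integrity checksums is a manifest that records, for each training record, where it came from and a SHA-256 digest of its contents at ingestion time. The following Python sketch assumes an in-memory dictionary of records and a hypothetical source label; a production pipeline would persist and protect the manifest itself.

```python
import hashlib


def record_digest(payload):
    """SHA-256 digest of a record's raw bytes."""
    return hashlib.sha256(payload).hexdigest()


def build_manifest(records, source):
    """Tag every record with its origin and checksum at ingestion time."""
    return {
        record_id: {"source": source, "sha256": record_digest(payload)}
        for record_id, payload in records.items()
    }


def find_suspect_records(records, manifest):
    """Return ids of records that are untracked or whose contents changed since ingestion."""
    suspect = []
    for record_id, payload in sorted(records.items()):
        entry = manifest.get(record_id)
        if entry is None or entry["sha256"] != record_digest(payload):
            suspect.append(record_id)
    return suspect


# Hypothetical dataset at ingestion time.
ingested = {"sample-1": b"cat,0", "sample-2": b"dog,1"}
manifest = build_manifest(ingested, source="vendor-a/batch-17")

# Later, before training: one record was altered and one appeared without lineage.
current = {"sample-1": b"cat,0", "sample-2": b"dog,0", "sample-3": b"bird,1"}
suspect = find_suspect_records(current, manifest)
```

Checksums of this kind detect tampering after ingestion; they do not by themselves detect data that was already poisoned at the source, which is why the origin tag matters for the audit trail.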

Emerging Trends in Defensive AI and Model Protection

A fascinating development in the field is the rise of “Defensive AI,” where machine learning models are deployed specifically to monitor and protect other AI workloads. These defensive systems analyze patterns of behavior across the data center, looking for signs of GPU memory scraping, unusual data exfiltration, or unauthorized model queries. Because they operate at the same speed as the workloads they protect, these systems can respond to threats in milliseconds, providing a level of protection that human analysts simply cannot match. This creates a recursive security loop where the technology used to create value is also the primary tool used to defend it.

Innovation in this space also includes digital watermarking for model weights. This technique embeds a unique, invisible signal into the neural network’s parameters, allowing an organization to prove ownership of a model if it is ever stolen and used by another party. Furthermore, real-time anomaly detection for GPU memory access is becoming more common, allowing the system to detect if a process is attempting to access memory regions it should not be touching. This shift in industry behavior reflects the reality that AI models have become the primary “crown jewels” of corporate intellectual property, necessitating a level of protection previously reserved for the most sensitive government secrets.
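As a rough illustration of anomaly detection on memory access behavior, the Python sketch below flags an observation that deviates strongly from a process's own baseline using a z-score. This is a deliberately simple statistical stand-in, not the learned models the text describes; the metric (memory reads per second), the baseline values, and the threshold of 3.0 are assumptions chosen for the example.

```python
from statistics import mean, stdev


def is_anomalous(baseline, observed, threshold=3.0):
    """Flag an observation whose z-score against the baseline exceeds the threshold."""
    mu = mean(baseline)
    sigma = stdev(baseline)
    if sigma == 0:
        # A perfectly flat baseline: any deviation at all is unusual.
        return observed != mu
    return abs(observed - mu) / sigma > threshold


# Hypothetical baseline of GPU memory reads per second for one training process.
baseline_reads = [100, 102, 98, 101, 99, 100, 103, 97]

normal_spike = is_anomalous(baseline_reads, 104)   # within normal variation
scrape_like = is_anomalous(baseline_reads, 450)    # far outside the baseline
```

A per-process baseline keeps the check cheap enough to run continuously, at the cost of missing slow, low-volume exfiltration that stays inside normal variation.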

The market has also seen a significant shift toward proactive threat hunting within the AI pipeline. Instead of waiting for an alarm to go off, defensive systems now use generative models to simulate potential attacks, testing the resilience of the infrastructure against hypothetical zero-day exploits. This “red teaming” at scale allows security teams to identify and patch vulnerabilities before they can be exploited by real-world adversaries. As models become more complex and their internal workings harder to interpret, these automated defensive layers provide a necessary safeguard against both external attacks and internal configuration errors.

Real-World Applications and Industrial Deployment

The practical implementation of secure AI compute is most visible in sectors where data sensitivity is paramount, such as finance and healthcare. In the financial industry, high-performance computing clusters are used to train fraud detection models that process millions of transactions per second. Security in these centers ensures that the underlying financial data remains private and that the models themselves are not manipulated by sophisticated attackers. Similarly, in healthcare, secure AI infrastructure allows researchers to train diagnostic models on massive sets of patient records while strictly adhering to privacy regulations like HIPAA, ensuring that sensitive medical data never leaves a protected environment.

Defense and national security applications also rely heavily on these fortified environments. Secure LLM training clusters are used to analyze vast amounts of intelligence data, requiring a level of physical and logical isolation that is significantly higher than a standard commercial data center. An interesting use case in these environments is the protection of the building management systems (BMS) that control the power and cooling for the AI hardware. Since an attack on the cooling system could physically damage millions of dollars in equipment, the convergence of IT and Operational Technology (OT) security has become a critical focus for modern industrial deployments.

Moreover, the rise of sovereign AI initiatives—where nations build their own large-scale compute facilities—has accelerated the adoption of these security standards. These projects often involve multi-tenant environments where different government agencies or private companies share a massive hardware pool. In such scenarios, robust isolation through virtualization and hardware-based partitioning is essential. The success of these deployments has demonstrated that it is possible to maintain extreme performance and high security simultaneously, provided that the security architecture is integrated into the facility’s design from the very beginning.

Challenges to Widespread Adoption and Technical Hurdles

Despite the clear benefits, implementing high-level security in an AI data center is fraught with technical hurdles, the most significant being the “performance tax.” Security protocols, especially those involving encryption and deep packet inspection, can introduce latency into a system where every microsecond counts. Finding the balance between extreme performance and robust protection is a constant struggle for engineers. If security measures slow down the training of a model by even a few percentage points, the resulting cost in time and electricity can be measured in millions of dollars, making optimization a critical part of the security deployment process.
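The scale of this "performance tax" is easy to estimate with back-of-the-envelope arithmetic. The Python sketch below uses entirely hypothetical figures (cluster size, hourly cost per GPU, run length, and a 3% slowdown) to show how a small percentage overhead translates into cost; none of these numbers come from a real deployment.

```python
def overhead_cost(gpu_count, hourly_cost_per_gpu, baseline_hours, overhead_fraction):
    """Extra spend caused by a security-related slowdown on one training run."""
    extra_hours = baseline_hours * overhead_fraction
    return gpu_count * hourly_cost_per_gpu * extra_hours


# Hypothetical inputs: 10,000 GPUs at 2.00 per GPU-hour, a 2,000-hour run, 3% slowdown.
extra_spend = overhead_cost(
    gpu_count=10_000,
    hourly_cost_per_gpu=2.0,
    baseline_hours=2_000,
    overhead_fraction=0.03,
)
print(f"Extra cost for one run: about {extra_spend:,.0f}")
```

Under these assumed inputs the slowdown adds roughly 1.2 million in compute cost for a single run, which is why engineers treat every percentage point of security overhead as an optimization target.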

Regulatory issues and compliance bottlenecks also present a significant challenge. As governments around the world introduce new laws governing AI safety and data privacy, data center operators must navigate a complex web of requirements that vary by region. This often slows down the deployment of agile infrastructure, as every new security feature must be vetted for compliance. Additionally, there is a severe shortage of specialized cybersecurity talent. Managing a modern AI data center requires expertise in silicon-level security, high-performance networking, and machine learning—a combination of skills that is currently rare in the workforce, leading to a bottleneck in the scaling of these fortified facilities.

The physical constraints of power and cooling also play an unexpected role in security. Modern AI hardware is so power-hungry that any disruption in the supply chain for electrical components or specialized cooling fluids can become a security vulnerability. Furthermore, the physical size and complexity of these centers make them difficult to monitor comprehensively. As facilities grow to house tens of thousands of GPUs, the volume of logs and telemetry data can overwhelm traditional monitoring systems, requiring yet more AI-driven tools just to manage the security data itself.

Future Outlook: Autonomous Resilience and Confidential Computing

The future of the AI data center is moving toward a state of “resilience by design,” where the infrastructure is capable of self-healing and autonomous defense. One of the most promising developments in this area is Universal Confidential Computing, which utilizes Trusted Execution Environments (TEEs) to protect data in use. Unlike traditional encryption, which protects data only while it is stored or in transit, TEEs keep data encrypted in memory while it is being processed and expose it in plaintext only inside a hardware-isolated region of the processor. This is a significant advance in security, as it is designed to prevent even the administrators of the data center or the cloud provider from accessing the sensitive information being processed.
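One common pattern around TEEs, not spelled out in the text above and added here as an assumption, is to release a decryption key to a workload only after the environment proves it is running approved code. The Python sketch below models just that gating decision: the measurement values and function names are hypothetical, and a real deployment would verify a hardware-signed attestation report rather than compare a bare hash.

```python
import hashlib
import hmac

# Hypothetical expected measurement (hash) of the approved enclave code.
EXPECTED_MEASUREMENT = hashlib.sha256(b"approved-training-enclave-v1").hexdigest()


def release_key(reported_measurement, dataset_key, expected=EXPECTED_MEASUREMENT):
    """Hand over the dataset key only if the reported measurement matches the approved one.

    Returns the key on success and None otherwise. Signature checks on the
    attestation report are assumed to happen elsewhere.
    """
    if hmac.compare_digest(reported_measurement, expected):
        return dataset_key
    return None


dataset_key = b"hypothetical-dataset-key"

approved = release_key(EXPECTED_MEASUREMENT, dataset_key)
tampered = release_key(hashlib.sha256(b"modified-enclave").hexdigest(), dataset_key)
```

Because the key never leaves the broker unless the measurement matches, an operator with access to the host but not to an approved enclave has nothing to decrypt the data with.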

We are also likely to see the emergence of fully autonomous AI-on-AI defense systems. These platforms will be capable of self-configuring in response to new threats, automatically isolating compromised hardware and rerouting traffic to maintain operational continuity without any human intervention. This level of autonomy will be necessary as the speed of cyberattacks continues to increase, eventually reaching a point where human response times are simply too slow. The integration of these systems will mark the final step in the transformation of the data center into a true fortress of intelligence.

Furthermore, as the industry moves toward more decentralized and edge-based AI, the security protocols developed for the large-scale data center will need to be adapted for smaller, more vulnerable environments. This “security-at-the-edge” will rely on the same principles of silicon-level trust and identity-centric defense but will need to operate with much lower power and compute overhead. The innovations currently being perfected in the massive compute hubs of today will serve as the blueprints for a global network of secure, intelligent devices, ensuring that the engine of AI can run safely across every corner of the digital world.

Assessment of the Modern AI Security Landscape

The evolution of the AI data center has demonstrated that computational performance and robust protection are deeply interdependent. The industry has recognized that the massive investments required for modern artificial intelligence can only be justified if the underlying assets, the data and the models, are protected by a security architecture as sophisticated as the AI itself. Organizations have prioritized the integration of silicon-level trust and Zero Trust principles, moving away from the outdated perimeter models of the past. This shift ensures that the data center functions not just as a hub for calculation, but as a fortified sanctuary for intellectual property.

The transition toward autonomous resilience and confidential computing provides a necessary framework for handling the increasingly sensitive nature of AI workloads. By embedding security into the physical hardware and the logical flow of information, the industry has mitigated many of the risks associated with high-speed, high-volume data processing. The development of defensive AI systems has been particularly instrumental in this regard, as it allows security teams to keep pace with the rapid evolution of digital threats. These advancements collectively reinforce the idea that a facility can only be considered truly modern if it is built on a foundation of security.

In the end, the long-term impact on the industry is the realization that security is the fundamental architecture allowing the engine of intelligence to run safely. The focus has moved beyond simple defense and toward the creation of a resilient ecosystem capable of sustaining innovation in the face of constant adversity. As AI continues to integrate into every facet of society, the lessons learned in the construction and operation of these secure data centers will remain vital. The verdict on the current state of the technology is clear: the most successful implementations are those that treat security as a primary driver of performance, rather than a secondary constraint on growth.