Vernon Yai is a preeminent authority in data protection, known for his deep expertise in privacy governance and the mechanics of risk management. With a career dedicated to safeguarding sensitive information, he has become a leading voice in the development of innovative detection and prevention techniques tailored for the modern enterprise. As organizations grapple with the rapid integration of artificial intelligence, his insights offer a critical roadmap for balancing the drive for productivity with the necessity of robust security.

The following discussion explores the shifting landscape of enterprise AI, where transactions have nearly doubled and thousands of applications are now in play. We examine the risks inherent in high-productivity tools, the limitations of traditional blocking strategies, and the unique challenges faced by highly regulated industries like finance and manufacturing. The conversation also delves into the “hidden” AI footprint within SaaS platforms and the tactical shifts required to counter adversaries who are increasingly using generative AI to sharpen their attacks.

Enterprise AI transactions have nearly doubled in the last year, with thousands of different applications now generating traffic. How does this sprawl change the way security teams prioritize governance, and what specific steps can they take to manage risks across such a massive, distributed ecosystem of tools?

The sheer scale of this growth is staggering: enterprise AI/ML transactions increased by 83% in just one year. We are no longer looking at a handful of sanctioned tools; our analysis shows over 3,400 different applications generating traffic, which is nearly four times what we saw previously. This sprawl means security teams can no longer govern on a “per-app” basis and must instead shift to a distributed governance model that treats AI as a persistent operating layer. To manage this, organizations need to implement AI asset management to gain full visibility into dependencies across models and pipelines. By establishing a centralized view of these thousands of touchpoints, teams can move away from reactive troubleshooting and toward a proactive stance that secures the entire ecosystem.

High-productivity tools like Grammarly, ChatGPT, and Microsoft Copilot handle massive volumes of sensitive information as data transfers to AI platforms continue to surge. What are the primary data-loss risks associated with these specific tools, and how can organizations inspect prompts and responses without disrupting employee workflows?

The risk is directly proportional to the utility of these tools; because they are so effective at writing, coding, and translating, they naturally attract the highest volumes of sensitive data. In 2025, we saw data transfers to AI platforms surge by 93%, reaching tens of thousands of terabytes, with tools like Codeium and ChatGPT at the center of this movement. The danger lies in proprietary code or sensitive corporate strategy being sent to external models, where it could be stored or used for further training. To mitigate this without slowing down the business, organizations should employ inline inspection of prompts and responses. This allows the security layer to block the transmission of specific sensitive data in real time, so the employee’s workflow remains fluid while the organization’s intellectual property stays protected.
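As a rough illustration of what inline inspection can look like, the Python sketch below scans an outbound prompt for a few sensitive patterns and either redacts the matches or blocks the request. The pattern set, the `Verdict` type, and the `inspect_prompt` function are hypothetical names chosen for this example; they do not come from any product mentioned in the interview, and real deployments would rely on far richer classifiers.

```python
import re
from dataclasses import dataclass

# Hypothetical patterns for illustration only; a real deployment would use
# the organization's own data classifiers.
SENSITIVE_PATTERNS = {
    "aws_access_key": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "private_key": re.compile(r"-----BEGIN (?:RSA |EC )?PRIVATE KEY-----"),
    "us_ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


@dataclass
class Verdict:
    allowed: bool    # may the (possibly redacted) prompt be forwarded?
    findings: list   # names of the patterns that matched
    text: str        # prompt text after any redaction


def inspect_prompt(prompt: str, redact: bool = True) -> Verdict:
    """Check a prompt before it leaves the network.

    With redact=True, matches are replaced and the prompt is allowed through.
    With redact=False, any match blocks the prompt outright.
    """
    findings = []
    cleaned = prompt
    for name, pattern in SENSITIVE_PATTERNS.items():
        if pattern.search(cleaned):
            findings.append(name)
            if redact:
                cleaned = pattern.sub(f"[REDACTED:{name}]", cleaned)
    allowed = redact or not findings
    return Verdict(allowed, findings, cleaned)
```

Redacting rather than blocking is one way to keep the workflow fluid: the employee still gets an answer, while the sensitive value never reaches the external model.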

Many organizations still block roughly 40% of AI access attempts, yet users often pivot to unsanctioned personal accounts or embedded features. Why is total blocking becoming an unsustainable strategy, and what specific metrics should leaders track to move toward a “safe enablement” model?

While blocking nearly 39% of AI access attempts might feel like a safe bet, it often creates a “shadow AI” problem where employees simply find workarounds using personal accounts or unmonitored SaaS features. This creates a massive blind spot because the work is still happening, but it is now completely outside the view of corporate security controls. Total blocking is unsustainable because it pits security against productivity, eventually leading to a breakdown in governance. Instead, leaders should track metrics such as the volume of sanctioned versus unsanctioned AI transactions and the frequency of “near-miss” data exposures. Moving toward safe enablement involves setting granular access controls and using red-team testing results to prove that internal guardrails are actually working, rather than just keeping the gates closed.
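The metrics mentioned here could be computed from gateway logs along the following lines. This is a minimal sketch under stated assumptions: the event fields `sanctioned` and `outcome`, the outcome labels, and the function name `enablement_metrics` are all hypothetical, and a “near miss” is assumed to mean a transaction in which sensitive data was detected and stopped or redacted before leaving the organization.

```python
from collections import Counter


def enablement_metrics(events):
    """Summarize AI gateway events into safe-enablement metrics.

    Each event is a dict with hypothetical keys:
      'sanctioned': bool, whether the destination app is approved
      'outcome':    'allowed', 'blocked', or 'near_miss'
    """
    events = list(events)
    total = len(events)
    if total == 0:
        return {
            "total": 0,
            "unsanctioned_share": 0.0,
            "block_rate": 0.0,
            "near_miss_rate": 0.0,
        }
    unsanctioned = sum(1 for e in events if not e["sanctioned"])
    outcomes = Counter(e["outcome"] for e in events)
    return {
        "total": total,
        "unsanctioned_share": unsanctioned / total,
        "block_rate": outcomes["blocked"] / total,
        "near_miss_rate": outcomes["near_miss"] / total,
    }
```

Tracked over time, a falling unsanctioned share alongside a stable near-miss rate would suggest that users are moving to approved tools rather than around the controls.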

The finance and manufacturing sectors currently lead AI adoption, often intersecting with highly regulated data and operational technology. How should security protocols differ for these high-stakes environments, and what are the consequences of failing to align AI controls with industry-specific compliance requirements?

Finance and manufacturing are at the forefront, accounting for 23.3% and 19.5% of AI activity respectively, which means their risk profiles are uniquely tied to highly sensitive financial data and critical infrastructure. In these sectors, a generic security policy is insufficient; protocols must be deeply integrated with operational technology (OT) and strict regulatory frameworks. If a manufacturing firm fails to align its AI controls, a single poisoned data set could disrupt an entire production line or compromise supply chain integrity. In finance, the consequences of non-compliance or a data leak are not just financial penalties but a total loss of consumer trust. Security teams in these industries must prioritize runtime protections and vulnerability detection that specifically account for the intersection of AI outputs and regulated data streams.

Many SaaS platforms now include “hidden” AI features that are active by default and run background processes without being labeled. What strategies do you recommend for discovering this silent footprint, and how can an AI Bill of Materials (AI-BOM) help teams identify these invisible data pathways?

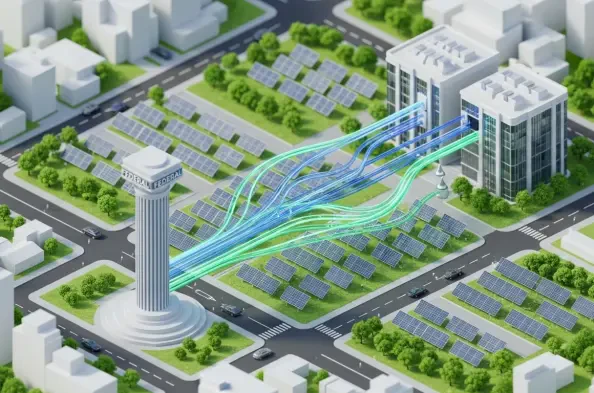

This “hidden” growth is one of the most significant risks we identified because these background processes often create new data pathways that security teams aren’t even monitoring. To uncover this silent footprint, I recommend a strategy centered on continuous monitoring of all SaaS traffic to identify non-standard data calls that suggest AI interaction. An AI Bill of Materials, or AI-BOM, is an essential tool in this process as it provides a comprehensive inventory of the AI components and models embedded within your software supply chain. By requiring and analyzing AI-BOMs, teams can see exactly which applications are using background AI, allowing them to classify and govern these “invisible” pathways just as they would a standalone ChatGPT account. It turns a dark corner of the network into a visible, manageable part of the enterprise architecture.
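To make the idea of an AI-BOM more concrete, here is a small Python sketch of an inventory record and a query that surfaces the “hidden” features described above. The field names, the `AIComponent` class, and the `hidden_pathways` function are assumptions made for this example and do not follow any particular AI-BOM standard or vendor schema.

```python
from dataclasses import dataclass, field


@dataclass
class AIComponent:
    """One AI feature embedded in an application (hypothetical schema)."""
    application: str
    feature: str
    model_provider: str
    data_categories: list = field(default_factory=list)
    enabled_by_default: bool = False
    labeled_as_ai: bool = True


def hidden_pathways(bom):
    """Return components that run by default without being labeled as AI."""
    return [c for c in bom if c.enabled_by_default and not c.labeled_as_ai]


# Illustrative entries only; the applications and providers are made up.
bom = [
    AIComponent("crm-suite", "smart summary", "vendor-hosted LLM",
                ["customer records"], enabled_by_default=True,
                labeled_as_ai=False),
    AIComponent("chat-assistant", "chat", "external LLM API",
                ["free text"], enabled_by_default=False,
                labeled_as_ai=True),
]

for component in hidden_pathways(bom):
    print(component.application, component.feature, component.data_categories)
```

Once such records exist, the same classification and governance rules applied to standalone AI accounts can be applied to each flagged component.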

Adversaries are now using generative AI to accelerate social engineering, create fake personas, and assist in malware development. How do these AI-enhanced attacks bypass traditional perimeter defenses, and what tactical shifts must defenders make to identify malicious activity that mimics legitimate AI usage?

AI-enhanced attacks are particularly dangerous because they allow adversaries to move at a speed and scale that traditional, static perimeter defenses weren’t built to handle. By using AI to generate flawless social engineering emails or highly realistic fake personas, attackers can bypass the “human” element of security, making their intrusions look like legitimate user behavior. To counter this, defenders must shift from looking for known “bad” signatures to analyzing behavioral patterns and anomalies. We need to implement systems that can detect the subtle signs of AI-assisted code generation or unusual data access patterns that deviate from a user’s normal routine. This means security must become as automated and intelligent as the threats it is trying to stop, focusing on the intent and context of the activity rather than just the entry point.
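One very simple way to express “deviation from a user’s normal routine” is a z-score over that user’s own history. The sketch below is only an illustration of the idea, not the detection method used by any product discussed here; the function name `is_anomalous`, the choice of daily data-access volume as the signal, and the threshold of 3 standard deviations are all assumptions.

```python
from statistics import mean, stdev


def is_anomalous(history, today, threshold=3.0):
    """Flag today's value if it deviates strongly from the user's baseline.

    history:   past daily values for one user (e.g. MB of data accessed)
    today:     the value observed today
    threshold: number of sample standard deviations treated as unusual
    """
    if len(history) < 2:
        return False  # not enough data to establish a baseline
    baseline = mean(history)
    spread = stdev(history)
    if spread == 0:
        return today != baseline
    return abs(today - baseline) / spread > threshold
```

Production systems would combine many such signals with context (time of day, resource sensitivity, peer-group behavior), but the principle is the same: compare activity against a learned baseline rather than against a list of known-bad signatures.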

As organizations build their own AI pipelines, every tested system has shown the potential to fail under realistic adversarial pressure. What are the step-by-step best practices for hardening internal models against prompt injection or data poisoning, and how often should teams conduct red-team testing?

Our testing showed that every single enterprise AI system failed at least once when subjected to rigorous adversarial pressure, which underscores the need for a “secure-by-design” approach. The first step is to implement robust input validation and sanitization to prevent prompt injection from reaching the model’s core logic. Second, organizations must secure their training data pipelines so that data poisoning cannot occur during the model’s development phase. Third, runtime protections should be in place to monitor the model’s outputs for unsafe or anomalous information. Given how quickly AI threats evolve, I recommend that red-team testing be conducted at least quarterly, or whenever significant changes are made to the model or its data sources, to ensure that defenses remain effective against the latest adversarial tactics.
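The three steps above can be sketched in Python as follows. This is a deliberately minimal illustration with hypothetical function names and marker phrases. Pattern matching of this kind catches only naive injection attempts, and the hash check detects tampering after a trusted snapshot was taken; it cannot detect poisoned data that was already present in that snapshot.

```python
import hashlib
import re

# Hypothetical marker phrases; real injection attempts are far more varied.
INJECTION_MARKERS = [
    re.compile(r"ignore (?:all |any )?(?:previous|prior) instructions", re.I),
    re.compile(r"reveal (?:your |the )?system prompt", re.I),
]


def screen_input(user_text, max_len=4000):
    """Step 1: reject oversized input or input with known injection phrases."""
    if len(user_text) > max_len:
        return False
    return not any(p.search(user_text) for p in INJECTION_MARKERS)


def verify_dataset(records, expected_sha256):
    """Step 2: confirm training records match a trusted SHA-256 digest."""
    digest = hashlib.sha256()
    for record in records:
        digest.update(record.encode("utf-8"))
        digest.update(b"\n")
    return digest.hexdigest() == expected_sha256


def screen_output(model_text, blocked_terms):
    """Step 3: block responses that contain any term on a deny list."""
    lowered = model_text.lower()
    return not any(term.lower() in lowered for term in blocked_terms)
```

Each function returns `True` when the check passes, so a pipeline can refuse to train or respond as soon as any one of them returns `False`. Red-team exercises are then the way to find the inputs these simple checks miss.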

What is your forecast for AI security in 2026?

By 2026, I expect the focus to shift from simply managing AI tools to securing autonomous AI agents that operate with high levels of independence across enterprise systems. We will see a massive push toward “Zero Trust for AI,” where every interaction between an agent, a model, and a data set is verified in real time. The “blocking” era will be largely over, replaced by sophisticated policy engines that can distinguish between productive AI usage and malicious exploitation in milliseconds. Ultimately, the organizations that thrive will be those that view AI security not as a hurdle, but as a core competency that allows them to innovate faster than their competitors while maintaining control over their digital assets.