The quiet migration of artificial intelligence from a peripheral experimental tool to a foundational layer of the enterprise has fundamentally changed how corporate work is structured, yet many leadership teams remain unaware of how deeply it is now embedded. While boards of directors are often preoccupied with the theoretical risks of model bias or vendor compliance, a more immediate and systemic shift is occurring beneath the surface: employees are independently redesigning their daily responsibilities around these tools. The result is a dangerous disconnect between how leadership perceives the technology and how it is actually used, leaving organizations vulnerable to a distinctive form of institutional blindness.

The purpose of this exploration is to dismantle the traditional, often static, approach to technology oversight and replace it with a dynamic framework that prioritizes operational intelligence. By examining the evolution of knowledge work and the emergence of clandestine AI adoption, this guide provides a roadmap for executives and information officers to reclaim visibility and control. Readers can expect to learn how to identify hidden risks in automated workflows, manage the surge in organizational capacity, and ensure that human judgment remains the primary anchor in an increasingly automated environment.

Key Questions in Modern AI Oversight

How Has the Role of Artificial Intelligence Evolved from a Tool to an Operating Layer?

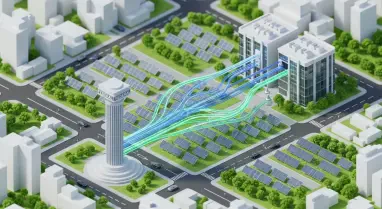

Historically, technology entered the enterprise from the top down: software was vetted by IT, deployed through formal training, and monitored through centralized administrative consoles. This clear separation between the tool and the work allowed leaders to treat technology as an external implement used for specific tasks. Contemporary AI no longer fits that definition, because it embeds itself directly in the sequence of cognitive work. Rather than functioning as a standalone application, it acts as a pervasive layer that drafts communications, scaffolds complex code, and interprets data before a human ever sees the raw input.

As a result, the fundamental unit of risk is no longer the isolated AI model or any single output but the entire reconstructed workflow. When AI acts as an operating layer, it quietly redistributes responsibilities and changes how much scrutiny a human applies to each outcome. High-performing teams frequently move faster than formal procurement cycles, adopting autonomous agents for repetitive functions without waiting for executive approval. Consequently, the way work gets done is changing organically, often outside the view of the very dashboards designed to monitor corporate health.

What Are the Primary Failure Modes in Current Leadership Responses to AI?

Leadership responses to the rapid, decentralized adoption of AI typically fall into one of two damaging extremes. The first failure mode is a defensive posture of overly restrictive policies and outright bans on unapproved tools. Although intended to mitigate risk, this approach often pushes productive experimentation underground, creating a “shadow AI” ecosystem. When employees feel they must hide their most efficient methods to avoid reprimand, those methods fall outside any governance process, and management is left with a false sense of security while invisible vulnerabilities multiply across the company.

Conversely, the second failure mode is one of strategic neglect, where widespread adoption is permitted without any formal framework for accountability. In these environments, the complexity of the business grows unchecked as decisions that once required nuanced human judgment are quietly outsourced to automated systems. The most significant danger here is the erosion of the audit trail; if an AI-generated decision leads to a catastrophic failure, the lack of a formal oversight structure makes it impossible to pinpoint where the human-in-the-loop failed. This unmanaged complexity eventually manifests as a significant long-term liability that can derail even the most successful enterprise.

Why Is Measuring Workflow Penetration More Critical Than Tracking Software Licenses?

Standard IT metrics focus on seat counts and license utilization, but these figures give a misleading picture of how AI actually influences business outcomes: a license shows who can use a sanctioned tool, not which workflows that tool has changed, and it says nothing about tools employees have adopted on their own. To achieve genuine operational intelligence, leaders must look beyond the sanctioned software list and investigate which specific workflows have been fundamentally altered. This requires qualitative assessments, such as departmental surveys and process mapping, to identify where AI-generated content feeds directly into critical business decisions. Understanding the depth of this penetration allows the board to see the “invisible” work being done by agents and automated scripts.

Identifying these patterns also helps leadership detect “governance gaps” before they result in security breaches or regulatory infractions. By analyzing where employees reach for external tools to solve internal inefficiencies, a Chief Information Officer can identify unmet needs within the sanctioned tech stack. This proactive approach transforms governance from a purely punitive function into a strategic partner that enables safer, more efficient ways of working. Instead of asking only whether the company is compliant, leaders can begin to understand how automated assistance is reshaping the company’s value proposition itself.
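As an illustration of what measuring penetration rather than licenses might look like, here is a minimal Python sketch. It assumes the results of a survey or process-mapping exercise have been recorded as a simple inventory of workflows; the `Workflow` record, its fields, the `penetration_report` function, and the tool names in the example are all hypothetical and do not refer to any real product. The sketch reports the share of mapped workflows that contain an AI touchpoint and flags a governance gap wherever AI output feeds a critical decision through a tool outside the sanctioned stack.

```python
from dataclasses import dataclass, field


@dataclass
class Workflow:
    """Hypothetical record from a survey or process-mapping exercise."""
    name: str
    ai_tools: list[str] = field(default_factory=list)  # AI tools observed in this workflow
    feeds_critical_decision: bool = False  # does AI output reach a critical business decision?


def penetration_report(workflows: list[Workflow], sanctioned: set[str]) -> dict:
    """Measure AI penetration by workflows touched, not by licenses issued.

    A governance gap is an AI-assisted workflow that feeds a critical decision
    and relies on at least one tool outside the sanctioned set.
    """
    touched = [w for w in workflows if w.ai_tools]
    gaps = [
        w.name
        for w in touched
        if w.feeds_critical_decision and any(t not in sanctioned for t in w.ai_tools)
    ]
    unsanctioned = sorted({t for w in touched for t in w.ai_tools if t not in sanctioned})
    return {
        "penetration": len(touched) / len(workflows) if workflows else 0.0,
        "governance_gaps": gaps,
        "unsanctioned_tools": unsanctioned,
    }


# Illustrative inventory; workflow and tool names are made up.
inventory = [
    Workflow("sales proposals", ["approved-assistant"]),
    Workflow("credit review", ["external-chatbot"], feeds_critical_decision=True),
    Workflow("invoice matching"),
    Workflow("code review", ["approved-assistant", "browser-plugin"], feeds_critical_decision=True),
]
report = penetration_report(inventory, sanctioned={"approved-assistant"})
print(report)
# {'penetration': 0.75, 'governance_gaps': ['credit review', 'code review'],
#  'unsanctioned_tools': ['browser-plugin', 'external-chatbot']}
```

The design choice that matters is counting workflows touched rather than seats issued: an unsanctioned tool never appears in a license report, but it does appear in a process map. The list of unsanctioned tools also doubles as a first draft of the unmet needs within the sanctioned stack.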

How Should Organizations Manage the Newfound Capacity Created by AI Efficiency?

One of the most overlooked aspects of the AI transition is the sudden creation of organizational capacity: the “saved time” that results from increased throughput. When a sales team uses AI to produce proposals in half the time, it effectively gains open bandwidth that rarely appears on a traditional financial statement, at least in the early stages. This delta between the old and new durations of tasks is a significant strategic asset, yet it is often allowed to evaporate into idle time or unguided activity. Boards frequently fail to ask the most important strategic question: what is being done with the hours that AI has freed up?

Managing this capacity conversion properly is essential for maintaining a competitive edge and withstanding regulatory scrutiny, especially in high-stakes industries such as healthcare or finance. Regulators are increasingly interested in whether efficiency gains have come at the cost of necessary human oversight. By tracking how reclaimed time is reinvested, whether into margin expansion, deeper research, or more intensive quality control, leaders can demonstrate to stakeholders that AI is being used to enhance, not replace, the integrity of their operations. This visibility helps ensure that the organization becomes leaner and more focused, not merely faster.
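The capacity arithmetic described above can be sketched in a few lines of Python. This assumes the organization measures a baseline and an AI-assisted duration for each task type and logs reinvested hours against categories such as the three the text names. The class and function names and the figures in the example are hypothetical and purely illustrative (the proposal task mirrors the “half the time” scenario); they are not measured data. Liberated capacity is the sum of (old duration minus new duration) times volume, and any hours not attributed to a reinvestment category are reported as unaccounted, which is the capacity the text warns can evaporate.

```python
from dataclasses import dataclass


@dataclass
class TaskMeasure:
    """Hypothetical per-task timing data for one reporting period."""
    task: str
    baseline_hours: float  # average duration before AI assistance
    assisted_hours: float  # average duration with AI assistance
    volume: int            # number of times the task was performed


def liberated_hours(measures: list[TaskMeasure]) -> float:
    """Capacity created: (old duration - new duration) x volume, summed over tasks."""
    return sum((m.baseline_hours - m.assisted_hours) * m.volume for m in measures)


def reinvestment_breakdown(capacity: float, allocations: dict[str, float]) -> dict[str, float]:
    """Split liberated capacity into logged reinvestment plus an unaccounted remainder."""
    allocated = sum(allocations.values())
    if allocated > capacity:
        raise ValueError("allocated hours exceed liberated capacity")
    breakdown = dict(allocations)
    breakdown["unaccounted"] = capacity - allocated
    return breakdown


# Illustrative figures only: proposals now take half the time.
measures = [
    TaskMeasure("sales proposal", baseline_hours=8.0, assisted_hours=4.0, volume=30),
    TaskMeasure("contract summary", baseline_hours=2.0, assisted_hours=1.5, volume=40),
]
capacity = liberated_hours(measures)  # 4.0 * 30 + 0.5 * 40 = 140.0 hours
breakdown = reinvestment_breakdown(
    capacity,
    {"margin expansion": 40.0, "deeper research": 20.0, "quality control": 30.0},
)
print(breakdown["unaccounted"])  # 50.0 hours with no recorded use
```

Reporting the unaccounted remainder explicitly, rather than only the reinvested hours, is what lets a board see how much liberated capacity is going unmanaged.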

Summary: Redefining the Governance Framework

Moving toward a sophisticated AI governance model requires shifting from monitoring static systems to analyzing dynamic operational flows. Successful organizations recognize that model risk is only a small fraction of their total exposure and focus instead on how human-AI collaboration is evolving. By implementing frameworks that prioritize workflow penetration and signals of human accountability, leadership teams can identify where judgment is being outsourced and where it needs to be reinforced. This move from passive reporting to active operational intelligence gives leaders a more nuanced understanding of how technology is rewriting the corporate DNA.

Effective management also hinges on the ability to capture and redirect the capacity generated by automated efficiencies. Rather than letting time savings go unmanaged, proactive leaders convert these gains into strategic advantages by deliberately reinvesting the hours saved by machines into high-value human activity. This approach not only satisfies the increasing demands of regulators but also gives the board a clear view of the return on AI investments. The ultimate takeaway is that visibility is the prerequisite for control: without a clear view of how work is changing, governance remains a purely theoretical exercise.

Final Thoughts: The Path Forward for Human-Centric Innovation

As enterprises continue to integrate autonomous systems, the focus must now shift toward the long-term sustainability of the human-AI partnership. The next logical step for leadership is to institutionalize a culture of “governed experimentation,” where employees are encouraged to find efficiencies within a transparent framework that rewards accountability rather than just speed. This involves creating internal “sandboxes” where new workflows can be tested and validated before being scaled across the organization, ensuring that the human element remains the definitive check on automated output.

Furthermore, boards should consider implementing an “Accountability Index” that measures the frequency and quality of human interventions in AI-assisted processes. By treating human judgment as a measurable resource, companies can protect themselves against organizational blindness and ensure that strategic intent is not lost to automation. The goal is to move beyond a defensive posture of risk mitigation toward a future in which AI acts as a sophisticated partner that amplifies human potential without compromising the values or safety of the institution. Balancing this technological surge with rigorous human oversight will be the defining challenge of the coming years.
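One way such an index could be defined, offered only as a hypothetical sketch, is as the product of two ratios: how often a human reviews AI-assisted output (frequency), and how often that review is substantive rather than a rubber stamp (quality). The `AssistedOutput` record, the `accountability_index` function, and the rule that a review counts as substantive if the reviewer changed the output or recorded a rationale are all illustrative assumptions, not an established standard.

```python
from dataclasses import dataclass


@dataclass
class AssistedOutput:
    """Hypothetical audit record for one AI-assisted output."""
    output_id: str
    reviewed: bool            # did a named human review this output?
    changed_output: bool      # did the reviewer edit, reject, or escalate it?
    rationale_recorded: bool  # did the reviewer document why it was accepted?


def accountability_index(outputs: list[AssistedOutput]) -> dict[str, float]:
    """Hypothetical index = review frequency x review quality.

    frequency: reviewed outputs / all AI-assisted outputs
    quality:   substantive reviews / reviewed outputs, where a review counts as
               substantive if it changed the output or recorded a rationale
    """
    reviewed = [o for o in outputs if o.reviewed]
    substantive = [o for o in reviewed if o.changed_output or o.rationale_recorded]
    frequency = len(reviewed) / len(outputs) if outputs else 0.0
    quality = len(substantive) / len(reviewed) if reviewed else 0.0
    return {"frequency": frequency, "quality": quality, "index": frequency * quality}


# Illustrative audit sample: two substantive reviews, two rubber stamps, one unreviewed.
sample = [
    AssistedOutput("memo-1", reviewed=True, changed_output=True, rationale_recorded=False),
    AssistedOutput("memo-2", reviewed=True, changed_output=False, rationale_recorded=True),
    AssistedOutput("memo-3", reviewed=True, changed_output=False, rationale_recorded=False),
    AssistedOutput("memo-4", reviewed=True, changed_output=False, rationale_recorded=False),
    AssistedOutput("memo-5", reviewed=False, changed_output=False, rationale_recorded=False),
]
result = accountability_index(sample)
print({k: round(v, 2) for k, v in result.items()})
# {'frequency': 0.8, 'quality': 0.5, 'index': 0.4}
```

Multiplying the two ratios means a process cannot score well on frequent but perfunctory reviews. The combined figure reduces to substantive reviews divided by all AI-assisted outputs, while the separate components show which of the two is lagging.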