The flickering lights on a server rack used to represent simple connectivity, but today they signify the heartbeat of an autonomous entity that breathes, learns, and recalibrates every millisecond. For decades, IT leaders viewed the data center as a high-tech warehouse: a static facility where the primary goal was keeping the lights on and the racks cool. This perspective treated infrastructure as a passive container for hardware, where success was measured by uptime and physical security. However, as AI agents begin to make autonomous decisions and workloads shift across a global tapestry of cloud and edge nodes, the traditional “building” metaphor has collapsed. The data center has evolved from a silent repository of servers into a high-speed control system that must regulate itself in real time or risk spiraling into digital chaos.

This metamorphosis is driven by the reality that modern digital operations have outpaced human reaction times. In 2026, the data center is no longer a destination; it is a distributed engine of execution that spans multiple geographic regions and service providers. At the core of this transformation is a shift from managing “stuff” to managing “behavior.” Because software can now change its own parameters, the infrastructure supporting it must act as a governor, keeping autonomous actions within the boundaries of business intent. Organizations that fail to recognize this shift operate with a blind spot that invites catastrophic systemic drift.

The End of the “Set It and Forget It” Infrastructure Era

The era of “set it and forget it” infrastructure was built on the assumption that a system, once configured, would remain in a known-good state until a human operator decided otherwise. This approach functioned well when applications were monolithic and updates occurred on a quarterly basis. In that environment, the data center was a fortress, and the configuration was the law. However, the rise of containerized microservices and ephemeral workloads changed the fundamental physics of the data center. Infrastructure is now a living organism that fluctuates in size, location, and composition based on the demands of the applications it hosts.

As AI-native architectures become the standard, the behavior of these systems is no longer predictable through a simple review of the source code. Instead, system behavior is shaped by shifting data patterns, user interactions, and environmental context. This transition means that a data center configured perfectly at noon could be fundamentally out of alignment by one o’clock due to the autonomous scaling of a machine learning model. The old model of periodic audits and static settings cannot keep pace with a system that evolves every second. To survive, organizations must move toward a model where the infrastructure is as dynamic as the software it supports.

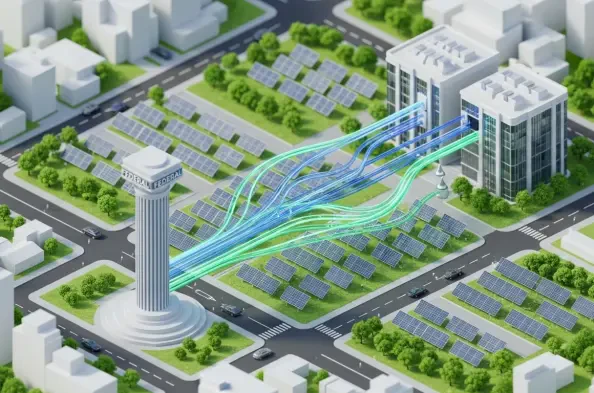

Furthermore, the “building” metaphor has given way to the concept of a “control system” because the primary challenge is no longer housing hardware but steering behavior toward intent. When thousands of autonomous agents are making micro-decisions about data routing, resource allocation, and security protocols, the data center must function as a regulatory layer. It is the invisible hand that keeps these agents from consuming excessive power, violating privacy constraints, or creating bottlenecks. This requires trading a mindset of periodic maintenance for one of constant, real-time regulation.

Why the Traditional Facility Model Is Obsolete

Historically, infrastructure was managed through fixed settings and the assumption that a system configured correctly on day one would remain compliant on day one hundred. This deterministic model relied on the belief that if you controlled the inputs and the environment, the outputs would remain stable. But modern environments span public clouds, private stacks, and edge deployments, a degree of fragmentation in which traditional perimeter-based security no longer applies. This distributed complexity creates “dark corners” where configuration drift can hide for months, slowly eroding the integrity of the entire stack.
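As a minimal sketch of surfacing drift continuously rather than at audit time, the Python below compares a declared (desired) configuration against the configuration actually observed at runtime. It assumes both are available as flat key-value maps; the setting names and values are hypothetical illustrations, not taken from any particular platform.

```python
from typing import Any, Dict, Tuple


def detect_drift(desired: Dict[str, Any], observed: Dict[str, Any]) -> Dict[str, Tuple[Any, Any]]:
    """Return {setting: (desired_value, observed_value)} for every setting that differs.

    A setting present on only one side is reported with None for the missing side.
    """
    drift: Dict[str, Tuple[Any, Any]] = {}
    for key in sorted(desired.keys() | observed.keys()):
        want, have = desired.get(key), observed.get(key)
        if want != have:
            drift[key] = (want, have)
    return drift


# Hypothetical "day one" intent versus what is actually running on "day one hundred".
desired = {"tls_min_version": "1.2", "public_ingress": False, "log_retention_days": 90}
observed = {"tls_min_version": "1.0", "public_ingress": False, "log_retention_days": 90, "debug_port": 9229}

print(detect_drift(desired, observed))
# {'debug_port': (None, 9229), 'tls_min_version': ('1.2', '1.0')}
```

Running a comparison like this on every reconciliation cycle, instead of once per audit, is what keeps drift from settling into those dark corners.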

The breakdown of static configuration is particularly evident in the way security is handled. In the old facility model, security was a fence around the data center. In 2026, the data center has no fence; its components are scattered across the globe. Traditional security audits, which occur at a single point in time, are insufficient because they fail to capture the transient risks that emerge during execution. When a workload moves from a secure on-premises server to a third-party edge node in response to a spike in traffic, the risk profile changes instantly. Static models simply cannot account for this level of fluidity.

Moreover, the shift from deterministic to stochastic systems marks the final nail in the coffin for the facility model. With AI-native architectures, the path a request takes through the system is no longer a straight line defined by a developer. It is a probabilistic outcome influenced by millions of variables. In such a world, the idea that a manager can “set” the behavior of a data center is an illusion. The infrastructure must instead be designed to handle uncertainty, moving away from rigid rules toward flexible guardrails that can interpret and enforce intent regardless of how the underlying components are arranged.

Decoding the Anatomy of a Modern Control System

To understand the modern data center, look to control theory, which has long governed complex engineered systems such as chemical plants and aerospace systems. In these fields, a control system runs a closed feedback loop: it continuously measures behavior, compares it against a desired setpoint, and intervenes during execution to correct deviations before they grow. Computing is finally adopting this rigor. The infrastructure is no longer just “where” the work happens; it is also the “how” and the “why.” Real-time steering of this kind lets organizations manage massive scale without a proportional increase in human oversight.
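The continuous monitor-and-intervene cycle described above can be illustrated with a small Python sketch that steers a replica count toward a target utilization. The proportional rule (scale by the ratio of observed to target utilization, ignoring errors inside a tolerance band) is modeled loosely on the one documented for the Kubernetes Horizontal Pod Autoscaler; the function names, limits, and toy load model are assumptions for illustration only.

```python
import math
from typing import List


def next_replica_count(current: int, observed_util: float, target_util: float,
                       tolerance: float = 0.1, min_replicas: int = 1, max_replicas: int = 50) -> int:
    """One pass of a closed loop: measure utilization, compare to the setpoint, correct."""
    if target_util <= 0:
        raise ValueError("target_util must be positive")
    ratio = observed_util / target_util      # >1 means overloaded, <1 means over-provisioned
    if abs(ratio - 1.0) <= tolerance:        # dead band: ignore small errors to avoid flapping
        return current
    proposed = math.ceil(current * ratio)    # proportional correction toward the setpoint
    return max(min_replicas, min(max_replicas, proposed))  # clamp to hard limits


def simulate(load: float, replicas: int, target_util: float, steps: int) -> List[int]:
    """Toy plant model (an assumption): a fixed load spread evenly across replicas."""
    history = [replicas]
    for _ in range(steps):
        observed_util = load / replicas                                      # measure
        replicas = next_replica_count(replicas, observed_util, target_util)  # act
        history.append(replicas)
    return history


print(simulate(load=3.0, replicas=4, target_util=0.5, steps=3))  # [4, 6, 6, 6]
```

The loop converges in one step here because the toy load model is perfectly linear; real systems add smoothing and cooldowns because the measured response lags the correction.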

The most significant threat in this new paradigm is the “silent failure.” Dashboards stay green and systems report that they are operational, yet actual outcomes diverge from the business’s ethical or operational intent. For example, an automated pricing system might stay within all of its technical parameters while inadvertently violating fair-pricing or consumer-protection rules. Traditional monitoring tools, which focus on uptime and latency, are blind to these subtle drifts in logic. A true control system, however, monitors the logic and outcomes of the decision-making process itself, flagging deviations before they become a public or financial crisis.
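To make the idea of a silent failure concrete, the Python sketch below contrasts a dashboard check that looks only at health and latency with an outcome check that looks at what the pricing system actually decided. The markup ceiling, field names, and latency threshold are hypothetical stand-ins for whatever rule a real business would encode.

```python
from dataclasses import dataclass
from typing import List

MAX_MARKUP = 1.5  # hypothetical business rule: never quote above 150% of the reference price


@dataclass
class PricingSnapshot:
    healthy: bool           # what an uptime probe reports
    p99_latency_ms: float   # what a latency dashboard reports
    reference_price: float  # hypothetical baseline, e.g. a trailing average
    quoted_price: float     # what the automated system actually charged


def dashboard_status(s: PricingSnapshot) -> str:
    """Traditional monitoring: only availability and latency are checked."""
    return "green" if s.healthy and s.p99_latency_ms < 250 else "red"


def intent_violations(s: PricingSnapshot) -> List[str]:
    """Outcome monitoring: check the decision itself against business intent."""
    violations = []
    ceiling = MAX_MARKUP * s.reference_price
    if s.quoted_price > ceiling:
        violations.append(
            f"quoted {s.quoted_price:.2f} exceeds ceiling {ceiling:.2f} "
            f"({MAX_MARKUP:.0%} of reference)"
        )
    return violations


snap = PricingSnapshot(healthy=True, p99_latency_ms=80.0, reference_price=10.0, quoted_price=18.0)
print(dashboard_status(snap))   # green
print(intent_violations(snap))  # ['quoted 18.00 exceeds ceiling 15.00 (150% of reference)']
```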

A critical architectural change in this evolution is the separation of the control plane from the data plane. Learning from the success of software-defined networking, modern data center architectures are decoupling the “muscles” of execution from the “nervous system” of authority. The data plane handles the heavy lifting—moving bits and running calculations—while the control plane acts as the brain, assessing every action against a library of policies and constraints. By moving the “authority” out of the application and into a centralized control system, organizations ensure that governance is consistent, no matter where the actual computing takes place.
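A minimal Python sketch of this separation follows. The data plane is the only component that executes actions, and it must ask the control plane first; the control plane holds the policies and never executes anything itself. The `Action` fields, the data-residency policy, and the region names are hypothetical examples, not a specific product's API.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Optional, Tuple


@dataclass(frozen=True)
class Action:
    workload: str
    kind: str    # hypothetical action types, e.g. "move" or "scale"
    region: str


# A policy returns None if the action is acceptable, otherwise a reason string.
Policy = Callable[[Action], Optional[str]]


class ControlPlane:
    """Holds authority: judges proposed actions but never executes them."""

    def __init__(self, policies: Dict[str, Policy]) -> None:
        self.policies = policies

    def authorize(self, action: Action) -> Tuple[bool, List[str]]:
        reasons = []
        for name, policy in self.policies.items():
            reason = policy(action)
            if reason is not None:
                reasons.append(f"{name}: {reason}")
        return (not reasons, reasons)


class DataPlane:
    """Does the heavy lifting, but only for actions the control plane approves."""

    def __init__(self, control: ControlPlane) -> None:
        self.control = control
        self.executed: List[Action] = []

    def execute(self, action: Action) -> bool:
        allowed, reasons = self.control.authorize(action)
        if not allowed:
            print(f"blocked {action.kind} of {action.workload}: {'; '.join(reasons)}")
            return False
        self.executed.append(action)  # stand-in for actually moving bits
        return True


EU_ONLY = {"billing"}  # hypothetical set of workloads bound to EU data residency


def data_residency(action: Action) -> Optional[str]:
    if action.workload in EU_ONLY and not action.region.startswith("eu-"):
        return f"{action.workload} must stay in EU regions"
    return None


plane = DataPlane(ControlPlane({"data_residency": data_residency}))
ok = plane.execute(Action("billing", "move", "us-east-1"))
# prints: blocked move of billing: data_residency: billing must stay in EU regions
print(ok)  # False
print(plane.execute(Action("billing", "move", "eu-west-1")))  # True
```

Because the policies live only in `ControlPlane`, adding or changing a rule never requires touching the code that executes workloads, which is the consistency benefit the paragraph describes.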

Expert Perspectives on Runtime Authority and Governance

Emerging risk management frameworks, particularly those influenced by the National Institute of Standards and Technology (NIST), are prioritizing ongoing lifecycle governance over one-time certifications. Many practitioners now argue that the traditional “approval” phase of a project has become the least important part of its lifecycle. What matters most is “runtime authority”: the ability of the system to verify, at the moment of execution, that an action is safe and compliant. This shift acknowledges that the context of a decision is often more important than the code that produced it, making real-time oversight a non-negotiable requirement.

There is also a growing mandate for explainability within these autonomous systems. Industry leaders emphasize that it is no longer enough for a system to report its results; it must be able to explain the specific logic behind every autonomous decision. When a data center control system intervenes to stop a process, it must provide a transparent audit trail showing which policy was triggered and why. This level of granularity is essential for building trust with regulators and stakeholders who are increasingly wary of “black box” algorithms making critical business choices. Without explainability, the speed of modern infrastructure becomes a liability rather than an asset.
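One way to meet this requirement is to have every intervention emit a structured record naming the policy that fired, the reason, and the inputs it saw. The Python sketch below assumes a hypothetical egress-limit policy and writes records as JSON lines; the field names are illustrative rather than drawn from any standard schema.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from typing import Any, Dict, List, Optional

EGRESS_LIMIT_GB = 50.0  # hypothetical policy threshold


@dataclass
class DecisionRecord:
    action: str
    outcome: str                 # "allowed" or "stopped"
    policy: Optional[str]        # which policy fired, if any
    reason: Optional[str]        # human-readable explanation of why
    inputs: Dict[str, Any]       # the facts the decision was based on
    timestamp: str = field(default_factory=lambda: datetime.now(timezone.utc).isoformat())


def check_export(job: str, exported_gb: float) -> DecisionRecord:
    inputs = {"exported_gb": exported_gb, "limit_gb": EGRESS_LIMIT_GB}
    if exported_gb > EGRESS_LIMIT_GB:
        return DecisionRecord(f"export:{job}", "stopped", "egress_limit",
                              f"{exported_gb} GB exceeds the {EGRESS_LIMIT_GB} GB limit", inputs)
    return DecisionRecord(f"export:{job}", "allowed", None, None, inputs)


audit_log: List[str] = []  # in practice this would be an append-only store
for job, gb in [("nightly-backup", 12.0), ("adhoc-dump", 72.5)]:
    audit_log.append(json.dumps(asdict(check_export(job, gb)), sort_keys=True))

print(audit_log[-1])  # the stopped export, with policy, reason, inputs, and timestamp
```

Recording allowed decisions as well as stopped ones gives auditors a baseline to compare interventions against.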

Cloud-native evolution has provided the blueprint for this transition. The success of global cloud providers was built not just on cheap hardware but on the ability to move policy enforcement into a shared, centralized control system. By abstracting the governance layer, these providers allowed developers to move fast without breaking the rules. Enterprise data centers are now adopting the same philosophy, creating a “policy as code” environment where the rules of the business are woven into the very fabric of the infrastructure. This allows the CIO to transition from being a gatekeeper who slows things down to a regulator who enables safe, high-speed innovation.

Strategies for Transitioning to a Control-System Architecture

Transitioning to a control-system architecture requires a fundamental shift in how risk is managed. Instead of risk assessments living in static design documents, they must become live system properties that trigger alerts or interventions during execution. This runtime risk management ensures that the system is always operating within its “safe zone.” When a workload begins to exhibit behavior that suggests a security breach or a policy violation, the control system can automatically throttle that workload or redirect it to a “sandbox” for further investigation, all without human intervention.
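The escalation logic described above can be sketched in Python as a scoring function that turns live signals into a risk score and maps that score to allow, throttle, or sandbox. The signal names, weights, and thresholds are hypothetical; the point is that the risk assessment is computed during execution rather than read from a design document.

```python
from enum import Enum
from typing import Dict


class Intervention(Enum):
    ALLOW = "allow"
    THROTTLE = "throttle"
    SANDBOX = "sandbox"


# Hypothetical signals and weights; a real system would calibrate these from its own data.
WEIGHTS: Dict[str, float] = {"failed_auth_rate": 0.5, "unexpected_egress": 0.3, "policy_denials": 0.2}


def risk_score(signals: Dict[str, float]) -> float:
    """Weighted sum of signals, each clamped to [0, 1]; missing signals count as 0."""
    return sum(w * min(max(signals.get(name, 0.0), 0.0), 1.0) for name, w in WEIGHTS.items())


def choose_intervention(signals: Dict[str, float],
                        throttle_at: float = 0.4, sandbox_at: float = 0.7) -> Intervention:
    """Map the live risk score to an action; the thresholds define the 'safe zone'."""
    score = risk_score(signals)
    if score >= sandbox_at:
        return Intervention.SANDBOX   # isolate the workload for investigation
    if score >= throttle_at:
        return Intervention.THROTTLE  # slow it down while evidence accumulates
    return Intervention.ALLOW


print(choose_intervention({}))                                                   # Intervention.ALLOW
print(choose_intervention({"failed_auth_rate": 1.0}))                            # Intervention.THROTTLE
print(choose_intervention({"failed_auth_rate": 1.0, "unexpected_egress": 1.0}))  # Intervention.SANDBOX
```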

Establishing dynamically enforced envelopes is another crucial strategy. These are essentially digital “guardrails” that allow AI and autonomous systems to operate freely until they hit predefined boundaries. These boundaries might be based on cost, data privacy, or ethical constraints. For instance, an AI agent might have the authority to purchase cloud capacity as needed, but only up to a specific budget threshold and only if the capacity is sourced from a green energy provider. By defining these envelopes, the organization gives the system the autonomy it needs to be efficient while maintaining the control necessary for safety.
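A dynamically enforced envelope can be sketched in Python as an object that sits between the agent and its purchasing action. This sketch assumes the two constraints from the example, a budget ceiling and a green-energy requirement; the provider names, prices, and class names are hypothetical.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass
class CapacityOffer:
    provider: str
    hourly_cost: float
    hours: int
    green_energy: bool


@dataclass
class SpendingEnvelope:
    budget: float                # hard ceiling on the agent's total spend
    spent: float = 0.0
    require_green: bool = True   # only buy from green energy providers

    def try_purchase(self, offer: CapacityOffer) -> Tuple[bool, str]:
        """The agent may buy freely, but every purchase must fit inside the envelope."""
        cost = offer.hourly_cost * offer.hours
        if self.require_green and not offer.green_energy:
            return False, f"{offer.provider} is not a green energy provider"
        if self.spent + cost > self.budget:
            remaining = self.budget - self.spent
            return False, f"cost {cost:.2f} exceeds remaining budget {remaining:.2f}"
        self.spent += cost
        return True, "ok"


envelope = SpendingEnvelope(budget=1000.0)
print(envelope.try_purchase(CapacityOffer("provider-a", 2.5, 200, True)))   # (True, 'ok')
print(envelope.try_purchase(CapacityOffer("provider-b", 1.0, 100, False)))  # (False, 'provider-b is not a green energy provider')
print(envelope.try_purchase(CapacityOffer("provider-c", 3.0, 200, True)))   # (False, 'cost 600.00 exceeds remaining budget 500.00')
```

Checking the envelope on every purchase, rather than reviewing spend after the fact, is what lets the agent act without approval while never crossing the boundary.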

The ultimate goal of this architectural shift is to redefine the role of the CIO. In the past, the CIO was responsible for providing capacity—ensuring there were enough servers and storage to meet demand. In the era of the dynamic control system, the CIO becomes a digital regulator. The focus shifts from providing the “how” of compute to governing the “what” of behavioral outcomes. This involves setting the high-level logic that the control system will enforce, ensuring that the entire distributed infrastructure acts as a single, coherent entity that reflects the values and objectives of the organization.

The evolution from a static facility to a dynamic control system is a necessary response to the overwhelming complexity of modern digital life. Human-speed governance is incompatible with machine-speed execution, so infrastructure can no longer be a passive observer; it must be an active participant in governing the applications it hosts. Forward-thinking leaders are prioritizing the separation of authority from execution, ensuring that every autonomous decision remains tethered to human intent. As this transition matures, the data center moves from being a cost center to a vital regulatory organ of the digital enterprise. The focus is no longer on keeping the servers running but on keeping systems bounded, explainable, and correctable in the face of constant change. This architectural foundation allows businesses to embrace the full potential of AI agency without sacrificing safety or accountability, and leaders who master it position their organizations to thrive in an environment where the only constant is the need for real-time, intelligent control.