The rapid evolution of high-density AI environments has transformed the data center from a simple collection of server racks into a highly coupled, complex system where power, cooling, and workload placement are inextricably linked. As energy demands are projected to surge and physical constraints tighten, traditional siloed management is becoming obsolete. Our guest today is a leading authority on data center infrastructure who advocates for the implementation of semantic digital twins to move beyond “tribal knowledge” and toward computable, verifiable governance.

Throughout our discussion, we explore how the integration of machine learning and semantic modeling allows organizations to treat the data center as a coherent dependency graph. We delve into the shifting definitions of capacity, the necessity of layered safety protocols in autonomous cooling, and the practical steps for integrating facilities and IT teams under a single source of truth.

Data center management is shifting from independent tuning to a coupled system where power, cooling, and workload placement are inextricably linked. How do you identify when local optimizations are causing global failures, and what specific steps can teams take to move beyond tribal knowledge during technical planning?

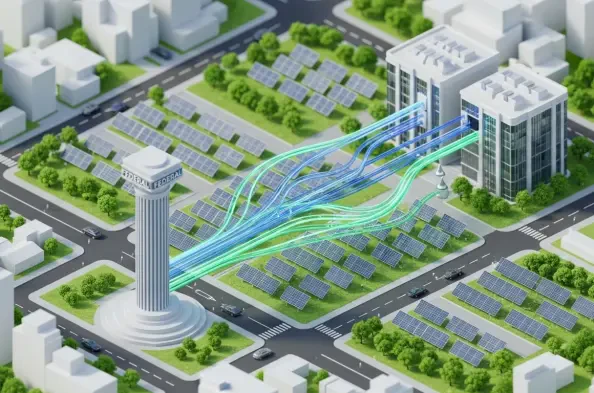

In a coupled system, you realize local optimizations are failing when you have plenty of free rack units but absolutely nowhere safe to place a new workload. This happens because those available racks might be behind the wrong power path or sit inside a cooling envelope that is already at its physical limit. To move beyond tribal knowledge, teams must implement a semantic digital twin that treats the data center as a dependency system where physical and logical “things”—from chillers to GPU clusters—are mapped by their relationships. We move from “negotiated guesswork” to technical planning by codifying rules, such as ensuring a workload is only placed where power, cooling, and redundancy constraints are simultaneously satisfied. By forcing these dependencies into an explicit, computable model, we stop relying on the memory of senior technicians and start relying on verifiable data.
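A placement rule of this kind can be sketched in a few lines of Python. This is a minimal illustration, not the guest's implementation: the `Rack` and `Workload` fields, the rack names, and the numbers are all hypothetical, and the cooling check assumes that nearly all electrical power drawn becomes heat the zone must remove.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rack:
    name: str
    free_units: int             # empty rack units
    power_headroom_kw: float    # spare capacity on the upstream power path
    cooling_headroom_kw: float  # spare capacity in the rack's cooling zone
    power_feeds: int            # independent feeds currently healthy


@dataclass(frozen=True)
class Workload:
    name: str
    units: int
    power_kw: float
    min_power_feeds: int


def placement_violations(rack: Rack, load: Workload) -> list[str]:
    """Return every constraint the placement would breach (empty list = safe)."""
    violations = []
    if rack.free_units < load.units:
        violations.append("space")
    if rack.power_headroom_kw < load.power_kw:
        violations.append("power")
    # Assumption: electrical load is treated as the heat load to be removed.
    if rack.cooling_headroom_kw < load.power_kw:
        violations.append("cooling")
    if rack.power_feeds < load.min_power_feeds:
        violations.append("redundancy")
    return violations


# Hypothetical inventory: every rack has enough free units for the job.
racks = [
    Rack("A01", free_units=20, power_headroom_kw=2.0, cooling_headroom_kw=9.0, power_feeds=2),
    Rack("B07", free_units=12, power_headroom_kw=10.0, cooling_headroom_kw=3.0, power_feeds=2),
    Rack("C03", free_units=8, power_headroom_kw=9.0, cooling_headroom_kw=9.0, power_feeds=2),
]
job = Workload("gpu-train", units=8, power_kw=8.0, min_power_feeds=2)

report = {r.name: placement_violations(r, job) for r in racks}
safe = [name for name, v in report.items() if not v]
print(report)
print(safe)
```

All three racks have free space, yet only one passes every check, which is the "plenty of free rack units but nowhere safe" situation in miniature.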

With data center energy consumption potentially doubling by 2030 and rack densities rapidly increasing, how should organizations redefine the term “capacity”? What specific metrics beyond simple rack units are now essential for maintaining operational resilience as these physical envelopes tighten?

The old metric of counting empty floor tiles or rack units is becoming irrelevant when the International Energy Agency projects that global data center electricity consumption could reach roughly 945 terawatt-hours by 2030. Organizations must redefine “capacity” as a multi-constraint resource that accounts for power headroom on a specific circuit, cooling headroom in a particular zone, and the remaining UPS margin under a redundancy policy. We are seeing seven-to-nine kilowatt racks become more common, replacing the traditional four-to-six kilowatt standard, which means the “physical envelope,” not floor space, is now the primary constraint. Operational resilience now requires us to look at “placeable capacity,” which is a real-time calculation of whether a specific location can support a high-density load without breaching the safety envelope of the surrounding infrastructure.
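One way to read "placeable capacity" is as the tightest of the three headrooms named above. The sketch below assumes that reading; the function names, the modular-UPS model (hold back a number of spare modules, for example one for N+1), and every figure are illustrative assumptions rather than anything specified in the interview.

```python
def ups_margin_kw(module_kw: float, modules: int, spare_modules: int, load_kw: float) -> float:
    """Margin left once `spare_modules` are held in reserve (e.g. 1 for N+1)."""
    usable_kw = module_kw * (modules - spare_modules)
    return usable_kw - load_kw


def placeable_kw(circuit_headroom_kw: float, zone_cooling_headroom_kw: float, ups_margin: float) -> float:
    """The tightest constraint sets the placeable capacity; never negative."""
    return max(0.0, min(circuit_headroom_kw, zone_cooling_headroom_kw, ups_margin))


# Hypothetical location: four 250 kW UPS modules under N+1, carrying 700 kW.
margin = ups_margin_kw(module_kw=250.0, modules=4, spare_modules=1, load_kw=700.0)
capacity = placeable_kw(circuit_headroom_kw=12.0, zone_cooling_headroom_kw=7.5, ups_margin=margin)

fits_7kw_rack = 7.0 <= capacity
fits_9kw_rack = 9.0 <= capacity
print(margin, capacity, fits_7kw_rack, fits_9kw_rack)
```

In this example the circuit and the UPS both have room for a nine-kilowatt rack, but the cooling zone does not, so the location is only placeable for the lighter load.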

Machine learning can significantly reduce cooling energy, yet autonomous control requires layered safeguards like uncertainty estimation. How do you implement a two-layer verification system between cloud-side AI and on-site controls, and what protocols ensure an operator can safely exit back to conventional automation?

Implementing this requires a clear architectural split where the cloud-based AI acts as the “intelligence” and the on-site system acts as the “guardian.” Every five minutes, the AI pulls a snapshot from thousands of sensors to predict the best energy-saving actions, but these are merely proposals until they pass through a local verification layer. We use uncertainty estimation to automatically discard any AI recommendation that has low confidence, ensuring we never gamble with the physical plant. Crucially, we maintain a protocol for an operator-controlled exit, which allows a human to instantly flip a switch and return the facility to conventional, rule-based automation if the AI’s behavior deviates from expected parameters. This layered approach is what allowed sites to see up to a 40% reduction in cooling energy without breaching the plant’s safety constraints.
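The split between proposal and verification might look roughly like the following. This is a sketch under stated assumptions: the `Proposal` and `LocalGuardian` names, the single chilled-water setpoint, the 0.9 confidence threshold, and the safe range are all hypothetical, and the range check is one plausible example of what a local verification layer could enforce.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Proposal:
    setpoint_c: float   # proposed chilled-water supply temperature
    confidence: float   # model's confidence estimate in [0, 1]


class LocalGuardian:
    """On-site layer: re-checks every cloud proposal before anything is applied."""

    def __init__(self, min_c, max_c, min_confidence, fallback_setpoint_c):
        self.min_c = min_c
        self.max_c = max_c
        self.min_confidence = min_confidence
        self.fallback_setpoint_c = fallback_setpoint_c
        self.ai_enabled = True  # operator-controlled switch

    def operator_exit(self):
        """Hand control back to conventional rule-based automation."""
        self.ai_enabled = False

    def decide(self, proposal):
        """Return (setpoint, reason) for the next control interval."""
        if not self.ai_enabled:
            return self.fallback_setpoint_c, "operator exit: rule-based control"
        if proposal.confidence < self.min_confidence:
            return self.fallback_setpoint_c, "rejected: low confidence"
        if not (self.min_c <= proposal.setpoint_c <= self.max_c):
            return self.fallback_setpoint_c, "rejected: outside safe range"
        return proposal.setpoint_c, "accepted"


guardian = LocalGuardian(min_c=6.0, max_c=12.0, min_confidence=0.9, fallback_setpoint_c=7.0)
accepted = guardian.decide(Proposal(setpoint_c=10.0, confidence=0.95))
low_conf = guardian.decide(Proposal(setpoint_c=10.0, confidence=0.50))
out_of_range = guardian.decide(Proposal(setpoint_c=15.0, confidence=0.99))

guardian.operator_exit()
after_exit = guardian.decide(Proposal(setpoint_c=10.0, confidence=0.95))
print(accepted, low_conf, out_of_range, after_exit)
```

The design point is that the fallback path never depends on the cloud side: once the operator exits, even a high-confidence, in-range proposal is ignored.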

Facilities and IT teams often use the same terms, like “redundancy” or “maintenance,” to mean very different things. How does a semantic digital twin formalize these definitions into a computable policy, and what are the practical challenges of integrating unstructured artifacts like runbooks into a knowledge graph?

The semantic twin acts as a translator that forces implicit disagreements into explicit, machine-readable definitions. For instance, while a facilities team sees “maintenance” as a ticket in a work order system, the twin links that ticket to the specific cooling loop it affects, identifying exactly which GPU nodes are at risk. The primary challenge is anchoring unstructured data—like PDF runbooks, wiring diagrams, and logs—to the digital entities they describe within a knowledge graph. This requires building an ontology that formalizes how things relate, so that when a “redundancy” policy is queried, the system knows if it means “two power feeds” or “application-level survival.” Once these artifacts are connected to the graph, we eliminate “confident nonsense” and ensure that every team is looking at the same operational truth.
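The ticket-to-cooling-loop-to-GPU-node link can be illustrated with a toy knowledge graph stored as subject, relation, object triples. Everything here is hypothetical: the identifiers, the relation names (`affects`, `cools`, `hosts`, `describes`), and the choice of a plain Python list instead of a real graph store.

```python
from collections import deque

# (subject, relation, object) triples; all identifiers are hypothetical.
TRIPLES = [
    ("ticket:WO-1", "affects", "loop:CHW-2"),
    ("loop:CHW-2", "cools", "rack:R12"),
    ("loop:CHW-2", "cools", "rack:R13"),
    ("rack:R12", "hosts", "node:gpu-a"),
    ("rack:R13", "hosts", "node:gpu-b"),
    ("loop:CHW-1", "cools", "rack:R01"),
    ("rack:R01", "hosts", "node:gpu-c"),
    ("runbook:chw-isolation.pdf", "describes", "loop:CHW-2"),
]


def downstream(start, relations=("affects", "cools", "hosts")):
    """Breadth-first walk along impact relations, returning reachable entities."""
    seen, queue = set(), deque([start])
    while queue:
        current = queue.popleft()
        for subj, rel, obj in TRIPLES:
            if subj == current and rel in relations and obj not in seen:
                seen.add(obj)
                queue.append(obj)
    return seen


def documents_for(entity):
    """Unstructured artifacts anchored to an entity via a 'describes' edge."""
    return sorted(s for s, rel, o in TRIPLES if rel == "describes" and o == entity)


at_risk = sorted(e for e in downstream("ticket:WO-1") if e.startswith("node:"))
runbooks = documents_for("loop:CHW-2")
print(at_risk)
print(runbooks)
```

The maintenance ticket reaches only the nodes cooled by the affected loop, and the runbook is found through its anchor to that loop rather than through a keyword search.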

Disaster recovery plans often focus on application replication while overlooking physical limits like power headroom or cooling zones at the secondary site. How can a dependency graph transform these exercises into real-time checks, and what step-by-step process ensures a failover site can actually handle redirected loads?

A dependency graph turns a disaster recovery exercise from a static spreadsheet into a live query of the physical substrate. To ensure a failover site is actually ready, the process must begin by traversing the graph to check if the secondary site’s power headroom can handle the sudden megawatt surge of redirected AI workloads. Next, the system must verify that the specific cooling zones at the secondary site aren’t currently compromised by a maintenance window or a localized hardware failure. Finally, we validate the redundancy state of the target racks to ensure we aren’t moving a critical workload into a “single-string” power environment. This transformation ensures that “can we shift this?” is answered by physics and current operational state rather than by an outdated document.
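The three checks described above (site power headroom, cooling zone state, rack redundancy) could be expressed as a single readiness function. This is a simplified sketch: the `TargetRack` type, the zone labels, and the two-feed redundancy default are assumptions, and a real twin would obtain these values by querying the graph rather than taking them as arguments.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TargetRack:
    name: str
    zone: str
    healthy_feeds: int


def failover_check(load_mw, site_power_headroom_mw, zones_in_maintenance, racks, min_feeds=2):
    """Run the three checks in order; return (ok, list of blocking reasons)."""
    reasons = []
    # Step 1: can the secondary site absorb the redirected load at all?
    if load_mw > site_power_headroom_mw:
        reasons.append(f"power: need {load_mw} MW, headroom {site_power_headroom_mw} MW")
    # Step 2: is any target rack in a cooling zone that is currently compromised?
    for rack in racks:
        if rack.zone in zones_in_maintenance:
            reasons.append(f"cooling: {rack.name} sits in zone {rack.zone} under maintenance")
    # Step 3: would the workload land on a single-string power path?
    for rack in racks:
        if rack.healthy_feeds < min_feeds:
            reasons.append(f"redundancy: {rack.name} has {rack.healthy_feeds} healthy feed(s)")
    return (not reasons, reasons)


targets = [TargetRack("D01", "Z1", healthy_feeds=2), TargetRack("D02", "Z2", healthy_feeds=1)]

ok, reasons = failover_check(1.5, 2.0, zones_in_maintenance={"Z2"}, racks=targets)
ok_clean, reasons_clean = failover_check(1.5, 2.0, zones_in_maintenance=set(), racks=targets[:1])
print(ok, reasons)
print(ok_clean, reasons_clean)
```

Returning the full list of reasons, rather than stopping at the first failure, lets an exercise report everything that would have to change before the shift is safe.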

Organizations often struggle with where to begin when building a semantic core for their infrastructure. Which specific domain slice—power, cooling, or placement—offers the most immediate return on investment, and how do you ensure that an AI operations copilot stays grounded in verifiable operating facts?

The most immediate return on investment comes from focusing on the intersection where power, cooling, and workload placement meet, as this is where the most expensive “stranded capacity” usually hides. By modeling this specific slice first, organizations can immediately optimize how they densify their existing racks without risking a thermal event. To keep an AI copilot grounded, we use the semantic twin as a verifier rather than an autopilot; any recommendation the AI makes must be gated by the twin’s provenance and change control rules. This means the AI can suggest a move, but the semantic layer checks it against the actual meter readings and maintenance logs to ensure the action is defensible. This setup provides hybrid intelligence: the machine learning handles the forecasting, while the semantic core ensures the decisions are safe and explainable.
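As a rough illustration of the twin acting as a verifier, the sketch below gates a copilot's suggested move on provenance, freshness, agreement with the meter, and change control. The `Reading` type, the five-minute freshness window, the 0.5 kW tolerance, and the meter name are hypothetical choices made for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Reading:
    value_kw: float
    source: str        # provenance: which meter produced the value
    age_seconds: int   # time since the value was measured


def verify_move(claimed_headroom_kw, reading, rack_has_open_change,
                max_age_seconds=300, tolerance_kw=0.5):
    """Gate a copilot suggestion; return (approved, reason)."""
    if not reading.source:
        return False, "no provenance for headroom figure"
    if reading.age_seconds > max_age_seconds:
        return False, "meter reading is stale"
    if abs(reading.value_kw - claimed_headroom_kw) > tolerance_kw:
        return False, "claim disagrees with meter"
    if rack_has_open_change:
        return False, "blocked by open change record"
    return True, "approved"


fresh = Reading(value_kw=8.2, source="pdu-17/branch-3", age_seconds=60)
stale = Reading(value_kw=8.2, source="pdu-17/branch-3", age_seconds=900)

approved = verify_move(8.0, fresh, rack_has_open_change=False)
overclaimed = verify_move(12.0, fresh, rack_has_open_change=False)
too_old = verify_move(8.0, stale, rack_has_open_change=False)
frozen = verify_move(8.0, fresh, rack_has_open_change=True)
print(approved, overclaimed, too_old, frozen)
```

Each rejection carries a reason, which is what makes the eventual decision explainable to both facilities and IT.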

What is your forecast for AI data center optimization?

My forecast is that the competitive advantage in the AI era will shift away from who can buy the most GPUs and toward who can most effectively model their data center as a computable dependency graph. As energy becomes the absolute growth limiter for the enterprise, we will see a move toward “semantics as infrastructure,” where the ability to define the data center in a machine-readable way becomes a prerequisite for operational survival. Organizations that continue to manage their facilities through gut feel and disconnected dashboards will face compounding costs and surprise failures, while those with a semantic core will be able to run their hardware at much higher utilization rates. Ultimately, the data center will no longer be seen as a “building” but as the enterprise’s most critical and expensive software-defined system.