The rapid integration of generative artificial intelligence into the software development lifecycle has created an environment where code is authored at a speed that vastly outpaces the human capacity to validate it. While engineering departments in 2026 report record-breaking numbers of pull requests and automated commits, the actual delivery of functional value to end users has often plateaued or even regressed. This phenomenon is known as the AI Productivity Paradox, a state where the removal of the initial coding bottleneck has simply pushed the pressure further down the pipeline into testing, integration, and security auditing. Organizations that once struggled to find enough developers to write logic now find themselves buried under a mountain of syntactically correct but contextually unaware code that requires exhaustive human intervention. This shift has turned the development process into a high-flux environment that traditional management frameworks were never designed to handle, resulting in teams that are perpetually exhausted despite having the most powerful tools in history at their disposal.

The fundamental issue is that software delivery is a cohesive system rather than a series of isolated tasks, and optimizing a single stage, writing code, does not inherently improve the throughput of the entire machine. For decades, the industry operated under the assumption that the “keyboard time” required to draft functions and interface with APIs was the primary constraint on innovation. However, with large language models now capable of generating complex boilerplate and logic structures in seconds, the constraint has migrated to the verification phase. Engineering leaders are observing a surge in activity metrics, yet these “vanity metrics” often mask a lack of progress in actual product stability. The real work has shifted from the act of creation to the act of forensic analysis, where senior developers must meticulously deconstruct AI-generated contributions to identify subtle logic flaws that could lead to catastrophic production failures if left unchecked.
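The systems argument can be made concrete with a toy model. The sketch below assumes a strictly sequential pipeline whose sustained throughput equals the capacity of its slowest stage; the stage names and weekly capacities are hypothetical figures chosen only for illustration.

```python
def pipeline_throughput(stage_capacity: dict[str, float]) -> tuple[str, float]:
    """Return (bottleneck stage, sustained throughput) for a sequential pipeline.

    Assumes every change passes through every stage, so the slowest
    stage caps how many changes per week can actually ship.
    """
    bottleneck = min(stage_capacity, key=stage_capacity.get)
    return bottleneck, stage_capacity[bottleneck]


# Hypothetical capacities, in changes per week.
before = {"authoring": 20, "review": 30, "testing": 40, "deploy": 50}

# Generative tooling makes authoring ten times faster; nothing else changes.
after = {**before, "authoring": 200}

print(pipeline_throughput(before))  # ('authoring', 20)
print(pipeline_throughput(after))   # ('review', 30)
```

In this toy model a tenfold gain in authoring capacity raises delivered throughput from 20 to only 30 changes per week, and the constraint moves to review.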

The Migration of Development Bottlenecks

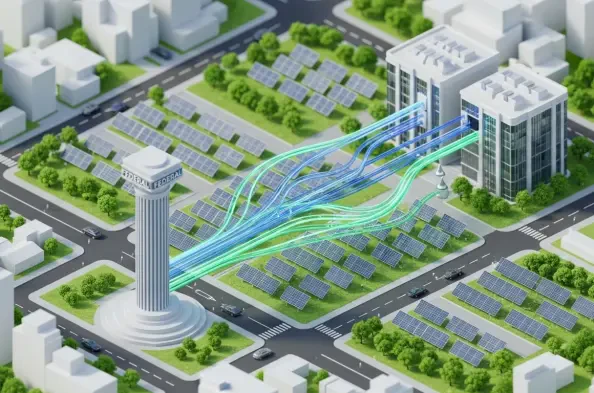

The transition from manual coding to AI-augmented production has fundamentally altered the geography of the software delivery pipeline, turning once-minor steps into major operational chokepoints. When a developer can prompt a model to generate an entire microservice in a matter of minutes, the volume of code entering the repository can increase dramatically, yet the infrastructure for continuous integration and deployment remains tethered to physical and cognitive limits. This influx creates a signal-to-noise problem in which the sheer quantity of new code obscures the meaningful updates, making it difficult for teams to distinguish between transformative features and low-value iterations. Consequently, integration and automated testing have become the new primary constraints, as build servers and test suites struggle to keep pace with the relentless velocity of automated code generation.

Furthermore, the cost of downstream failure has escalated because AI-generated code is frequently produced in a vacuum, lacking the holistic awareness of how disparate components interact under load. Errors that used to be caught during the slow, deliberate process of manual drafting are now manifesting much later in the cycle, often during complex integration tests or, worse, post-deployment. This shift makes mistakes significantly more expensive to remediate, as a flaw discovered in a staging environment requires tracing back through thousands of lines of machine-generated logic. The result is a cycle of “rework” that negates the initial time savings gained during the generation phase, leaving developers in a state of perpetual motion that yields very little finished product. This creates a deceptive sense of productivity where the team feels they are moving faster because they are constantly busy, while the actual shipping dates continue to slip further into the distance.

Navigating the Hidden Costs of the Integration Tax

A significant driver of the current slowdown is the “Integration Tax,” which represents the immense effort required to align AI-generated logic with the idiosyncratic realities of established enterprise architectures. Modern software systems are rarely clean slates; they are intricate webs of legacy code, specialized internal tools, and third-party dependencies that possess decades of “hard-won knowledge” and obscure edge cases. While a generative model can produce a functional payment processing script based on public documentation, it cannot inherently know that a specific internal database requires a unique header or that a legacy API fails if a certain undocumented latency threshold is exceeded. This lack of deep institutional context forces senior engineers to spend hours or even days “wrestling” with AI output to make it compatible with the existing environment, effectively turning a “ten-second” coding task into a multi-day integration project.

Beyond the immediate technical friction, the perceived “cheapness” of generating code often obscures the long-term maintenance debt that such volume incurs. Every new line of code added to a system expands its total surface area, creating more potential points of failure and increasing the complexity of future updates. When AI makes it effortless to add features or abstractions, teams are tempted to over-engineer solutions, leading to “complexity inflation” where the system becomes too large for any single human to fully comprehend. This ballooning architecture requires more monitoring, more frequent security patches, and more extensive documentation, all of which demand human time that is already in short supply. The result is a workforce that is increasingly occupied with managing the overhead of their own creations rather than building new capabilities that drive business value.

Complexity Inflation and Operational Cognitive Load

The operational burden of managing a high-volume, AI-augmented codebase extends far beyond the development environment and into the long-term health of the production infrastructure. As teams use generative tools to rapidly deploy new microservices and configurations, the cognitive load required to maintain these systems grows faster than the team can absorb it. While an AI can suggest a sophisticated architectural pattern that appears efficient on paper, it often introduces subtle dependencies that become liabilities during a high-pressure production incident. When a system failure occurs at an inconvenient hour, troubleshooting becomes harder if the on-call engineer is forced to navigate “clever” or highly abstracted logic that was authored not by a human colleague but by an algorithm. This disconnect between the author and the maintainer creates a dangerous knowledge gap that can extend recovery times and increase the risk of secondary outages.

Moreover, the ease of code generation has led to a proliferation of “innocent complexity,” where small, unnecessary additions to the codebase accumulate into a massive technical debt. In an era where writing code is practically free, there is less incentive to pursue minimalist or highly optimized solutions, leading to a “bloat” that impacts system performance and developer experience. Each additional dependency or configuration layer introduced by an AI requires ongoing attention, ensuring that it remains compatible with evolving security standards and platform updates. This constant need for maintenance means that a significant portion of a team’s capacity is redirected away from innovation and toward the preservation of an increasingly fragile ecosystem. The paradox is that the more code a team produces, the more they are anchored by the weight of their previous output, leading to a plateau in actual delivery speed.

The Human Crisis in Code Review and Oversight

The unprecedented surge in code volume has transformed the code review process into the most significant operational hurdle within the modern development department. Human capacity for deep logical auditing remains a finite resource, yet senior engineers are now being inundated with pull requests that are both larger and more frequent than those seen in the pre-AI era. This creates a state of chronic “reviewer fatigue,” where the individuals responsible for maintaining system integrity are forced to choose between thoroughness and speed. When engineers are overwhelmed, the quality of oversight inevitably declines, increasing the likelihood that subtle security vulnerabilities or performance regressions will slip into the master branch. In high-stakes sectors like financial services or healthcare, the potential consequences of these missed defects can be devastating, far outweighing any perceived gains in initial development velocity.

This crisis of oversight is exacerbated by the fact that traditional productivity metrics often reward the very behavior that causes the bottleneck. Metrics such as “story points completed” or “lines of code committed” provide a distorted view of success in an AI-driven landscape because they prioritize quantity over the actual delivery of reliable value. When management teams celebrate a high volume of commits without considering the “change failure rate,” they inadvertently encourage developers to flood the pipeline with unverified AI output. This misalignment of incentives creates a culture of “busywork” where the appearance of activity is valued more than the stability of the final product. To restore efficiency, organizations must pivot toward metrics that measure the flow of work through the entire system, ensuring that the human element of the review process is treated as a vital safeguard rather than an obstacle to be bypassed.

Shifting Metrics Toward Reliable Value Delivery

To resolve the paradox of being busy but not fast, engineering leadership must fundamentally redefine what it means to be productive in a world where code generation is automated. Traditional benchmarks that once served as reliable proxies for progress have become obsolete; for instance, a team that triples its code output but sees no increase in production deployments is not more productive, but merely more wasteful. Leaders must instead prioritize “Deployment Frequency” and “Lead Time for Changes” as the ultimate measures of success, focusing on how quickly a concept moves from an idea to a live feature in the hands of a user. By emphasizing the speed of the entire pipeline rather than the speed of individual contributors, organizations can identify where work is getting stuck and redirect resources to clear those specific bottlenecks.
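As a rough illustration of how these two flow metrics could be computed, the sketch below assumes each deployment is recorded with the timestamp of its first commit and the timestamp at which it went live. The `Deployment` record, its field names, and the sample dates are hypothetical, not taken from any particular tool.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median


@dataclass(frozen=True)
class Deployment:
    committed_at: datetime  # first commit of the change (assumed field)
    deployed_at: datetime   # moment the change went live (assumed field)


def deployment_frequency(deployments: list[Deployment], window_days: int) -> float:
    """Average number of production deployments per day in the window."""
    if window_days <= 0:
        raise ValueError("window_days must be positive")
    return len(deployments) / window_days


def median_lead_time(deployments: list[Deployment]) -> timedelta:
    """Median time from first commit to production.

    Raises statistics.StatisticsError if no deployments are given.
    """
    return median(d.deployed_at - d.committed_at for d in deployments)


# Hypothetical week of deployments with lead times of 1, 2, and 5 days.
sample = [
    Deployment(datetime(2026, 3, 2, 9), datetime(2026, 3, 3, 9)),
    Deployment(datetime(2026, 3, 3, 9), datetime(2026, 3, 5, 9)),
    Deployment(datetime(2026, 3, 1, 9), datetime(2026, 3, 6, 9)),
]

print(deployment_frequency(sample, window_days=7))
print(median_lead_time(sample))  # 2 days, 0:00:00
```

The median is used here rather than the mean so that a single long-stalled change does not dominate the figure; that is a design choice for this sketch, not a requirement of the metric.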

Another critical “honesty metric” is the “Change Failure Rate,” which tracks the percentage of deployments that result in a service impairment or require a rollback. If an increase in AI-assisted velocity is accompanied by a spike in production incidents, the organization has not improved its efficiency; it has simply accelerated its ability to create defects. High-performing teams in 2026 are those that balance their use of generative tools with rigorous automated testing and human-led architectural reviews. Leaders can go a step further with “Feature Impact Density,” which normalizes business impact, such as customer conversion rates or system uptime, against the volume of code produced. This approach encourages developers to ask whether a new piece of logic is truly necessary or merely adds to the maintenance burden, fostering a culture of disciplined innovation rather than reckless expansion.
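Both measures reduce to simple ratios. The sketch below is one possible reading, not an established standard: the article does not give a formula for Feature Impact Density, so the "impact per 1,000 lines changed" definition, the function names, and the sample figures are all assumptions.

```python
def change_failure_rate(total_deployments: int, failed_deployments: int) -> float:
    """Fraction of deployments that caused an impairment or required a rollback."""
    if total_deployments == 0:
        return 0.0  # no deployments, so nothing has failed
    return failed_deployments / total_deployments


def feature_impact_density(impact: float, lines_changed: int) -> float:
    """Business impact per 1,000 lines changed (hypothetical definition).

    `impact` is whatever unit the team agrees on, for example
    percentage points of conversion lift.
    """
    if lines_changed <= 0:
        raise ValueError("lines_changed must be positive")
    return impact / (lines_changed / 1000)


# Hypothetical quarter: 40 deployments, 6 of which were rolled back.
cfr = change_failure_rate(total_deployments=40, failed_deployments=6)

# Hypothetical feature: 1.2 points of conversion lift from 4,000 changed lines.
density = feature_impact_density(impact=1.2, lines_changed=4000)

print(f"change failure rate: {cfr:.0%}")
print(f"impact per 1,000 lines: {density:.2f}")
```

Tracked over time, a falling density alongside rising code volume would suggest that additional output is adding maintenance burden faster than it adds value.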

Strategic Governance and the Future of Engineering Leadership

Overcoming the AI Productivity Paradox requires a fundamental shift in how engineering leaders manage their most valuable and scarce resource: human judgment. Successful organizations in 2026 are moving away from the “more is better” philosophy and implementing strategic governance models that prioritize quality and system flow. This transition involves deploying “AI to police AI,” using specialized automated tools to perform initial security audits, summarize massive pull requests, and flag deviations from established architectural standards. These tools act as a first line of defense, filtering out low-quality output and allowing senior engineers to reserve their limited cognitive energy for high-risk decisions and complex domain-specific logic. This shift is essential for maintaining a sustainable pace of development without sacrificing the safety of the production environment.

The path forward for software teams involves curating “domain context” as a primary internal product, ensuring that generative tools are fed the specific standards, naming conventions, and legacy constraints of the organization. This reduces the “Integration Tax” by helping AI models produce code that is more likely to be compatible with existing systems on the first attempt. Teams should also enforce strict guardrails on work in progress, such as limiting the size of pull requests and the number of open tasks, to prevent the pipeline from becoming overwhelmed. By treating software delivery as a holistic system and focusing on the reliability of the flow rather than the sheer volume of output, engineering leaders can turn the potential of artificial intelligence into actual, measurable progress. The ultimate lesson is that in an era of automated code, the ability to ship less, but ship it more reliably, is the true hallmark of a high-speed organization.
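The work-in-progress guardrails described above could be enforced with a small pre-merge check. The sketch below is illustrative only: the `PullRequest` record, the 400-line limit, and the cap of three open pull requests per author are hypothetical values, not recommendations from any specific platform.

```python
from dataclasses import dataclass

# Hypothetical limits; each team would tune these to its own review capacity.
MAX_CHANGED_LINES = 400
MAX_OPEN_PRS_PER_AUTHOR = 3


@dataclass(frozen=True)
class PullRequest:
    author: str
    changed_lines: int


def guardrail_violations(candidate: PullRequest, open_prs: list[PullRequest]) -> list[str]:
    """Return the guardrails a new pull request would break (empty if none)."""
    violations = []
    if candidate.changed_lines > MAX_CHANGED_LINES:
        violations.append(
            f"{candidate.changed_lines} changed lines exceeds limit of {MAX_CHANGED_LINES}"
        )
    open_by_author = sum(1 for pr in open_prs if pr.author == candidate.author)
    if open_by_author >= MAX_OPEN_PRS_PER_AUTHOR:
        violations.append(
            f"{candidate.author} already has {open_by_author} open pull requests"
        )
    return violations


# Hypothetical review queue.
queue = [PullRequest("dana", 120), PullRequest("dana", 80), PullRequest("dana", 300)]

print(guardrail_violations(PullRequest("dana", 950), queue))
print(guardrail_violations(PullRequest("lee", 150), queue))  # []
```

Returning a list of reasons rather than a single boolean lets the check explain to the author exactly which limit to address before resubmitting.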