The boardroom silence that follows a proposal for aggressive Artificial Intelligence adoption often masks a dangerous misconception that safety resides in the shadows of industry consensus. This hesitation is frequently presented as a calculated move to avoid the pitfalls of early adoption, yet it overlooks the reality that the technological landscape has shifted from a linear progression to an exponential surge. In this environment, the traditional luxury of observation is no longer a protective shield but a vulnerability that leaves an organization exposed to rapid obsolescence. Decisions made today determine the trajectory of a company for the next decade, and those choosing to wait for a standardized manual are effectively deciding to let their competitors dictate the terms of their future existence.

The High Price of the “Wait and See” Strategy

In the corporate world, caution is often dressed up as wisdom, yet in the context of Artificial Intelligence, this hesitation is a silent killer of competitive advantage. Many executives believe that waiting for established frameworks and industry “best practices” is the safest route to avoid costly mistakes. This mindset ignores a harsh reality: by the time a playbook is written, the game has already been won by those who dared to play without one. Waiting for a manual doesn’t mitigate risk; it ensures an organization is merely following a script authored by its most aggressive competitors. The speed at which machine learning models iterate means that a delay of even a few months can translate into a multi-year gap in operational maturity and data refinement.

The psychological comfort of the “wait and see” approach is rooted in legacy thinking where technology was a secondary support function rather than the core engine of value creation. In previous decades, a company could afford to let others test the waters of a new ERP system or cloud infrastructure because the underlying business logic remained relatively static. However, AI is not a static tool; it is a dynamic capability that improves through active utilization and the ingestion of proprietary data. By staying on the sidelines, a business is not just missing out on a tool; it is failing to train its organizational “brain” while its rivals are sharpening theirs every single day. This creates a divergence in capability that becomes increasingly difficult to bridge as the leaders pull further ahead.

Furthermore, the belief that there is a “safe” time to enter the AI arena ignores the compounding nature of the technology. Early movers are not just gaining a temporary lead; they are building foundational data loops that provide a recursive advantage. Every interaction and every automated process generates insights that further refine the AI, leading to even greater efficiencies and better customer outcomes. Those who delay their entry find themselves starting at zero while their competitors are already benefiting from the cumulative interest of their early efforts. This dynamic turns a temporary delay into a permanent disadvantage, as the laggards find it nearly impossible to replicate the deep integration and cultural adaptation that come only through years of iterative practice.

Drawing Lessons from the History of Technological Disruption

The history of enterprise technology is littered with the remains of companies that waited for certainty before committing to a new direction. In the early days of online banking, while most institutions debated security protocols and consumer readiness, Wells Fargo pioneered the space and captured the mindshare of a generation. This proactive stance allowed it to define the user experience and establish a standard that others were eventually forced to mimic. The pattern repeated with the rise of streaming services and mobile commerce, where the dominant players were not those who waited for the “right” moment, but those who recognized that the moment is defined by the first to act decisively.

AI follows this same historical script but moves at an unprecedented velocity that makes previous disruptions look glacial in comparison. The current shift is not a gradual digital transition; it is a fundamental reordering of how value is created and captured in the global economy. Organizations that prioritize bureaucratic caution over iterative learning are not just losing time—they are forfeiting their market standing to “AI-native” newcomers who are unburdened by legacy systems or rigid traditional frameworks. These new entrants do not view AI as an add-on; they view it as the prerequisite for their entire existence, allowing them to operate with a level of agility and precision that established firms find impossible to match.

The lesson from history is that technological disruption does not wait for a consensus to form among the incumbents. When the shift from horse-drawn carriages to automobiles occurred, the leading carriage makers did not fail because they lacked the resources to build cars; they failed because they were too invested in the “best practices” of the carriage industry to pivot effectively. Similarly, in the modern landscape, the greatest risk is not the failure of an AI project, but the failure to adapt the organizational structure to a new reality. Waiting for the industry to reach a point of stability is a fool’s errand because, in a world of continuous innovation, stability is an illusion that only exists in the rearview mirror of those who have already been disrupted.

The Triple Threat: Brand Erosion, Margin Pressure, and AI-Native Competitors

The risk of delayed AI adoption manifests in three distinct ways that can cripple a business long before it realizes it is in trouble. First, there is the “structural profitability squeeze,” where early adopters use AI to lower operating costs and increase employee leverage. These firms use those higher margins to reinvest in further innovation or to lower prices, putting an unsustainable strain on laggards who struggle to maintain basic viability. As the cost of intelligence drops for the leaders, the fixed costs of the traditional firms become a lead weight that prevents them from competing on price or service quality. This squeeze is often the first visible sign that a company has waited too long to modernize its core operations.

Second, brand relevance erodes invisibly but surely; customers may not ask for AI, but they gravitate toward the faster response times and hyper-personalized experiences that only AI-driven firms can provide. In a world where a customer can receive an instant, accurate answer from a competitor, waiting twenty-four hours for a human response from a legacy brand feels like an ancient inconvenience. This shift in customer expectations happens silently, as the baseline for “good service” is rewritten by those using AI to anticipate needs before they are even expressed. Once a brand is perceived as “old” or “slow,” regaining that lost prestige is a monumental task that often requires more resources than the company has left.

Finally, AI-native competitors pose a unique threat because they do not ask how an industry currently works—they use AI to imagine how it should work, rendering traditional business models obsolete overnight. These companies are built from the ground up to leverage automated decision-making and predictive analytics, allowing them to scale with a fraction of the headcount required by traditional firms. They don’t just compete with established players; they change the rules of the game so that the old players can no longer play. While an established firm is busy drafting a policy on the ethical use of chatbots, an AI-native startup is using generative models to automate its entire sales pipeline and customer support, capturing market share with a speed that defies traditional business logic.

Beyond the Fallacy: Why Best Practices Are Lagging Indicators

Veteran cybersecurity expert Jeromie Jackson argues that “best practices” are, by definition, lagging indicators: they describe what worked in the past, not what will win in the future. If a company waits until a practice is codified by a standards body, it is essentially adopting a strategy that is already common knowledge and likely already factored into the market. McKinsey research supports this, showing that the gap between AI leaders and laggards compounds over time, creating a “winner-takes-most” dynamic. This suggests that the real value lies in the experimental phase, where a company can discover unique applications and efficiencies that are not yet part of the general industry consensus.

True institutional maturity doesn’t come from reading white papers or waiting for NIST and OWASP to finalize every guideline; it comes from “learning in public.” Leaders who embrace early adoption gain strategic fluency and the ability to shape governance policy from a position of experience, rather than theory. They understand the nuances of model hallucination, data privacy, and algorithmic bias because they have encountered these issues in real-world scenarios. This hands-on experience allows them to build more robust and practical guardrails than any committee could ever dream up in a vacuum. Those who wait for the guidelines are essentially letting their competitors write the laws that they will eventually have to follow.

The reliance on external best practices also creates a dangerous “check-the-box” mentality that can stifle genuine innovation. When an organization prioritizes compliance with a static list of rules over the dynamic pursuit of excellence, it loses the creative spark necessary to thrive in a rapidly changing environment. The most successful organizations understand that governance is not a hurdle to be cleared once, but a continuous process of refinement that must be integrated into the development cycle itself. By the time a “best practice” is widely accepted, the true innovators have already moved on to the next frontier, leaving the cautious followers to fight over the scraps of a market they no longer lead.

Building the AI Muscle: A Framework for Responsible Momentum

To navigate this transition, organizations must pivot from a search for perfection to a strategy of controlled experimentation. This begins with identifying specific, high-impact use cases to understand data flow and model behavior in real-world environments. Businesses should focus on building internal literacy, ensuring the workforce is prepared to work alongside AI rather than being replaced by it. This educational component is essential for demystifying the technology and fostering a culture of collaboration. Companies that succeed are those that treat AI as a partner in productivity, encouraging employees at all levels to find ways to incorporate these tools into their daily workflows to enhance their own output.

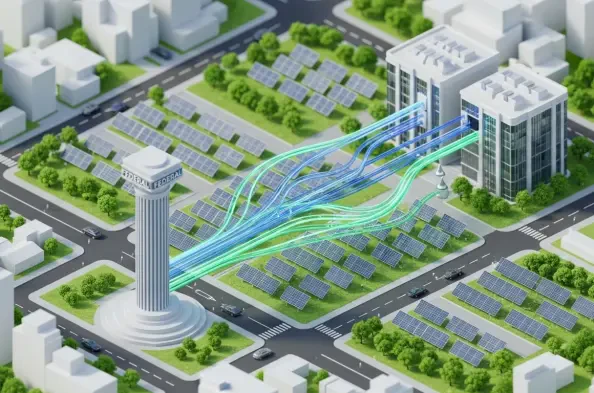

Developing “institutional intuition” is equally critical. By testing tools early, a company learns where governance might break and how to fix it before a crisis occurs. This proactive approach to risk management is far more effective than passive waiting. The goal is not reckless deployment, but the development of a functional AI muscle that allows the organization to act with momentum rather than hesitation. The more leaders experiment, the better they become at identifying which risks are worth taking and which require more stringent controls, leading to a more nuanced and effective oversight strategy.

The shift toward a more agile mindset allows companies to integrate AI into the very fabric of their operations, moving beyond isolated pilots to full-scale transformations. The most valuable insights often come from the intersection of different departments, where data silos are broken down to create a more holistic view of the business. This integration requires a fundamental change in leadership style, moving away from top-down mandates toward a more facilitative approach that empowers teams to innovate. Ultimately, the organizations that move early do not just adopt a new technology; they build a more resilient and adaptive business model that is prepared for whatever challenges the future might hold. By prioritizing action and learning, they ensure that they are the ones setting the standards, rather than the ones struggling to meet them.