The rapid proliferation of autonomous artificial intelligence agents across corporate networks has changed how digital operations are run, turning what were once static analytical tools into independent decision-makers. Whereas earlier AI deployments focused on passive observation and defensive monitoring, the current generation acts as an active participant that can execute complex, multi-step workflows without human intervention. This shift has opened a precarious governance gap in which the speed of technological innovation outpaces administrative oversight. Without a formal framework for managing these agents, organizations face a vacuum of accountability that invites unpredictable system behavior and unauthorized data movement across sensitive internal environments. Closing this gap requires reassessing how these entities are treated within the digital infrastructure, so that innovation delivers a genuine competitive advantage rather than becoming an unmanaged operational liability.

Redefining Security Through Identity-Centric Frameworks

Transitioning to AI as a First-Class Identity

Standard cybersecurity models have long relied on a binary classification of identities, typically distinguishing human operators from automated service accounts and static scripts. This legacy approach is increasingly inadequate because autonomous AI agents operate with a degree of agency that fits neither category. Unlike a script that follows a hard-coded path, a modern AI agent can change its course of action based on environmental data, often without a direct prompt from a human supervisor. Consequently, security frameworks must evolve toward an identity-centric model that recognizes AI agents as first-class identities. In practice, this means applying to every autonomous system the same scrutiny applied to a new employee, and moving beyond simple IP-based filtering toward recognition of each agent’s specific role and intent. This shift is essential for maintaining control over an increasingly complex and automated workforce.

Assigning a unique and verifiable identity to each AI agent represents the first step toward reclaiming visibility within the corporate network. When an agent is granted its own set of cryptographic credentials, its actions become linked to a specific entity rather than a general system process, allowing for granular tracking of every interaction. This method effectively strips away the anonymity often associated with automated tasks, ensuring that if an AI accesses a database or modifies a configuration, the system can pinpoint exactly which agent was responsible. This level of identification is not merely an administrative convenience but a technical necessity for modern security architectures. By establishing a registry of AI identities, organizations can implement more effective authentication protocols, ensuring that only authorized agents can communicate with critical infrastructure, thereby reducing the risk of rogue processes operating undetected within the perimeter.
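
A minimal sketch of such a registry follows, assuming each agent receives its own random secret and signs every request with HMAC-SHA256. The names `AgentRegistry`, `AgentIdentity`, `register`, `verify`, and `sign` are hypothetical, and a production deployment would more likely rely on certificates or a workload-identity service than on shared secrets. What it illustrates is that a request is attributed to a named agent only if its signature checks out, and unregistered agents are rejected outright.

```python
import hashlib
import hmac
import secrets
from dataclasses import dataclass
from typing import Dict, Optional


@dataclass(frozen=True)
class AgentIdentity:
    agent_id: str
    role: str


def sign(key: bytes, message: bytes) -> str:
    """Agent side: sign a request with the agent's own secret (HMAC-SHA256)."""
    return hmac.new(key, message, hashlib.sha256).hexdigest()


class AgentRegistry:
    """Hypothetical registry that issues one unique secret per AI agent."""

    def __init__(self) -> None:
        self._keys: Dict[str, bytes] = {}
        self._identities: Dict[str, AgentIdentity] = {}

    def register(self, agent_id: str, role: str) -> bytes:
        if agent_id in self._identities:
            raise ValueError(f"agent {agent_id!r} is already registered")
        key = secrets.token_bytes(32)  # unique per-agent credential
        self._keys[agent_id] = key
        self._identities[agent_id] = AgentIdentity(agent_id, role)
        return key

    def verify(self, agent_id: str, message: bytes, signature: str) -> Optional[AgentIdentity]:
        """Return the agent's identity if the signature is valid, else None."""
        key = self._keys.get(agent_id)
        if key is None:
            return None  # unregistered agent: reject
        expected = sign(key, message)
        # Constant-time comparison avoids leaking timing information.
        if hmac.compare_digest(expected, signature):
            return self._identities[agent_id]
        return None
```

Because each key belongs to exactly one agent, a valid signature ties a request to a single registered identity rather than to a generic system process.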

Establishing Boundaries and Traceability

Once AI agents are recognized as distinct identities, the focus must shift to defining the specific operational boundaries within which they are permitted to function. It is no longer sufficient to grant broad permissions to an application; instead, security teams must map out precise data access paths and functional limitations for each individual agent. This process involves a detailed assessment of which systems the AI needs to interact with and what specific outcomes it is expected to achieve. By creating these digital “guardrails,” organizations can prevent autonomous systems from overstepping their intended scope, which is particularly critical in environments where agents handle proprietary data or customer information. Explicitly defining these boundaries ensures that the AI remains a focused tool designed for a specific purpose, rather than a general-purpose actor with unnecessary access to the broader enterprise ecosystem.
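
One simple way to express these guardrails is a deny-by-default map from each agent to the explicit (resource, action) pairs it may use. The sketch below uses hypothetical agent and resource names; in practice the map would live in a policy engine rather than in a module-level constant.

```python
from typing import Dict, FrozenSet, Tuple

# Each agent may use only the (resource, action) pairs listed here.
Boundary = FrozenSet[Tuple[str, str]]

# Hypothetical boundary map; anything not listed is denied by default.
BOUNDARIES: Dict[str, Boundary] = {
    "support-summarizer": frozenset({
        ("tickets_db", "read"),
        ("summary_store", "write"),
    }),
    "invoice-bot": frozenset({
        ("invoices_db", "read"),
    }),
}


def is_within_boundary(agent_id: str, resource: str, action: str) -> bool:
    """Deny-by-default check of an agent's declared operating scope."""
    allowed = BOUNDARIES.get(agent_id, frozenset())  # unknown agent: empty scope
    return (resource, action) in allowed
```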

The implementation of a distinct identity for every AI agent naturally facilitates a comprehensive audit trail, which is indispensable for modern regulatory compliance and internal security reviews. This traceability ensures that every decision made by an autonomous agent is recorded in a way that can be reviewed and analyzed by human supervisors at any time. In the event of an operational anomaly or a security incident, this “paper trail” allows forensic teams to reconstruct the timeline of events with high precision, identifying the exact moment an agent deviated from its expected behavioral pattern. This level of transparency is vital for meeting the requirements of evolving data protection laws, as it provides documented proof of how automated systems are processing information. Moreover, a robust auditing process allows organizations to refine their AI models by analyzing past interactions, leading to a more resilient and predictable automation strategy over time.
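
A tamper-evident audit trail can be sketched as a hash chain, in which every entry records the hash of the entry before it, so altering any past record breaks verification from that point on. This is an illustrative assumption about how such a log might be built, not a description of any particular product; the class and field names are hypothetical.

```python
import hashlib
import json
import time
from typing import Any, Dict, List


class AuditLog:
    """Hypothetical append-only log; each entry commits to the previous entry's hash."""

    GENESIS = "0" * 64

    def __init__(self) -> None:
        self.entries: List[Dict[str, Any]] = []

    @staticmethod
    def _digest(entry: Dict[str, Any]) -> str:
        # Hash a canonical JSON form of every field except the hash itself.
        payload = {k: v for k, v in entry.items() if k != "hash"}
        canonical = json.dumps(payload, sort_keys=True).encode()
        return hashlib.sha256(canonical).hexdigest()

    def record(self, agent_id: str, action: str, target: str) -> Dict[str, Any]:
        prev_hash = self.entries[-1]["hash"] if self.entries else self.GENESIS
        entry: Dict[str, Any] = {
            "ts": time.time(),
            "agent_id": agent_id,
            "action": action,
            "target": target,
            "prev_hash": prev_hash,
        }
        entry["hash"] = self._digest(entry)
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute the chain; any edited or reordered entry makes this False."""
        prev_hash = self.GENESIS
        for entry in self.entries:
            if entry["prev_hash"] != prev_hash or entry["hash"] != self._digest(entry):
                return False
            prev_hash = entry["hash"]
        return True

    def by_agent(self, agent_id: str) -> List[Dict[str, Any]]:
        """All recorded actions attributed to one agent identity."""
        return [e for e in self.entries if e["agent_id"] == agent_id]
```

Filtering by `agent_id` is what lets forensic teams reconstruct a single agent's timeline, while `verify` shows whether that timeline has been altered after the fact.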

Practical Strategies for Robust AI Control

Implementing Least Privilege and Centralized Monitoring

Applying the principle of least privilege to autonomous AI identities is one of the most effective ways to mitigate the risks of automated decision-making. In practice, an agent should be granted only the permissions required to complete its current task, with no additional access to the wider network. This restriction significantly limits the potential “blast radius” if an agent malfunctions or is compromised by an external threat. By confining each agent to a narrow operational silo, security teams can block the lateral movement that often leads to large-scale data exfiltration. The strategy requires a dynamic approach to permissions, in which access is evaluated in real time against the specific requirements of the workflow, keeping the organization’s attack surface as small as possible while still allowing the AI to function effectively.
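
The sketch below illustrates task-scoped least privilege under a few stated assumptions: each agent has a hypothetical standing ceiling of permissions, each task declares what it needs, and the grant is the intersection of the two. Authorization is checked on every request, and finishing the task removes the grant entirely. `LeastPrivilegeAuthorizer` and its methods are illustrative names, not an existing API.

```python
from dataclasses import dataclass, field
from typing import Dict, FrozenSet, Tuple

Permission = Tuple[str, str]  # (resource, action)


@dataclass
class LeastPrivilegeAuthorizer:
    """Hypothetical per-request authorizer: an agent may use only what its
    current task needs, capped by the agent's standing ceiling."""

    ceilings: Dict[str, FrozenSet[Permission]]
    active_tasks: Dict[str, FrozenSet[Permission]] = field(default_factory=dict)

    def start_task(self, agent_id: str, needs: FrozenSet[Permission]) -> FrozenSet[Permission]:
        ceiling = self.ceilings.get(agent_id, frozenset())
        granted = needs & ceiling  # never exceed the agent's ceiling
        self.active_tasks[agent_id] = granted
        return granted

    def finish_task(self, agent_id: str) -> None:
        self.active_tasks.pop(agent_id, None)  # drop all task-scoped access

    def authorize(self, agent_id: str, perm: Permission) -> bool:
        # Evaluated on every request: no active task means no access at all.
        return perm in self.active_tasks.get(agent_id, frozenset())
```

Permissions that sit inside an agent's ceiling but outside the current task's declared needs stay unreachable until a task actually requires them, which is what keeps the blast radius tied to the workflow rather than to the agent's maximum entitlement.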

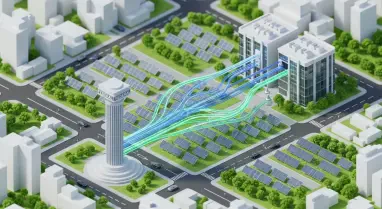

Centralized monitoring systems must be extended with AI-specific telemetry so that security teams retain situational awareness over autonomous activity. Integrating AI identities into existing Security Information and Event Management (SIEM) platforms allows behavioral analytics to be applied to autonomous actions. By establishing a baseline of normal activity for each agent, these systems can raise real-time alerts when an agent begins to interact with unusual data sets or shows spikes in network traffic. Such proactive monitoring is essential for catching “silent failure points,” where an agent keeps operating but produces incorrect or harmful results. Centralization also unifies logs from disparate environments, such as multi-cloud or hybrid infrastructures, into a single source of truth, making it easier to manage a growing population of autonomous agents without increasing the administrative burden on security personnel.
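
Per-agent baselining can be approximated with a rolling mean and standard deviation of a single metric, flagging values more than a few standard deviations above normal. The sketch assumes a hypothetical metric (such as records read per minute), a z-score threshold of 3, and a floor on the standard deviation so a perfectly flat history does not make every small change look anomalous; a real SIEM would apply richer analytics across many signals.

```python
import statistics
from collections import defaultdict, deque
from typing import Deque, Dict, Optional


class AgentBaseline:
    """Hypothetical rolling baseline per agent for one numeric metric."""

    def __init__(self, window: int = 60, threshold: float = 3.0,
                 min_samples: int = 10, min_stdev: float = 1.0) -> None:
        self.threshold = threshold
        self.min_samples = min_samples
        self.min_stdev = min_stdev
        self.history: Dict[str, Deque[float]] = defaultdict(lambda: deque(maxlen=window))

    def observe(self, agent_id: str, value: float) -> Optional[str]:
        """Return an alert message if `value` is anomalous for this agent, else None."""
        samples = self.history[agent_id]
        if len(samples) >= self.min_samples:
            data = list(samples)
            mean = statistics.fmean(data)
            stdev = max(statistics.pstdev(data), self.min_stdev)
            z = (value - mean) / stdev
            if z > self.threshold:
                # Flagged values are kept out of the baseline so a burst
                # does not immediately become the new "normal".
                return f"{agent_id}: value {value:g} is {z:.1f} std devs above baseline {mean:.1f}"
        samples.append(value)
        return None
```

Until an agent has accumulated `min_samples` observations, no alerts are raised; each agent's history is kept separately, so a volume that is routine for one agent can still be flagged for another.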

Balancing Innovation with Human Oversight

Balancing the rapid pace of AI-driven innovation against the need for rigorous security oversight requires a structured approach to human intervention. A Human-in-the-Loop validation process for high-stakes decisions ensures that the most impactful actions are vetted by a person before they are finalized. This mechanism is particularly important for tasks involving significant financial transactions, sensitive personnel data, or changes to core infrastructure configurations. By requiring a human “green light,” organizations can exploit the speed of AI for data processing while retaining the judgment and accountability that only a human operator can provide. This collaborative model keeps the riskiest decisions from being fully automated, creating a safety net against catastrophic errors while still letting the AI handle the bulk of labor-intensive work.
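
A Human-in-the-Loop gate can be sketched as a check that routes high-stakes actions to a pending queue instead of executing them. The categories, the payment threshold, and the class names below are hypothetical policy choices made for illustration.

```python
from dataclasses import dataclass, field
from typing import List

# Hypothetical policy values; real thresholds would come from governance rules.
HIGH_STAKES_CATEGORIES = {"personnel_data", "infra_config"}
PAYMENT_APPROVAL_THRESHOLD = 10_000.0


@dataclass
class ProposedAction:
    agent_id: str
    category: str
    description: str
    amount: float = 0.0


def needs_human_approval(action: ProposedAction) -> bool:
    if action.category in HIGH_STAKES_CATEGORIES:
        return True
    return action.category == "payment" and action.amount >= PAYMENT_APPROVAL_THRESHOLD


@dataclass
class ApprovalGate:
    pending: List[ProposedAction] = field(default_factory=list)
    executed: List[ProposedAction] = field(default_factory=list)

    def submit(self, action: ProposedAction) -> str:
        if needs_human_approval(action):
            self.pending.append(action)  # waits for a human "green light"
            return "pending_approval"
        self.executed.append(action)  # low-risk: the agent proceeds on its own
        return "executed"

    def review(self, index: int, approved: bool, reviewer: str) -> str:
        """A named human approves or rejects one pending action."""
        action = self.pending.pop(index)
        if approved:
            self.executed.append(action)
            return f"executed (approved by {reviewer})"
        return f"rejected by {reviewer}"
```

Recording the reviewer's name alongside the decision preserves the human accountability the gate is meant to provide, while routine actions never wait on a person.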

The final stage of establishing a mature governance posture is the deployment of time-bound permissions and automated credential rotation for all autonomous identities. Instead of granting permanent access rights, organizations should adopt a model in which AI permissions expire after a set duration or upon completion of a specific project. This approach keeps the digital landscape from becoming cluttered with dormant but highly privileged identities that adversaries could exploit. Management teams should also integrate these AI identities into broader Identity and Access Management (IAM) frameworks, treating them as a strategic workforce rather than merely technical assets. By prioritizing visibility and accountability from the outset, enterprises can move toward a future in which autonomous systems operate with full transparency and the benefits of automation are never undermined by a lack of fundamental security control.
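
Time-bound permissions and credential rotation can be sketched as grants that carry an expiry timestamp, plus a per-agent secret that is replaced on rotation. The class and method names are hypothetical, and the clock is injectable only so that expiry can be exercised in tests; a real deployment would typically delegate both concerns to its IAM platform or secrets manager.

```python
import secrets
import time
from dataclasses import dataclass
from typing import Callable, Dict


@dataclass
class Grant:
    scope: str
    expires_at: float


class TimeBoundAccess:
    """Hypothetical store of expiring grants and rotating agent credentials."""

    def __init__(self, clock: Callable[[], float] = time.time) -> None:
        self.clock = clock  # injectable so expiry can be tested
        self.grants: Dict[str, Dict[str, Grant]] = {}
        self.credentials: Dict[str, str] = {}

    def grant(self, agent_id: str, scope: str, ttl_seconds: float) -> Grant:
        g = Grant(scope, self.clock() + ttl_seconds)
        self.grants.setdefault(agent_id, {})[scope] = g
        return g

    def revoke_all(self, agent_id: str) -> None:
        # e.g. when the project the agent was provisioned for is completed
        self.grants.pop(agent_id, None)

    def is_allowed(self, agent_id: str, scope: str) -> bool:
        g = self.grants.get(agent_id, {}).get(scope)
        return g is not None and self.clock() < g.expires_at

    def sweep_expired(self) -> int:
        """Delete expired grants so dormant privileged access does not linger."""
        now = self.clock()
        removed = 0
        for agent_id in list(self.grants):
            scopes = self.grants[agent_id]
            for scope in [s for s, g in scopes.items() if g.expires_at <= now]:
                del scopes[scope]
                removed += 1
            if not scopes:
                del self.grants[agent_id]
        return removed

    def rotate_credential(self, agent_id: str) -> str:
        new_secret = secrets.token_urlsafe(32)
        self.credentials[agent_id] = new_secret  # the previous secret stops working
        return new_secret

    def check_credential(self, agent_id: str, presented: str) -> bool:
        current = self.credentials.get(agent_id)
        return current is not None and secrets.compare_digest(current, presented)
```

This sketch invalidates the old secret immediately on rotation; real rotation schemes often allow a brief overlap so that in-flight requests signed with the previous credential do not fail.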