Software that can navigate a personal computer much as a human user would marks a fundamental shift in the relationship between individuals and their digital tools. Anthropic recently unveiled a significant update to its AI assistant, Claude, which lets Pro and Max subscribers control their desktop environments remotely from mobile devices through a research preview titled “computer use.” This capability allows the system to execute sophisticated workflows such as synthesizing data from local files, managing complex spreadsheets, and exporting polished presentations without constant human intervention. By integrating specialized connectors like Gmail, Slack, and Google Drive, Claude bridges the gap between static chat interfaces and active operating system management. This development represents a milestone in the evolution of agentic AI, moving beyond text generation toward a model where the AI acts as a functional extension of the user across diverse software ecosystems.

The Technological Shift: Toward Fully Autonomous Agents

The transition toward autonomous agents that can act on a user’s behalf signifies the beginning of what many industry leaders describe as the most transformative era of computing. Jensen Huang, the CEO of Nvidia, has frequently positioned agentic AI as the natural successor to the initial ChatGPT wave, emphasizing that these systems are no longer just conversational partners but active problem solvers. To support this shift, companies are moving rapidly to provide the infrastructure necessary for these agents to operate within enterprise environments safely. Nvidia’s launch of NemoClaw serves as a prime example of this trend, offering a platform designed to supply the security and privacy controls that standard open-source agents often lack. By focusing on enterprise-grade reliability, such platforms allow businesses to explore the potential of autonomous software without the inherent risks of unmanaged experimentation. This systemic move toward agency reflects a broader strategy to embed AI deeply into core business logic.

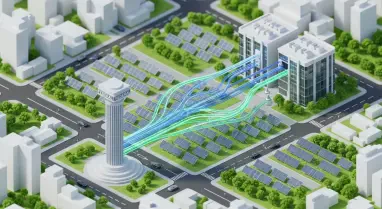

While the promise of increased productivity is driving adoption, the technical execution of computer-level control requires a sophisticated understanding of graphical user interfaces. Claude achieves this by interpreting visual data from the screen, effectively “seeing” the buttons, text fields, and menus that a human operator would use to complete a task. This approach allows the AI to interact with legacy software and local applications that do not have modern API support, extending its utility to virtually any program installed on a workstation. The ability to chain these interactions together into a coherent sequence—such as pulling data from a PDF, entering it into a database, and then sending a summary via an internal messaging app—is what distinguishes agentic AI from simple automation scripts. As these capabilities continue to mature between 2026 and 2028, the expectation is that workflows once requiring hours of manual labor will be condensed into seconds of supervised AI activity.
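The observe, decide, act cycle described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not Anthropic's implementation: the `Action` schema, `run_agent_loop`, and the three callbacks are hypothetical names, the "screenshot" is a plain string, and the model is replaced by a fixed script so the example runs on its own.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Action:
    """One GUI step the agent wants to perform (hypothetical schema)."""
    kind: str          # e.g. "click", "type", or "done"
    target: str = ""   # label of the on-screen element
    text: str = ""     # text to type, if any


def run_agent_loop(
    capture_screen: Callable[[], str],
    choose_action: Callable[[str, List[Action]], Action],
    perform: Callable[[Action], None],
    max_steps: int = 20,
) -> List[Action]:
    """Repeat observe -> decide -> act until the decider reports it is done."""
    history: List[Action] = []
    for _ in range(max_steps):
        screen = capture_screen()                # observe: stand-in for a screenshot
        action = choose_action(screen, history)  # decide: stand-in for the model
        if action.kind == "done":
            break
        perform(action)                          # act: stand-in for mouse/keyboard input
        history.append(action)
    return history


def demo() -> List[str]:
    """Chain three steps: copy from a PDF, fill a database form, send a summary."""
    screens = ["pdf viewer", "database form", "chat window"]
    script = [
        Action("click", "Copy total"),
        Action("type", "Amount field", "1200"),
        Action("click", "Send summary"),
    ]
    log: List[str] = []

    def capture_screen() -> str:
        # The visible "screen" advances as actions are performed.
        return screens[min(len(log), len(screens) - 1)]

    def choose_action(screen: str, history: List[Action]) -> Action:
        # A real agent would inspect `screen`; this stub just follows the script.
        return script[len(history)] if len(history) < len(script) else Action("done")

    def perform(action: Action) -> None:
        log.append(f"{action.kind}:{action.target}")

    run_agent_loop(capture_screen, choose_action, perform)
    return log
```

Calling `demo()` runs the three scripted steps in order and then stops when the stub reports `done`. The `max_steps` cap is a design choice for the sketch: it keeps a confused decider from looping indefinitely.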

Security and Governance: Managing the Risks of Autonomy

Despite the impressive technical feats demonstrated by these new AI capabilities, the move toward autonomous desktop control introduces a complex set of security and privacy concerns. Because the system operates by taking frequent screenshots to navigate the environment, it inadvertently captures everything visible on the screen, including sensitive notifications or background documents. Anthropic has proactively advised users to close any files containing personal medical data or financial records before initiating the computer use feature to mitigate the risk of data leakage. Furthermore, while the AI is specifically trained to avoid sensitive activities like facial recognition or unauthorized stock trading, the potential for unintended consequences remains a high-priority topic for cybersecurity professionals. The tension between the desire for seamless automation and the necessity of data sovereignty is creating a cautious atmosphere among early adopters who must now weigh efficiency gains against potential liabilities.

The governance gap remains one of the most significant hurdles for the widespread deployment of autonomous agents within large organizations. A recent study by Deloitte highlighted this discrepancy, revealing that while the vast majority of companies intend to implement AI agents within the next two years, only twenty percent currently possess a mature governance model to manage them. This lack of oversight could lead to scenarios where agents perform actions that violate company policy or inadvertently expose proprietary information to third-party models. To address this, organizations are beginning to implement “human-in-the-loop” protocols, where the AI must request explicit permission before executing high-stakes tasks or accessing restricted directories. Strengthening these internal frameworks is essential for any business that hopes to harness the power of agentic AI without compromising its foundational security posture. Reliability is not just about the AI’s performance but also about the safety of the environment it inhabits.
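A human-in-the-loop protocol of the kind described above might look roughly like the following Python sketch. It is a minimal illustration, not a reference to any vendor's product: the action names in `HIGH_STAKES`, the paths in `RESTRICTED_DIRS`, and the `approve` callback are all assumptions chosen for the example.

```python
from pathlib import PurePosixPath
from typing import Callable, Iterable

# Hypothetical policy: which actions and directories require a human sign-off.
HIGH_STAKES = {"send_email", "delete_file", "make_payment"}
RESTRICTED_DIRS = ("/finance", "/hr")


def is_restricted(path: str, restricted: Iterable[str] = RESTRICTED_DIRS) -> bool:
    """True if `path` is a restricted directory or lies anywhere beneath one."""
    p = PurePosixPath(path)
    return any(
        p == PurePosixPath(r) or PurePosixPath(r) in p.parents
        for r in restricted
    )


def needs_approval(action: str, path: str = "") -> bool:
    """An action needs approval if it is high-stakes or touches a restricted path."""
    return action in HIGH_STAKES or (bool(path) and is_restricted(path))


def execute(action: str, path: str, approve: Callable[[str], bool]) -> str:
    """Run the action only if policy allows it or a human approves the request."""
    if needs_approval(action, path) and not approve(f"{action} {path}".strip()):
        return "blocked"
    return "executed"  # a real agent would perform the action here
```

In practice `approve` would prompt a person, for example through a chat message or a dialog; here it is any function that returns `True` or `False`. Matching on path components rather than string prefixes means `/financex/report.txt` is not mistaken for something inside `/finance`.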

Strategic Integration: The Path to Operational Excellence

The introduction of computer control for AI assistants is forcing a fundamental reevaluation of how enterprise software ecosystems function. Analysts observe a distinct push and pull between AI developers who seek rapid innovation and corporate leaders who prioritize the protection of sensitive data above all else. While some industry observers speculate that autonomous agents might eventually replace traditional Software-as-a-Service offerings, the consensus is that such a transformation is still several years away. Established providers maintain their dominance by offering built-in security features that early-stage AI agents cannot yet duplicate. To move forward, organizations are focusing on creating hybrid environments where AI agents operate within strictly defined boundaries. Leaders are prioritizing the development of clear ethical guidelines and technical safeguards so that the adoption of these tools enhances operational capacity while maintaining strict adherence to privacy standards and regulatory requirements.