The modern Chief Information Officer no longer functions as a mere custodian of internal hardware but instead serves as a high-stakes navigator through an endless storm of digital information that traditional infrastructure was never designed to endure. In an era where every micro-transaction and server log contributes to an insurmountable volume of unstructured noise, the role has fundamentally shifted from managing storage to managing existential risk. This transformation is driven by a necessity to convert overwhelming data streams into precise, actionable intelligence that protects the organization while fueling its growth.

The current challenge centers on the reality that data, long viewed as an asset, can quickly become a liability if it is left unparsed and unprotected. For leadership, the priority has moved toward systems that offer more than raw visibility; leaders need a mechanism for real-time clarity. Artificial intelligence provides the cognitive layer needed to distill these massive datasets, turning the burden of information into a streamlined engine for decision-making rather than a source of organizational paralysis.

From Data Fatigue to Real-Time Clarity

The relentless deluge of information hitting modern servers creates a state of perpetual fatigue for human analysts and legacy systems alike. When a business generates more logs in a single day than a conventional team could review in a month, manual oversight becomes obsolete. This environment demands a shift toward automated synthesis, where AI identifies patterns within the chaos and presents a clear picture of operational health. Instead of sifting through a mountain of noise, executives can rely on systems that surface only the most critical anomalies.

By moving beyond simple aggregation, organizations can prevent data from becoming a graveyard of forgotten insights. AI enables a transition where data quality takes precedence over sheer volume, allowing the CIO to focus on strategic outcomes. This shift ensures that the information flowing through the enterprise acts as a beacon for leadership, providing the clarity required to steer through volatile market conditions with confidence and precision.

Why the Data Strategy Mandate Has Shifted

As digital environments become increasingly identity-centric, the velocity of information flow has outpaced human capacity for analysis. This creates a dangerous gap between the raw numbers that systems record and the intent behind the actions that produced them. Modern risk management requires understanding the “why” behind data movements, a question that static dashboards cannot answer. The mandate has therefore evolved toward a strategy that integrates deep security with comprehensive data synthesis.

The period from 2026 to 2028 is expected to widen the divide between organizations that treat data as a stagnant pool and those that view it as a dynamic narrative. As businesses operate across decentralized networks, a unified strategy that bridges observability and risk has become the defining challenge for the executive suite. Strategic agility now depends on the ability to interpret complex sentiments and behaviors at the speed of the network itself.

Decoding the AI Advantage: Observability and Synthesis

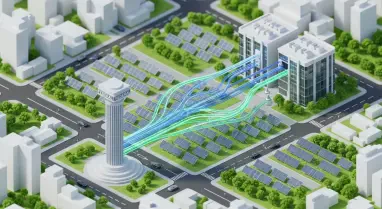

True transformation begins with deep observability, where AI systems grounded in identity-based security monitor the interactions between people and data. This level of oversight builds an understanding of normal access patterns, so subtle deviations that might signal a security breach can surface well before a conventional rule-based alarm would trigger. By focusing on the relationship between users and information, AI provides a layer of protection that adapts to emerging threats in real time.
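As a minimal illustration of the baseline idea described above, the Python sketch below compares each user's access count for the current day against that user's own history and flags large upward deviations using a z-score. The function name `flag_access_anomalies`, the input shape (per-user lists of daily access counts), the threshold of 3.0, and the floor on the standard deviation are all hypothetical choices for this example; real identity-analytics systems use far richer features and models.

```python
from statistics import mean, stdev


def flag_access_anomalies(history, today, threshold=3.0, min_sigma=1.0):
    """Flag users whose access count today is unusually high for them.

    history:   dict of user -> list of past daily access counts (hypothetical shape)
    today:     dict of user -> today's access count
    threshold: z-score above which a user is flagged (assumed default)
    min_sigma: floor on the standard deviation so very stable histories
               do not cause division by zero or extreme scores
    """
    flagged = {}
    for user, count in today.items():
        past = history.get(user, [])
        if len(past) < 2:
            # Too little history to form a baseline; skip instead of guessing.
            continue
        mu = mean(past)
        sigma = max(stdev(past), min_sigma)
        z = (count - mu) / sigma
        # One-sided check: only spikes above the user's own baseline are flagged.
        if z > threshold:
            flagged[user] = round(z, 2)
    return flagged


if __name__ == "__main__":
    history = {"alice": [10, 12, 11, 9, 10], "bob": [5, 6, 5, 7, 6]}
    today = {"alice": 11, "bob": 40}
    print(flag_access_anomalies(history, today))
```

With the sample history and counts in the `__main__` block, only `bob` is flagged. Scoring each user against their own baseline, rather than a global average, lets a spike that is unusual for one person stand out even if it would look ordinary for someone else.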

AI also bridges the historical divide between structured and unstructured data, which has long been a hurdle for comprehensive analysis. By translating business questions into queries that span both kinds of data, AI provides a unified narrative that lets leaders extract critical answers in minutes. This capability turns years of archival information into a proactive roadmap, ensuring that past data informs future performance rather than merely occupying disk space.

The Synergy of Machine Consistency and Human Intuition

The most effective risk strategies do not seek to replace human professionals but to augment their capabilities through machine consistency. While human talent brings essential context and ethical judgment, people are prone to cognitive bias and fatigue when managing tasks at massive scale. AI, by contrast, remains tireless and consistent, easing the traditional trade-off between the volume of data analyzed and the quality of the results.

Expert consensus suggests that a deterministic AI model acts as a powerful force multiplier for existing expertise. By automating the heavy lifting of data synthesis and initial threat detection, AI frees human specialists to focus on higher-order strategic planning and complex problem-solving. This partnership ensures that the organization benefits from both the relentless processing power of technology and the nuanced perspective of experienced leadership, creating a more resilient and efficient operational structure.

Implementing an AI-First Framework for Resilience

To successfully integrate AI into a risk and data strategy, a CIO must look past the novelty of the technology and focus on practical, human-centric application. A robust framework begins with a thorough assessment of existing workflows to identify where automation can provide the most immediate value. Organizations must also proactively address the risk of “shadow AI” by establishing clear governance policies that ensure all tools are trained on relevant, high-quality data that aligns with corporate standards.

Finally, long-term resilience requires a commitment to rigorous testing and continuous evaluation. A/B testing in live production environments lets teams verify that AI outputs remain accurate and serve business objectives. By layering security controls and maintaining a transparent approach to model training, executives can ensure that their technology investments provide a secure foundation for future innovation. The path forward requires a focus on data hygiene and clear ethical guidelines to govern automated decision-making. Leaders who prioritize these foundational steps are best positioned to convert their data burdens into competitive advantages and secure their organizations against the uncertainties of a digital-first economy.
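One simple way to make the A/B check concrete, assuming each AI output in both variants has been labeled correct or incorrect (for example, by human reviewers), is a two-proportion z-test on the share of correct outputs. The Python sketch below is a textbook normal-approximation test, not a method prescribed here; the function name `two_proportion_z_test`, the labeling scheme, and the sample counts are assumptions for illustration.

```python
import math


def two_proportion_z_test(correct_a, total_a, correct_b, total_b):
    """Compare the share of correct AI outputs in variant A (control) and B (candidate).

    Returns (z, two_sided_p) using the pooled normal approximation, which
    assumes reasonably large samples in both variants.
    """
    p_a = correct_a / total_a
    p_b = correct_b / total_b
    pooled = (correct_a + correct_b) / (total_a + total_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / total_a + 1 / total_b))
    if se == 0:
        # Both variants are all-correct or all-incorrect: no measurable difference.
        return 0.0, 1.0
    z = (p_b - p_a) / se
    # Standard normal CDF via erf: Phi(x) = 0.5 * (1 + erf(x / sqrt(2)))
    phi = 0.5 * (1 + math.erf(abs(z) / math.sqrt(2)))
    return z, 2 * (1 - phi)


if __name__ == "__main__":
    # Hypothetical labeled results: 850/1000 correct for A, 880/1000 for B.
    z, p = two_proportion_z_test(850, 1000, 880, 1000)
    print(f"z = {z:.2f}, p = {p:.3f}")
```

With the hypothetical counts above, the test returns a positive z (variant B scored higher) and a two-sided p-value near 0.05, a borderline result that would usually justify collecting more data before switching models.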