The journey toward a fully autonomous industrial landscape requires more than high-speed connectivity; it demands a fundamental shift in how we perceive the relationship between data, security, and human expertise. As a specialist in data protection and governance, Vernon Yai has spent years navigating the complexities of risk management and the delicate balance between safeguarding sensitive information and driving innovation. In this conversation, we explore how organizations can move beyond the “pilot purgatory” of experimental AI to implement scalable, secure, and intelligent systems that redefine industrial operations.

The discussion delves into the practicalities of the ACT Pathway, the evolution of partner ecosystems like SHAPE 2.0, and the critical need for high-quality vertical data calibration. We also address the human element, namely how to bridge the talent gap through open-source communities and specialized training, to ensure that the next generation of workers is equipped to manage systems that don’t just process information but sense and act on it in real time.

Transitioning from AI pilots to full-scale production often fails due to fragmented systems and data silos. How can organizations effectively assess high-value scenarios among thousands of options, and what specific steps ensure that vertical data is calibrated with enough security to handle critical industrial operations?

The shift from a small-scale pilot to a full production environment is often where the “fragmentation trap” catches enterprises. To navigate it, we assess more than 1,000 core production scenarios to identify exactly where AI can deliver tangible business results rather than marginal gains. Once those high-value targets are set, the focus shifts to calibrating AI models with high-quality vertical data that reflects the industry’s specific physics and logic. We protect these critical operations with a robust six-layer AI security framework. This layered defense is essential because, in an industrial setting, a data breach or a model error is not just a software glitch; it can mean a physical shutdown or a safety hazard.

A significant hurdle in industrial intelligence is the shortage of cross-domain talent. How do initiatives like open-source communities and specialized academies bridge this gap, and what practical training methods are most effective for building an industry-ready workforce that can manage autonomous decision-making systems?

We cannot achieve industrial intelligence without a workforce that understands both the code and the concrete of the factory floor. To bridge this gap, we rely heavily on the CANN open-source communities and vertical industry communities on the cloud to foster collaborative problem-solving. Our ICT Academies and hands-on practice programs are designed to move beyond theoretical knowledge, putting real tools into the hands of engineers. By providing over 20 new certification courses, we aim to certify more than 1,000 partners, ensuring they have the specialized skills to manage autonomous systems. This practical, immersive training is the only way to transform traditional IT staff into a workforce that is truly “industry-ready” for the AI era.

Scalable AI requires moving beyond experimentation into embedded network agents for fault detection and optimization. How do cloud-based tools and new certification standards empower partners to build industry-specific agents, and what are the trade-offs when integrating these automated solutions into diverse sectors like power or manufacturing?

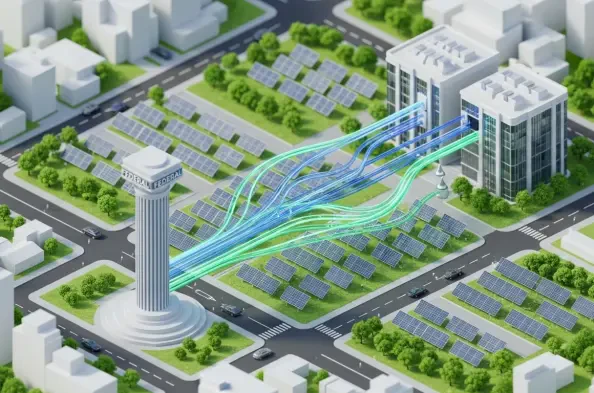

Scaling AI requires moving intelligence out of the data center and embedding it directly into network products as agents that detect faults and optimize performance in real time. We empower our partners through tools like AgentArts on the cloud, which lets them build customized agents for specific sectoral needs, such as managing power grid fluctuations or manufacturing line speeds. The trade-off usually involves balancing the speed of autonomous decision-making against the need for human oversight and rigorous certification standards. By deploying 3,000 scenario-specific experts across 38 industries, we help partners manage these trade-offs so that automation increases uptime without compromising the nuanced control that complex sectors require.

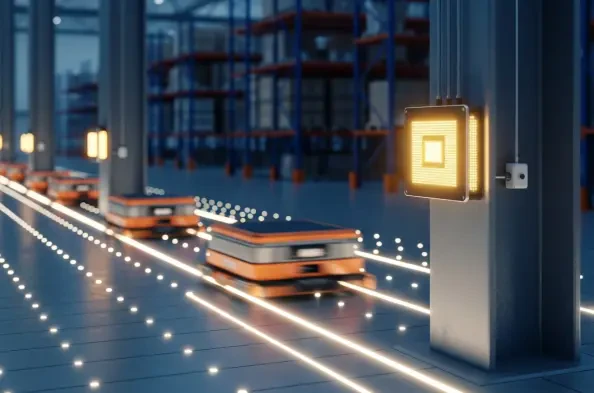

Industrial organizations are choosing between networks that simply consume data and infrastructure that senses and acts autonomously. In sectors like logistics or energy, how do digital twin implementations drive measurable gains in uptime, and what infrastructure requirements are necessary to move global showcases into standard operational practice?

The evolution from passive data consumption to autonomous sensing is best demonstrated through our 115 global industrial intelligence showcases, where digital twins play a starring role. In logistics and energy, these digital replicas allow us to simulate stressors and predict failures before they happen, leading to measurable gains in productivity and resource efficiency. To move these from “showcases” to standard practice, the underlying infrastructure must support real-time intelligence and seamless data flow across previously siloed systems. This requires a combination of intelligent infrastructure and cloud platforms that can handle the massive compute loads of digital twins while maintaining the low latency needed for “sense-and-act” operations.

What is your forecast for the future of Industrial All Intelligence?

I believe we are entering an era where the boundary between the digital model and the physical machine will virtually disappear, leading to a state of “All Intelligence” where every industrial asset is inherently smart. We will see a shift where AI is no longer an “add-on” feature but is baked into the very fabric of industrial hardware, with over 3,000 experts constantly refining these autonomous ecosystems. My forecast is that within the next few years, the standard for success will move from “how much data can we collect” to “how many autonomous decisions can our network safely execute.” This transformation will be driven by a highly specialized workforce and a partner ecosystem that prioritizes secure, vertical-specific AI over generic, one-size-fits-all solutions.