The current surge in corporate artificial intelligence integration is moving at a velocity that arguably outpaces every previous technological revolution, yet it also echoes the most chaotic periods of digital adoption. As 2026 progresses, many organizations find themselves caught in a phenomenon known as AI sprawl. In AI sprawl, decentralized and fragmented implementations proliferate across business units faster than any centralized governance structure can reasonably manage. The result is a subtle productivity trap. Individual departments may report immediate efficiency gains from localized AI tools, while the enterprise as a whole accumulates technical debt, operational fragmentation, and significant long-term financial burdens. The allure of quick wins has produced a landscape in which innovation happens in silos. That leaves a complex web of redundant systems that are increasingly difficult to maintain or integrate into a coherent corporate strategy.

Historical Cycles and the New Velocity

The Legacy of Shadow Data Systems

Looking back at the history of corporate technology, the current AI crisis bears a striking resemblance to the “shadow” data systems that plagued finance and supply chain functions a decade ago. In those cases, business teams grew frustrated by the slow pace of centralized IT departments and took matters into their own hands. They learned Structured Query Language (SQL) and built independent databases to meet their reporting needs. At major manufacturers such as Tesla, for example, finance teams developed internal workarounds to bypass disconnected accounting systems, creating a temporary fix for immediate data crises. These independent systems solved local problems. Over time, however, they became a governance nightmare of inconsistent data logic and siloed information that took years of expensive effort to reconcile. This pattern of execution outpacing governance recurs throughout enterprise technology, and it shows how the pursuit of immediate productivity often plants the seeds of future operational chaos.

Temporal Compression in the AI Age

What distinguishes the current situation from past technological shifts is the extreme compression of modern deployment cycles. The data crisis driven by independent SQL databases took several years to surface as a systemic organizational problem. The comparable cycle with autonomous AI agents is unfolding over a matter of months. This acceleration is driven by the wide availability of large language model (LLM) APIs and no-code platforms, which let non-technical staff launch sophisticated automations with minimal oversight. Consequently, technical debt now accumulates far faster and on a far larger scale than in previous decades. Organizations are no longer dealing only with disconnected spreadsheets. They are managing autonomous agents that make real-time decisions, so logic errors and conflicting outcomes spread across the entire enterprise architecture at a pace that traditional oversight committees cannot match.

The Risks of Fragmented Innovation

Identifying the Mechanics of AI Sprawl

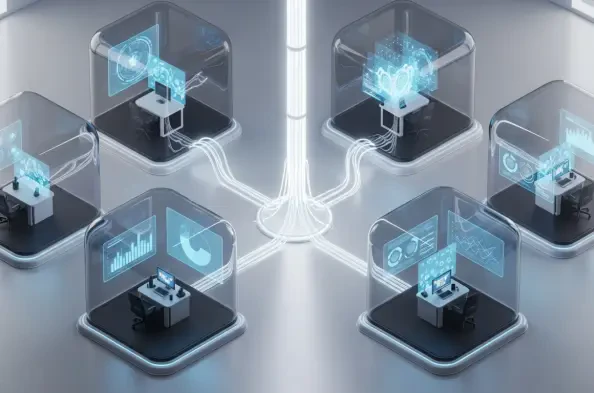

AI sprawl is fundamentally a state in which the financial and technical cost of deploying artificial intelligence tools falls faster than the organization’s ability to oversee, secure, or sustain those tools can grow. This imbalance is fueled by accessible cloud-native infrastructure and powerful pre-trained models. Together they let business units, from underwriting and claims to marketing and customer service, spin up automations in isolation. When these business-led initiatives operate without central guidance, localized efficiency gains come at the expense of a unified corporate strategy. The disparate tools cannot share data or logic, so the company eventually suffers from “drifting decisions.” Different AI systems within the same enterprise produce conflicting or incompatible results from fragmented data inputs, which undermines the reliability of the organization’s output as a whole.

Consequences of the GenAI Scramble

Current market observations reveal a widespread phenomenon often described as the “GenAI scramble.” Companies launch a flurry of redundant applications that overlap in function but lack any cohesive central oversight. In the insurance industry, for instance, it is not uncommon to find a single major carrier running more than a dozen proof-of-concept projects across its lines of business. All of them may be attempting to solve the same handful of problems, such as fraud detection or document intake. This lack of coordination wastes resources on a large scale. Redundant software licenses are purchased, and abandoned projects keep incurring cloud costs indefinitely because there is no central inventory of active AI assets. These “insidious” innovations often hide in plain sight. Business units move quickly to answer immediate competitive pressures, and IT leadership discovers these systems only when they fail a regulatory audit or break during a critical cross-departmental workflow.
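A central inventory of active AI assets need not be elaborate to expose the waste described here. The following Python sketch is only an illustration. It assumes a hypothetical `AIAsset` record whose fields (`use_case`, `monthly_cloud_cost`, `active_users_last_30d`) are invented for this example, and the sample entries are made up, not data from any real carrier. It shows how such a registry could surface two symptoms of the scramble. The first is several business units building for the same use case. The second is projects that still incur cloud costs while having no recent users.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class AIAsset:
    """One entry in a hypothetical central inventory of AI projects."""
    name: str
    business_unit: str
    use_case: str                # shared label, e.g. "fraud_detection"
    monthly_cloud_cost: float
    active_users_last_30d: int


def find_redundant_use_cases(assets):
    """Return use cases that more than one business unit is building for."""
    units_by_use_case = defaultdict(set)
    for asset in assets:
        units_by_use_case[asset.use_case].add(asset.business_unit)
    return {
        use_case: sorted(units)
        for use_case, units in units_by_use_case.items()
        if len(units) > 1
    }


def find_idle_spend(assets):
    """Return names of assets that still cost money but had no users in 30 days."""
    return [
        a.name
        for a in assets
        if a.active_users_last_30d == 0 and a.monthly_cloud_cost > 0
    ]


# Made-up sample entries for illustration only.
inventory = [
    AIAsset("claims-fraud-poc", "claims", "fraud_detection", 4200.0, 12),
    AIAsset("uw-fraud-scorer", "underwriting", "fraud_detection", 3100.0, 0),
    AIAsset("intake-ocr-bot", "claims", "document_intake", 1800.0, 40),
    AIAsset("mkt-doc-reader", "marketing", "document_intake", 950.0, 0),
]

print(find_redundant_use_cases(inventory))
# {'fraud_detection': ['claims', 'underwriting'], 'document_intake': ['claims', 'marketing']}
print(find_idle_spend(inventory))
# ['uw-fraud-scorer', 'mkt-doc-reader']
```

Keying the inventory on a shared use-case label is a design assumption. In practice, agreeing on that taxonomy across business units is itself part of the governance work.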

Structural Failures in Governance and Resource Allocation

Quantifying the Hidden Operational Toll

The financial and operational costs of AI sprawl are frequently buried across diverse budget lines. That makes them nearly impossible for executive leadership to quantify until the organization reaches a breaking point. One cost is a governance gap. Invisible or undocumented systems create severe legal risks and leave no one accountable for biased or unexplainable AI outcomes. Another is a maintenance trap that drains the organization’s innovative potential. Current industry data suggests that some companies spend over 60 percent of their total AI engineering capacity simply keeping disconnected and redundant tools running rather than building new value. This environment stalls the broader progress of digital transformation and also drives talent attrition. Top data scientists and engineers grow frustrated with patching together a disorganized landscape of legacy workarounds instead of solving novel challenges.

The Limitations of Perimeter-Based Oversight

Traditional governance models are proving fundamentally inadequate for modern AI because they treat the technology as standard enterprise software with clear, manageable perimeters. A majority of large organizations claim to be investing in AI oversight, yet only a small fraction have reached a mature stage of management. The primary reason is a fundamental “category mistake.” Leadership tries to govern AI as a point solution, like a CRM or a claims platform, rather than recognizing it as foundational infrastructure comparable to electricity or a core database. Companies that rely on slow-moving committees and manual compliance checklists perpetually lag behind the technology. By the time a traditional oversight board completes its review of a specific model, that model may already have been modified, retrained, or superseded by a newer version. This renders the perimeter-based approach to governance effectively obsolete.

Establishing a Resilient Framework for Artificial Intelligence

Transitioning from Point Solutions to Infrastructure

To effectively escape the productivity trap of AI sprawl, leadership must shift its strategic focus from governing individual AI products to governing the underlying “grid” that supports them. This approach requires standardizing the shared foundations, data lineages, and security guardrails that every AI initiative must inherit by default, rather than attempting to review every single application in isolation. Just as a city does not govern electricity by inspecting every individual light bulb, a modern corporation must manage the infrastructure that powers its AI ecosystem. This transition involves moving away from the point-solution mindset that dominated the last two decades and toward a foundational strategy that prioritizes long-term scalability. By establishing a unified environment where every new project builds upon a shared core, organizations can ensure that their technological growth remains orderly, sustainable, and capable of delivering genuine value across the entire enterprise.

Strategies for Long-Term Scalability

The path forward for organizations involves moving toward a model in which the focus shifts from reactive policy-making to proactive infrastructure development. Leaders should identify the specific political and budgetary hurdles that have prevented standardization in the past. They can then address those hurdles by centralizing the core data definitions that all AI agents are required to use. Any new project launched within the company then automatically inherits existing oversight mechanisms and data standards. It no longer starts from a blank slate and waits for a post-hoc review that may never come. By building a robust, shared AI foundation, companies can scale the benefits of artificial intelligence without collapsing under the weight of uncoordinated and redundant innovations. The aim is a system in which governance is built into the development tools themselves. That allows rapid experimentation within a safe, integrated environment and protects the organization from the long-term risks of technological fragmentation.
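To make the idea of inherited oversight more concrete, here is a minimal Python sketch of guardrails that every project receives by default. Everything in it is hypothetical and chosen for illustration, not drawn from any particular platform: the `Guardrails` fields, the data-source names, the autonomy levels, and the `project_guardrails` helper. The single rule it encodes is an assumption. A project may tighten the shared baseline without review but may not loosen it.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Guardrails:
    """Hypothetical shared defaults that every AI project inherits."""
    audit_logging: bool = True
    pii_redaction: bool = True
    # Stand-in for the centrally defined, approved data sources.
    approved_data_sources: frozenset = frozenset({"customer_master", "policy_ledger"})
    # 0 = suggest only, 1 = act with human review, 2 = fully autonomous
    max_autonomy_level: int = 1


BASELINE = Guardrails()


def project_guardrails(**overrides):
    """Return BASELINE with project overrides, rejecting any that loosen it."""
    candidate = replace(BASELINE, **overrides)
    if not (candidate.audit_logging and candidate.pii_redaction):
        raise ValueError("audit logging and PII redaction are mandatory")
    if not candidate.approved_data_sources <= BASELINE.approved_data_sources:
        raise ValueError("project uses data sources outside the shared definitions")
    if candidate.max_autonomy_level > BASELINE.max_autonomy_level:
        raise ValueError("project requests more autonomy than the baseline allows")
    return candidate


# A document-intake project narrows its data sources and autonomy: accepted.
intake = project_guardrails(
    approved_data_sources=frozenset({"policy_ledger"}),
    max_autonomy_level=0,
)
print(intake.audit_logging, intake.max_autonomy_level)  # True 0

# A project that tries to switch off logging is rejected.
try:
    project_guardrails(audit_logging=False)
except ValueError as err:
    print(err)  # audit logging and PII redaction are mandatory
```

Allowing overrides only in the stricter direction mirrors the “grid” idea. Teams can experiment freely inside the shared guardrails without waiting for a separate review, and only a request to exceed them needs escalation.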