Vernon Yai is a preeminent authority on data protection and enterprise architecture, renowned for his ability to dismantle the layers of “accidental complexity” that paralyze modern organizations. With a career spanning the evolution from early Assembler-based payroll systems to the current era of agentic AI, he has become a leading voice in the data-centric revolution, advocating for systems that prioritize transparency and structural elegance. His work focuses on shifting the enterprise mindset from managing sprawling, siloed applications to a unified, data-first governance model that mitigates risk through radical simplification.

In this conversation, we explore the critical transition points where simple tools become systemic liabilities, the psychological and financial hurdles that prevent organizations from pursuing elegant design, and the technical strategies required to prune a database schema from a million attributes down to its essential core.

How do you distinguish between a simplistic solution and an elegant one? When a system is underperforming, what specific indicators or metrics help determine if the design is merely oversimplified or truly refined? Please elaborate with a step-by-step breakdown of your evaluation process.

The distinction lies in which side of complexity the solution resides; a simplistic design is “on this side” of complexity, born from a superficial understanding of requirements, while elegance is found on the “far side,” having integrated diverse challenges into a unified, effective form. When evaluating a system, I first look at the “hack-to-feature” ratio—if every new requirement necessitates a workaround that circumvents the core architecture, you are dealing with a simplistic starting point that has been overwhelmed. My step-by-step process begins with a “categorical audit” where I check if a tool was bought for a label, like “inventory,” rather than its actual utility, followed by a cyclomatic complexity mapping to see how many logic branches exist. Finally, I measure the effort required for a standard change; in an elegant system, a modification might cost $10,000, whereas in a simplistic-turned-complex system, that same change can balloon to $100,000 due to brittle code. It is an emotional realization for many teams when they see that their “simple” fix is actually a mounting debt of 40-button remotes that no one knows how to operate.
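As a rough illustration of the cyclomatic complexity mapping mentioned above, the sketch below counts decision points in Python source with the standard `ast` module. It is an approximation under stated assumptions, not the interviewee's tooling: the set of node types counted as branches and the `hack_to_feature_ratio` helper (including how a "hack" is tallied) are hypothetical choices made for this example.

```python
import ast

# Node types treated as decision points. This selection is an assumption;
# real complexity tools differ on the details.
BRANCH_NODES = (
    ast.If, ast.For, ast.While, ast.IfExp,
    ast.ExceptHandler, ast.comprehension,
)


def cyclomatic_complexity(source: str) -> int:
    """Approximate McCabe complexity as 1 + the number of decision points."""
    count = 1
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, BRANCH_NODES):
            count += 1
        elif isinstance(node, ast.BoolOp):
            # `a and b and c` adds one extra path per additional operand.
            count += len(node.values) - 1
    return count


def hack_to_feature_ratio(workarounds: int, features: int) -> float:
    """Hypothetical metric: workarounds shipped per feature delivered."""
    if features <= 0:
        raise ValueError("features must be positive")
    return workarounds / features


sample = (
    "def f(x):\n"
    "    if x > 0 and x < 10:\n"
    "        return 1\n"
    "    for i in range(x):\n"
    "        pass\n"
    "    return 0\n"
)
print(cyclomatic_complexity(sample))   # one `if`, one `and`, one `for`, plus 1
print(hack_to_feature_ratio(3, 12))
```

Tracking these two numbers release over release is one way to see whether new requirements are being absorbed by the core design or bolted on around it.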

Large-scale spreadsheets often reach a breaking point where errors become systemic and dangerous. Given that a vast majority of enterprise sheets contain serious mistakes, what is the best process for auditing these tools and deciding when to migrate to a formal system? Please include a relevant anecdote.

The auditing process must start with a cold acceptance of the statistics: 88% of enterprise spreadsheets contain errors, and up to 30% of those are “serious,” meaning they actively misguide business decisions. To audit these sheets, you must trace the “Darwinian evolution” of the file: if a sheet created for one analyst’s desk is now being used to run a multimillion-dollar department, it has reached its breaking point. I once encountered an architectural firm where a single Excel cell contained a formula that filled an entire printed page, featuring hundreds of nested conditionals. The analyst was literally penciling in parentheses on a piece of paper to try to debug it, which is the ultimate red flag that your “tool” has become a liability. When the cost and risk of making a single change become orders of magnitude higher than the original build, you must migrate to a data-centric system that treats logic and data as distinct, governed entities rather than a tangled web of hard-coded constants.
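The parenthesis counting in that anecdote can be automated. The sketch below is a minimal example, assuming formulas have already been extracted from the workbook as plain strings; the function names and the `max_depth` threshold of 3 are hypothetical choices for illustration, not an established standard.

```python
def max_nesting_depth(formula: str) -> int:
    """Return the deepest parenthesis nesting in a spreadsheet formula.

    Parentheses inside double-quoted string literals are ignored.
    Raises ValueError if the parentheses do not balance.
    """
    depth = 0
    deepest = 0
    in_string = False
    for ch in formula:
        if ch == '"':
            # An escaped quote ("") toggles twice, so the state stays correct.
            in_string = not in_string
        elif in_string:
            continue
        elif ch == "(":
            depth += 1
            deepest = max(deepest, depth)
        elif ch == ")":
            depth -= 1
            if depth < 0:
                raise ValueError("unbalanced formula: extra ')'")
    if depth != 0:
        raise ValueError("unbalanced formula: missing ')'")
    return deepest


def needs_review(formula: str, max_depth: int = 3) -> bool:
    """Flag formulas nested deeper than an (assumed) review threshold."""
    return max_nesting_depth(formula) > max_depth


formula = '=IF(A1>0,IF(B1>0,"x(","y"),SUM(C1:C3))'
print(max_nesting_depth(formula))
print(needs_review(formula))
```

Running a check like this across every formula cell gives a quick ranking of which sheets have drifted furthest from a tool one analyst can still reason about.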

Leaders often face the choice between costly incremental patches and a total system overhaul. How do the long-term financial trade-offs compare between these two paths, and what are the specific risks of choosing the “incremental” route?

The financial trade-off is often a choice between a visible, immediate cost and a hidden, compounding tax that eventually bankrupts operational agility. Incremental patches are chosen because the “burn it all down” approach is viewed as too risky and expensive, yet this path leads to a “complexity embrace” where systems become 1,000 times more complex than an elegant replacement. The primary risk of the incremental route is that it creates a system of systems where any small change can have catastrophic downstream effects, requiring thousands of lines of code to support a single new data attribute. Over time, the organization pays for an overhaul ten times over through maintenance and “integration spaghetti” without ever actually receiving the benefits of a modern architecture. This creates a legacy environment where the sheer weight of the technical debt makes the enterprise a sitting duck for more agile competitors who aren’t tethered to 1990s-era patches.

In many organizations, individuals build careers by mastering highly complex, proprietary subsystems. How does this specialized knowledge create resistance to simplification, and what strategies can shift a corporate culture toward valuing transparency over “gatekept” technical expertise? Please share your thoughts and any observed metrics.

Mastering complexity provides a sense of job security—it’s a “superpower” that allows an expert to navigate a labyrinth no one else understands, such as the intricacies of an SAP product classification subsystem. This creates a natural resistance to simplification because if the system becomes transparent and elegant, the “gatekeeper’s” unique value proposition vanishes, leveling the playing field for others. To shift this culture, leadership must reward “complexity reduction” as a primary KPI, moving away from honoring those who can fix the mess to honoring those who prevent it. We often see that when an organization adopts a data-centric model, they can reduce their managed attributes from one million to just one thousand, which fundamentally changes the role of the architect from a “firefighter” to a “designer.” Transparency must be framed not as a threat to expertise, but as a liberation from the mundane task of managing brittle, inscrutable code.

Modern enterprises often manage a million data attributes when a thousand might actually suffice. What are the primary technical hurdles in reducing a database schema to its essential elements, and how does this reduction impact the overall volume of code and maintenance costs?

The primary technical hurdle is that our current systems are application-centric, meaning every new piece of software brings its own unique, redundant schema that must be integrated via complex APIs and ETL processes. To reduce a million attributes to a thousand, you have to strip away the “synthetic complexity” created by different vendors using different names for the same concepts, like “customer” versus “client.” This reduction has a massive impact on the codebase because the volume of code is driven by the complexity of the schema; adding one column can trigger the need for thousands of lines of validation, UI, and testing code. By dropping the schema complexity by half, you effectively cut the maintenance costs and bug potential in half, as there is less “shuffling” of data between disparate layers. Achieving a three-order-of-magnitude reduction in attributes doesn’t just make the system faster; it makes it humanly understandable, which is the only way to maintain agency over an enterprise’s information landscape.
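To make the “customer” versus “client” point concrete, here is a minimal sketch of collapsing vendor-specific attribute names onto a shared vocabulary. Everything in it is an assumption for illustration: the `SYNONYMS` table, the system names, and the attribute names are invented, and a real consolidation would need a curated mapping rather than a small dictionary.

```python
from collections import defaultdict

# Hypothetical synonym table: vendor-specific name -> canonical name.
SYNONYMS = {
    "client_id": "customer_id",
    "client_name": "customer_name",
    "cust_nm": "customer_name",
}


def canonicalize(name: str) -> str:
    """Normalize an attribute name and map known synonyms to one term."""
    key = name.strip().lower()
    return SYNONYMS.get(key, key)


def consolidate(schemas: dict[str, list[str]]) -> dict[str, list[str]]:
    """Group 'system.attribute' sources under each canonical attribute."""
    merged: dict[str, list[str]] = defaultdict(list)
    for system, attributes in schemas.items():
        for attribute in attributes:
            merged[canonicalize(attribute)].append(f"{system}.{attribute}")
    return dict(merged)


# Invented example schemas from two application-centric systems.
schemas = {
    "crm": ["customer_id", "customer_name"],
    "billing": ["client_id", "client_name", "invoice_total"],
}
core = consolidate(schemas)
total = sum(len(attrs) for attrs in schemas.values())
print(f"{total} application attributes -> {len(core)} canonical attributes")
for name, sources in core.items():
    print(name, "<-", sources)
```

The same grouping also shows which canonical attributes are duplicated across the most systems, which is a reasonable place to start pruning.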

What is your forecast for the data-centric revolution?

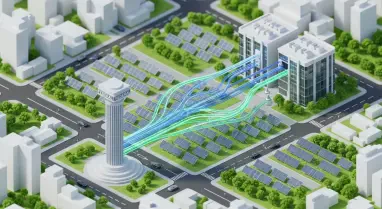

I believe we are approaching a tipping point where the sheer weight of “application-centric” debt will force a radical shift toward data-centricity, simply because the current model is no longer sustainable for human—or even AI—management. In the coming years, we will see a move away from buying monolithic “solutions” that come with their own pre-packaged schemas, and instead, enterprises will focus on building a singular, elegant data core that multiple “disposable” applications can plug into. This will lead to a 90% reduction in the direct labor associated with system integration, much like Henry Ford’s assembly line revolutionized automotive production by looking at the process through a different lens. If we fail to embrace this elegance, we risk abdicating our control to inscrutable systems that not even their creators can explain, so the revolution isn’t just a technical preference—it is a necessity for maintaining organizational intelligence.