Vernon Yai is a distinguished authority on data protection and regulatory strategy, known for his deep expertise in navigating the complex intersection of privacy law and emerging technologies. With a career dedicated to developing robust risk management frameworks, he has become a pivotal voice for organizations seeking to balance aggressive innovation with ethical data governance. As the landscape of artificial intelligence shifts toward a more centralized federal oversight model following the White House’s 2026 policy framework, Yai’s insights offer a crucial roadmap for understanding how these new mandates will reshape the digital economy.

The following discussion explores the strategic implications of a unified national AI policy, focusing on the tension between federal preemption and state-level protections. It covers the evolving role of the judiciary in settling copyright disputes for model training, the logistical hurdles of accelerating infrastructure development, and the technical challenges of integrating rigorous child privacy safeguards. Furthermore, the conversation delves into the legal risks of AI-driven workforce changes and provides a high-level outlook on how these regulatory shifts will define American technological leadership in the coming years.

A national framework aims to preempt conflicting state AI laws while preserving local zoning and consumer protection powers. How should companies navigate these overlapping jurisdictions, and what operational challenges arise when shifting from a patchwork of state rules to a unified federal standard? Please share specific examples.

Navigating this transition requires a dual-track strategy in which companies align with federal standards while keeping a granular focus on local compliance. The 2026 framework essentially serves as a “wish list” for Congress, an attempt to end the fragmented, state-by-state approach we’ve seen, which has grown so contentious that a political action committee recently spent millions opposing local candidates specifically over AI oversight issues. Operationally, the shift from a patchwork of state rules to a federal baseline is intended to reduce the burden on innovation, yet developers still have to respect a state’s traditional police powers. For instance, while federal law might govern overarching model safety, a company still has to work with local zoning authorities when deciding where to place physical AI infrastructure like data centers. A firm might therefore find its technical operations streamlined at the national level, only to face a complete standstill if it hasn’t accounted for a specific state’s mandate to protect seniors or prevent fraud. It is a delicate balance: scaling rapidly while remaining rooted in the consumer protection expectations of each jurisdiction.

With the judiciary tasked with resolving copyright infringement and model training disputes, what benchmarks should legal teams use to assess intellectual property risk? How do court-led settlements influence your long-term investment in proprietary data versus public datasets? Please detail the step-by-step process for evaluating these risks.

Legal teams must pivot from a purely defensive posture to one of active judicial monitoring, because the administration has clearly designated the courts as the lead on copyright issues. The first step in evaluating these risks is to audit all training data and determine the share of copyrighted material relative to public or licensed datasets, especially since court settlements will now provide the primary guidance on permissible use. Organizations should then establish a “settlement barometer” that tracks ongoing litigation and trials, using the outcomes to adjust their risk tolerance for different types of model training. This environment makes proprietary data far more valuable, because it offers a “safe harbor” from court-led IP rulings that are currently in flux and hard to predict. By formalizing a process that weighs the cost of potential litigation against the performance gains of specific datasets, firms can make better-informed decisions about whether to invest in original content or accept the risk of legal fallout from scraping public sources. Ultimately, long-term investment strategy will likely favor high-quality, licensed data as the legal costs of defending model training on unverified public data begin to mount.
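The audit and cost-weighing steps in this answer reduce to simple arithmetic. The sketch below is a minimal illustration, not a legal benchmark: it assumes each dataset has already been tagged with a provenance label and given rough estimates of litigation exposure and performance gain. The labels, the hypothetical `Dataset` fields, the `keep_dataset` rule (expected cost taken as the probability of an adverse ruling times exposure), and the `risk_tolerance` knob standing in for the “settlement barometer” are all illustrative assumptions, and the usage figures are placeholders.

```python
from collections import Counter
from dataclasses import dataclass

# Hypothetical provenance taxonomy; a real audit would use the organization's own labels.
PROVENANCE_LABELS = ("copyrighted", "licensed", "public", "proprietary")


@dataclass
class Dataset:
    name: str
    provenance: str          # one of PROVENANCE_LABELS
    token_count: int         # dataset size, used to weight the audit ratios
    p_adverse_ruling: float  # assumed probability (0-1) that courts rule against this use
    exposure: float          # assumed cost if they do (damages plus defense)
    performance_gain: float  # assumed value of the dataset's contribution, same units as exposure


def provenance_ratios(datasets):
    """Step 1: share of training tokens under each provenance label."""
    totals = Counter()
    for ds in datasets:
        if ds.provenance not in PROVENANCE_LABELS:
            raise ValueError(f"unknown provenance label: {ds.provenance!r}")
        totals[ds.provenance] += ds.token_count
    grand_total = sum(totals.values())
    if grand_total == 0:
        raise ValueError("audit requires at least one non-empty dataset")
    return {label: totals[label] / grand_total for label in PROVENANCE_LABELS}


def expected_litigation_cost(ds):
    """Risk-weighted cost: probability of an adverse outcome times exposure."""
    return ds.p_adverse_ruling * ds.exposure


def keep_dataset(ds, risk_tolerance=1.0):
    """Keep a dataset only if its gain, scaled by risk tolerance, covers its expected cost.

    Lowering risk_tolerance below 1.0 makes the test stricter; this is the
    knob a "settlement barometer" would adjust as court outcomes come in.
    """
    return ds.performance_gain * risk_tolerance >= expected_litigation_cost(ds)


if __name__ == "__main__":
    # Illustrative placeholder figures only.
    corpus = [
        Dataset("news_scrape", "copyrighted", 600, 0.3, 1_000_000, 200_000),
        Dataset("licensed_books", "licensed", 300, 0.0, 0, 150_000),
        Dataset("internal_docs", "proprietary", 100, 0.0, 0, 50_000),
    ]
    print(provenance_ratios(corpus))
    # {'copyrighted': 0.6, 'licensed': 0.3, 'public': 0.0, 'proprietary': 0.1}
    print([ds.name for ds in corpus if keep_dataset(ds)])
    # ['licensed_books', 'internal_docs']
```

Keeping the expected-cost rule in one small function means the threshold can be revisited as each settlement lands, without re-running the provenance audit itself.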

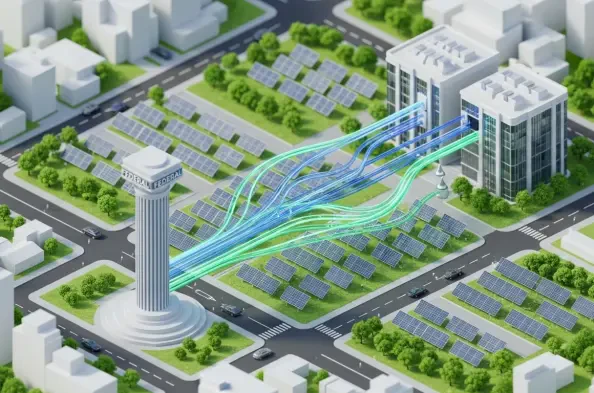

Streamlining federal permitting is intended to accelerate the rollout of physical AI infrastructure. What environmental or logistical trade-offs might occur during this rapid expansion, and what practical steps can developers take to ensure projects remain compliant with local zoning authorities? Please elaborate with relevant anecdotes or metrics.

The push to streamline federal permitting is a double-edged sword: it creates a high-speed lane for infrastructure but can crash into local environmental and logistical sensitivities. While the federal framework seeks to unleash the full potential of AI by cutting red tape, it explicitly leaves zoning authority over the placement of hardware to the states. A project might therefore receive a federal green light in record time, only to be blocked by local ordinances designed to manage noise, power consumption, or land use. To mitigate this, developers should engage in “pre-emptive zoning alignment”: conducting environmental impact assessments that satisfy accelerated federal timelines and local sustainability metrics at the same time. We are seeing significant tension between the need for rapid expansion and the desire to protect the local character of communities, so it is essential for developers to present their infrastructure as a local utility rather than merely a remote processing hub. Practical success here is measured by the speed of the “last mile” of approval, which depends on local authorities not viewing the streamlined federal process as an infringement on their community’s safety and aesthetics.

Proposed policies aim to extend child privacy protections to AI systems and limit targeted advertising. How can platforms technically implement these safeguards without compromising the user experience, and what metrics are most effective for measuring the success of these privacy-first initiatives? Please provide a detailed explanation.

Implementing these safeguards requires a “privacy-by-design” architecture that can distinguish between general users and protected groups, such as children and senior citizens, without adding friction to the interface. Under the proposed Trump America AI Act, the technical challenge lies in limiting data collection and targeted advertising within AI chatbots and companion services while preserving the system’s personalization. Platforms can achieve this by using on-device processing for sensitive demographics, so that data used for AI interaction never reaches a central server for ad-targeting purposes. Success is measured not just by the absence of data leaks but also by “consent conversion rates” and “ad-relevance scores” that show a platform can still provide value without invasive tracking. By building these protections directly into the AI’s core logic, companies can fulfill the mandate of protecting American families while avoiding the “overly burdensome” regulatory traps that often stifle smaller tech players. The goal is an AI that is smart enough to be helpful without being intrusive, effectively a firewall between the user’s personal identity and the model’s predictive capabilities.
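One way to picture the on-device routing described in this answer is a small gating function. This is a hedged sketch under several assumptions: the user's age band is already known from some separate age-assurance step, seniors are treated as a protected group only because the answer names them alongside children, and the names `AgeBand`, `route_interaction`, and `consent_conversion_rate` are hypothetical rather than drawn from any statute or platform API. It shows protected users staying on-device and out of the ad pipeline, plus the consent-conversion metric; ad-relevance scoring is not modeled.

```python
from dataclasses import dataclass
from enum import Enum


class AgeBand(Enum):
    MINOR = "minor"
    ADULT = "adult"
    SENIOR = "senior"


# Hypothetical policy choice: which age bands are treated as protected groups.
PROTECTED_BANDS = frozenset({AgeBand.MINOR, AgeBand.SENIOR})


@dataclass(frozen=True)
class Interaction:
    user_id: str
    age_band: AgeBand  # assumed to come from a separate age-assurance step
    ad_consent: bool   # explicit opt-in to ad personalization


def route_interaction(interaction):
    """Decide where an interaction is processed and whether it may feed ad targeting.

    Protected users are processed on-device and never forwarded for ad
    targeting, regardless of consent; other users reach the ad pipeline
    only with an explicit opt-in.
    """
    if interaction.age_band in PROTECTED_BANDS:
        return {"processing": "on_device", "ad_targeting": False}
    return {"processing": "server", "ad_targeting": interaction.ad_consent}


def consent_conversion_rate(prompts_shown, opt_ins):
    """Share of users shown a consent prompt who chose to opt in."""
    if prompts_shown == 0:
        return 0.0
    return opt_ins / prompts_shown


if __name__ == "__main__":
    child = Interaction("u1", AgeBand.MINOR, ad_consent=True)
    print(route_interaction(child))
    # {'processing': 'on_device', 'ad_targeting': False}
    print(consent_conversion_rate(prompts_shown=200, opt_ins=50))
    # 0.25
```

The child's `ad_consent=True` is deliberately ignored: in this sketch, protected status overrides consent, which matches the idea of a firewall between identity and ad targeting rather than relying on opt-in alone.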

There are moves to require public reporting on AI-driven layoffs and to allow private lawsuits against developers for system-related harms. How should organizations structure their internal audits to mitigate these legal risks, and what strategies effectively manage the workforce transitions being tracked? Please describe the necessary procedures.

To manage these emerging legal risks, organizations must institutionalize a rigorous internal audit procedure that tracks every AI deployment from inception through its impact on payroll. This starts with a “Workforce Impact Statement” for each new AI system, documenting exactly which roles are being supplemented or replaced, which directly addresses the reporting requirements in the draft Trump America AI Act. Organizations should also prepare for private lawsuits over system-related harms by establishing a “Harm Mitigation Fund” and a dedicated response team to address platform failures in real time. Strategically, workforce transitions should be managed through “reskilling pipelines” that are transparently reported to federal agencies, demonstrating a proactive rather than reactive approach to social responsibility. When AI-driven layoffs become a public reporting metric, companies are forced to be more disciplined in their deployment strategies, ensuring that the efficiency gains of AI do not turn into a long-term liability in the form of litigation or reputational damage.

What is your forecast for the American AI landscape?

I anticipate a period of intense consolidation in which the winners are those who can navigate the “little something for everyone” approach of the current national framework while anticipating even stricter federal requirements. The U.S. will likely cement its position as a global leader by focusing on massive infrastructure projects and high-performance computing, but this will be tempered by a wave of judicial rulings that will redefine how we view digital ownership. We are moving toward a reality where “AI compliance” is no longer a niche legal department function but a core business metric that determines a company’s ability to attract investment and stay out of the crosshairs of both federal and state attorneys general. Ultimately, if Congress acts on this framework with bipartisan support, we will see a landscape that is far more predictable for businesses but significantly more scrutinized by the public, creating a high-stakes environment where safety and innovation must coexist to survive.