The transition from classical bit-based logic to the probabilistic logic of quantum processing has moved beyond theoretical physics into a complex era defined by massive infrastructure investments and competing hardware modalities. While popular discourse often simplifies quantum computing into a singular looming breakthrough, the industry currently functions as a fragmented ecosystem where various physical implementations of the qubit compete for dominance. This technological race is not merely about achieving higher speeds but about controlling individual quantum systems, such as atoms, ions, photons, and superconducting circuits, precisely enough to perform calculations that are intractable for classical machines. At its core, a quantum computer uses superposition, entanglement, and interference to navigate a computational landscape that classical binary systems cannot efficiently replicate, even with the aid of the most powerful supercomputers currently in operation.

Modern quantum hardware strategies are predominantly characterized by two critical dimensions: the pursuit of system reliability and the selection of a viable physical architecture. The industry is presently navigating the “Noisy Intermediate-Scale Quantum” (NISQ) era, a period where systems are powerful enough to demonstrate specialized computational capabilities but remain highly susceptible to environmental interference. The ultimate objective is to bridge the gap to “Fault-Tolerant Quantum Computing” (FTQC), a stage where errors can be detected and corrected in real time, faster than they accumulate, allowing for prolonged and complex operations. This journey involves a high-stakes competition between diverse material foundations, ranging from man-made superconducting circuits and trapped ions to neutral atoms and particles of light, each offering a unique set of advantages and engineering hurdles that must be overcome to reach commercial maturity.

The fundamental unit of this technology, the qubit, differs from a classical bit by its ability to exist in a state of superposition. To visualize this, industry experts often employ the analogy of a spinning coin; while a classical bit is either heads or tails, a qubit represents the coin while it is still in motion, carrying a weighted combination of both outcomes. The analogy is loose: a register of n qubits is described by 2^n complex probability amplitudes, yet a measurement returns only n classical bits, so the amplitudes cannot simply be read out as stored data. The true utility of the qubit therefore emerges through entanglement and interference. Entanglement correlates qubits so that the state of one cannot be described independently of the others, regardless of their physical distance, allowing the entire processor to behave as a single, cohesive computational unit. Meanwhile, quantum interference is used to manipulate probability amplitudes, amplifying the amplitudes of correct answers while cancelling those of incorrect ones, so that the system converges on the desired solution with high probability.
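The three effects described above can be reproduced with a few lines of state-vector arithmetic. The following is a minimal sketch in plain Python, not a model of any vendor's hardware; the function names and the bit ordering (index = 2*q1 + q0) are choices made for this illustration only.

```python
import math

H = 1 / math.sqrt(2)  # normalisation factor of the Hadamard gate

def hadamard_1q(state):
    """Apply a Hadamard gate to a single-qubit state [a0, a1]."""
    a0, a1 = state
    return [H * (a0 + a1), H * (a0 - a1)]

def bell_state():
    """Prepare (|00> + |11>)/sqrt(2): Hadamard on qubit 0, then CNOT.

    Amplitudes are listed in the order |00>, |01>, |10>, |11>,
    where the right-hand bit is qubit 0.
    """
    # Hadamard on qubit 0 of |00> gives (|00> + |01>)/sqrt(2).
    state = [H, H, 0.0, 0.0]
    # CNOT (control qubit 0, target qubit 1) exchanges |01> and |11>.
    state[1], state[3] = state[3], state[1]
    return state

def probabilities(state):
    """Born rule: measurement probability is the squared amplitude magnitude."""
    return [abs(a) ** 2 for a in state]

# Superposition: one Hadamard gives a 50/50 outcome distribution.
plus = hadamard_1q([1.0, 0.0])
print("superposition:", probabilities(plus))

# Interference: a second Hadamard cancels the |1> amplitude entirely.
print("interference: ", probabilities(hadamard_1q(plus)))

# Entanglement: only the correlated outcomes 00 and 11 ever occur.
print("entanglement: ", probabilities(bell_state()))
```

Note that the second Hadamard returns the qubit to a definite outcome only because the two paths leading to |1> carry opposite signs and cancel; a classical coin flipped twice would stay random.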

Navigating the Spectrum of System Reliability

Part 1: The Challenges of the NISQ Era

The primary obstacle hindering the immediate widespread adoption of quantum systems is the extreme fragility of quantum states, a phenomenon that continues to plague even the most advanced laboratories. Qubits are exceptionally sensitive to their surroundings, reacting to the slightest thermal fluctuations, electromagnetic interference, or even the microscopic imperfections within the materials used to construct the processor itself. This sensitivity leads to decoherence, a process where the qubit loses its quantum properties and prematurely reverts to a classical state, effectively ending the computation before a result can be reached. In our current NISQ era, while manufacturers have succeeded in scaling systems to include hundreds of qubits, the high rate of decoherence means that these devices can only run relatively short programs before the data becomes corrupted by environmental noise.

As a quantum algorithm executes, these errors do not remain isolated; they accumulate and propagate through the circuit, eventually making the final output indistinguishable from random data. Leading developers like IBM and Google have introduced processors featuring hundreds or even thousands of physical qubits, yet these figures can be highly deceptive for those unfamiliar with the underlying physics. A high qubit count does not necessarily translate to superior performance if the gate fidelity—the accuracy of the operations—remains below the threshold required for sustained calculation. While niche milestones such as “quantum supremacy” have demonstrated that NISQ devices can outperform classical machines in highly specific, artificial tasks, the transition to broad commercial utility is still restricted by this inherent noise, forcing researchers to focus on specialized “noise-aware” algorithms that can deliver value despite the hardware’s limitations.
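A rough way to see why qubit counts alone can mislead is to treat every gate as an independent event that succeeds with probability equal to its fidelity. This is a deliberately crude assumption (real errors are correlated and depend on the gate type), and the function names below are invented for this sketch.

```python
import math

def circuit_success(fidelity, n_gates):
    """Estimated probability that no gate fails, assuming independent errors."""
    return fidelity ** n_gates

def max_gates(fidelity, target_success):
    """Largest gate count whose estimated success stays at or above the target."""
    if not (0.0 < fidelity < 1.0 and 0.0 < target_success < 1.0):
        raise ValueError("fidelity and target_success must lie strictly between 0 and 1")
    return math.floor(math.log(target_success) / math.log(fidelity))

for f in (0.99, 0.999, 0.9999):
    print(f"fidelity {f}: about {max_gates(f, 0.5)} gates before success drops below 50%")
```

Under this model each extra "nine" of gate fidelity buys roughly ten times more circuit depth, which is why fidelity improvements can matter more than adding qubits.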

The volatility of the NISQ environment has also led to a significant focus on benchmarking and transparency within the hardware community. It is no longer enough to simply claim a certain number of qubits; the industry has shifted toward metrics like Quantum Volume or Algorithmic Qubits, which account for both scale and error rates. These benchmarks provide a more realistic picture of a system’s capability, highlighting the fact that a perfectly functioning 50-qubit machine can often outperform a poorly calibrated 1,000-qubit system. This realization has driven a more disciplined approach to hardware development, where the emphasis is placed on reducing the “error per gate” through improved material science and more precise control electronics, ensuring that the qubits we do have are as stable and functional as possible before further scaling is attempted.

Part 2: The Engineering Path to Fault Tolerance

The industry’s ultimate objective remains the achievement of fault-tolerant quantum computing, a state where a system can identify and rectify its own internal errors during the calculation process. Reaching this milestone would allow deep, complex algorithms to run for days or even weeks without failure, unlocking the full potential of quantum mechanics for drug discovery, material science, and cryptography. However, the engineering requirements for fault tolerance are staggering. To create a single “logical qubit,” an encoded qubit whose error rate is far lower than that of the physical qubits it is built from, a hardware platform may require hundreds or even thousands of physical qubits. This redundancy ensures that if a few physical qubits suffer errors, the encoded information remains intact within the larger ensemble, because the quantum error correction code spreads it across many qubits and lets the errors be detected and undone.
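The benefit of redundancy can be illustrated with the simplest possible code, a repetition code decoded by majority vote. This is a classical analogy offered as a sketch: real quantum codes must also handle phase errors and detect faults through syndrome measurements rather than by reading the data directly. The function name is hypothetical.

```python
from math import comb

def logical_error_rate(p, n):
    """Probability that a majority of n copies flip, each independently with probability p."""
    if n % 2 == 0:
        raise ValueError("use an odd number of copies so the vote cannot tie")
    majority = n // 2 + 1
    return sum(comb(n, k) * p**k * (1 - p) ** (n - k) for k in range(majority, n + 1))

p = 0.01  # assumed physical error rate, chosen only for illustration
for n in (1, 3, 5, 7):
    print(f"{n} copies: logical error rate {logical_error_rate(p, n):.2e}")
```

With three copies the encoded bit fails only when two or more copies flip, so the error rate falls from p to roughly 3p², and each further pair of copies suppresses it again.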

Beyond the sheer scale of hardware required for redundancy, the system must also operate below a critical “error threshold,” the physical error rate under which the correction process removes more noise than it introduces. This is a delicate balancing act; if the intrinsic error rate of the hardware sits above that threshold, every additional layer of redundancy adds more faulty components than it can compensate for, and the encoded information degrades faster rather than slower. Furthermore, there is a massive “classical overhead” associated with managing a fault-tolerant processor. The decoding of quantum errors must keep pace with the rate at which the hardware produces error information, requiring a high-performance classical computing infrastructure to sit alongside the quantum core, monitoring its health and applying corrections in real time. Bridging the gap between the currently noisy hardware and this vision of reliable, self-correcting computation represents the most significant technical challenge for the remainder of this decade.
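The threshold behaviour can be sketched with a widely quoted rule of thumb for surface codes, in which the logical error rate scales as prefactor × (p / p_th)^((d+1)/2) for code distance d. The threshold of 1%, the prefactor of 0.1, and the qubit count of 2d² − 1 per logical qubit are illustrative assumptions here, not measured figures for any particular device.

```python
def logical_error_rate(p, d, p_th=0.01, prefactor=0.1):
    """Heuristic logical error rate for code distance d (assumed scaling law)."""
    return prefactor * (p / p_th) ** ((d + 1) / 2)

def physical_qubits(d):
    """Approximate physical qubits per logical qubit: d*d data plus d*d - 1 ancillas."""
    return 2 * d * d - 1

def distance_needed(p, target, p_th=0.01, prefactor=0.1, max_d=1001):
    """Smallest odd distance whose heuristic logical error rate meets the target."""
    if p >= p_th:
        raise ValueError("above threshold: adding redundancy makes things worse")
    for d in range(3, max_d + 1, 2):
        if logical_error_rate(p, d, p_th, prefactor) <= target:
            return d
    raise ValueError("target not reachable within max_d")

# Below threshold, a larger code helps; above it, a larger code hurts.
print("p = 0.1%:", logical_error_rate(0.001, 3), "->", logical_error_rate(0.001, 5))
print("p = 2.0%:", logical_error_rate(0.02, 3), "->", logical_error_rate(0.02, 5))

d = distance_needed(0.002, 1e-12)
print(f"distance {d} needs about {physical_qubits(d)} physical qubits per logical qubit")
```

Under these assumed constants, a physical error rate of 0.2% and a target of one logical error per trillion operations lead to a distance of 31, or roughly 1,900 physical qubits per logical qubit, which is consistent with the "hundreds or even thousands" range cited in the text.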

As this transition progresses, the focus is increasingly shifting toward the development of specialized “middleware” and error-suppression techniques that can bridge the gap between NISQ and FTQC. These techniques involve using classical software to post-process quantum results or employing “dynamical decoupling” to shield qubits from noise through rapid, repetitive pulses. By squeezing every bit of performance out of current hardware, researchers are effectively practicing the error-correction protocols that will eventually become standard in fully fault-tolerant systems. This evolutionary approach allows the industry to move forward incrementally, proving out the theories of error correction on a small scale before attempting to build the massive, multi-thousand-qubit arrays that will be necessary for a truly reliable quantum computer.
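The idea behind dynamical decoupling can be shown with its simplest form, a single "echo" pulse applied halfway through an idle period. The sketch below assumes each run of the experiment sees a random but constant frequency offset (quasi-static noise); real noise also fluctuates during a run, so practical sequences use many pulses and recover coherence only partially. All names and parameter values are illustrative.

```python
import cmath
import random

def sample_detunings(n_shots, sigma, seed=0):
    """Draw one constant frequency offset per shot from a Gaussian distribution."""
    rng = random.Random(seed)
    return [rng.gauss(0.0, sigma) for _ in range(n_shots)]

def coherence(detunings, total_time, echo=False):
    """Magnitude of the shot-averaged phase factor; 1 is fully coherent, 0 fully dephased."""
    total = 0j
    for delta in detunings:
        if echo:
            # The pi pulse at the midpoint reverses the sign of the phase
            # accumulated so far, so the second half undoes the first.
            phase = delta * (total_time / 2) - delta * (total_time / 2)
        else:
            phase = delta * total_time
        total += cmath.exp(1j * phase)
    return abs(total) / len(detunings)

shots = sample_detunings(n_shots=2000, sigma=1.0)
print("free evolution:", round(coherence(shots, total_time=5.0), 3))
print("with echo:     ", round(coherence(shots, total_time=5.0, echo=True), 3))
```

Without the pulse, the differing offsets scramble the phase across runs and the averaged signal collapses; with it, each run's phase error cancels regardless of the offset's size.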

Diverse Physical Architectures and Modalities

Architecture 1: Superconducting Circuits and Trapped Ions

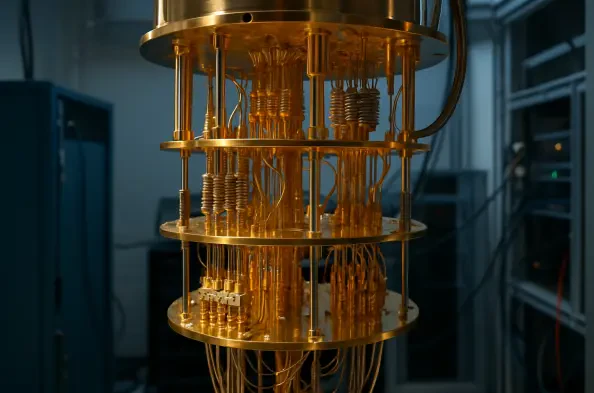

Superconducting circuits currently represent the most mature and widely adopted hardware architecture, championed by established technology leaders like IBM and Google. These systems utilize tiny electrical circuits fabricated from superconducting materials that, when cooled to temperatures near absolute zero, exhibit the collective quantum behavior necessary to form qubits. The primary advantage of this modality is its reliance on existing semiconductor manufacturing techniques, which allows for relatively rapid prototyping and scaling. Moreover, superconducting qubits offer very fast gate operation speeds, enabling them to perform many calculations within their limited coherence windows. However, these systems face a significant “plumbing” challenge; they require massive dilution refrigerators to maintain their operating temperature, and the sheer volume of coaxial wiring needed to control thousands of individual qubits inside a freezer creates a daunting logistical hurdle.

In sharp contrast to the man-made nature of superconducting circuits, trapped ion systems utilize individual charged atoms as their fundamental building blocks. Companies like IonQ and Quantinuum suspend these ions in a vacuum using precisely controlled electromagnetic fields and manipulate them with precisely tuned lasers. Because these are “nature’s qubits,” every ion of a given isotope is perfectly identical, eliminating the manufacturing defects and variability that can plague superconducting chips. Trapped ions are renowned for having some of the longest coherence times in the industry, meaning they can stay in a quantum state for much longer than their superconducting counterparts. Despite this stability, they suffer from significantly slower operation speeds and face extreme difficulties in scaling the electromagnetic traps to hold the thousands of ions required for complex, modern applications.

The competition between these two modalities has created a productive tension that drives innovation across the entire sector. Superconducting developers are working feverishly to integrate control electronics directly into the cryogenic environment to reduce the wiring bottleneck, while trapped-ion researchers are exploring modular architectures where small traps are interconnected via photonic links. This modularity could eventually allow ion systems to overcome their scaling limitations by creating a network of smaller processors that work together as a single unit. Meanwhile, the fast gate speeds of superconductors continue to make them the preferred choice for applications requiring rapid-fire calculations, even if they currently require more frequent error correction. The divergence in these two paths highlights a fundamental trade-off in quantum hardware: the choice between the speed of man-made circuits and the pristine stability of natural atoms.

Architecture 2: Photonic Systems and Neutral Atoms

Photonic quantum computing takes a fundamentally different approach by using particles of light as qubits, moving them through complex optical circuits consisting of beam splitters, mirrors, and phase shifters. Companies like PsiQuantum and Xanadu are pursuing this path because photons interact only weakly with their environment and are therefore naturally resistant to much of the noise that affects other modalities. Unlike superconducting qubits, photons do not need millikelvin temperatures to preserve their quantum state, although the most sensitive single-photon detectors are typically cooled to a few kelvin; this potentially allows for a more compact and energy-efficient hardware footprint. Additionally, because photons are the primary medium for modern telecommunications, photonic quantum computers are natively compatible with fiber-optic networks, making them a natural foundation for a future “quantum internet” where sensitive data is transmitted with quantum-level security across vast distances.

Despite these advantages, photonic hardware faces a unique set of challenges, primarily centered on the fact that photons do not easily interact with one another. Since quantum logic gates require qubits to influence each other’s states, developers must use complex “measurement-based” approaches or probabilistic methods to facilitate these interactions. This often results in a high resource overhead, where a massive number of auxiliary photons and components are needed just to perform a single reliable gate operation. Nevertheless, the ability to manufacture these optical components using standard silicon photonics found in the telecommunications industry offers a clear path toward mass production. As the technology matures, the focus is on improving the efficiency of single-photon sources and detectors, which are critical for reducing the loss of information as light travels through the system.

Neutral atom technology has emerged as a powerful middle ground, utilizing “optical tweezers”—focused beams of laser light—to hold and manipulate individual atoms in a vacuum. Champions of this modality, such as Pasqal and QuEra, can arrange these atoms in dense two-dimensional or three-dimensional arrays, offering a level of scalability that is difficult to achieve with trapped ions or superconducting loops. Because neutral atoms do not repel each other like charged ions do, they can be packed much more tightly, allowing for thousands of qubits to be controlled within a relatively small space. While they do not require the massive dilution refrigerators of superconducting systems, they still demand extreme precision in laser control to manipulate thousands of individual beams simultaneously. This modality’s flexibility in rearranging qubits “on the fly” makes it particularly well-suited for simulating complex physical systems and solving optimization problems that map naturally onto atom-grid layouts.

Common Themes and the Future Market

Strategy 1: Scalability and Hybrid Integration

Every quantum hardware platform, regardless of its underlying physics, currently confronts a fundamental “scalability paradox” where increasing the number of qubits almost inevitably leads to an increase in noise and system complexity. The central engineering goal for the industry is to find a way to scale quantity without sacrificing the quality, or fidelity, of the qubits. This has led to a shift away from focusing purely on qubit counts toward a more holistic view of system performance. There is a growing consensus among developers that hardware cannot succeed in a vacuum; it requires “full-stack” integration where the control electronics, cryogenic systems, and software compilers are all optimized for the specific quirks and connectivity of the underlying quantum processor. This integrated approach ensures that the limited quantum resources are used as efficiently as possible, maximizing the utility of every single qubit.

Furthermore, the industry is increasingly embracing a hybrid model where quantum computers do not replace classical systems but rather act as specialized accelerators for specific, high-complexity tasks. In this framework, a classical supercomputer handles the vast majority of data processing, storage, and logic, offloading only the most mathematically intensive subroutines—such as simulating a molecular bond or optimizing a global logistics network—to the quantum processor. This collaborative approach is designed to achieve “quantum advantage,” the point at which a quantum-enhanced workflow provides a tangible, cost-effective benefit over a purely classical one. By focusing on hybrid integration, hardware providers can deliver real-world value today, even before fully fault-tolerant systems are available, by identifying the specific parts of a problem that are most susceptible to quantum acceleration.
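The division of labour in a hybrid workflow can be sketched as a loop in which a classical optimizer repeatedly asks a quantum processor for one number, an expectation value, and uses it to choose the next set of parameters. In the example below the "quantum" step is simulated exactly for a single qubit, and the gradient uses the parameter-shift rule; the function names, learning rate, and step count are assumptions made for this illustration.

```python
import math

def expectation_z(theta):
    """Stand-in for the quantum processor: <Z> after rotating |0> by Ry(theta)."""
    a0 = math.cos(theta / 2)  # amplitude of |0>
    a1 = math.sin(theta / 2)  # amplitude of |1>
    return a0 * a0 - a1 * a1

def parameter_shift_gradient(theta):
    """Gradient from two extra circuit evaluations at theta +/- pi/2."""
    shift = math.pi / 2
    return (expectation_z(theta + shift) - expectation_z(theta - shift)) / 2

def minimize(theta=0.3, learning_rate=0.4, steps=100):
    """Classical gradient descent wrapped around the quantum evaluation."""
    for _ in range(steps):
        theta -= learning_rate * parameter_shift_gradient(theta)
    return theta, expectation_z(theta)

theta, energy = minimize()
print(f"optimal angle {theta:.4f} rad, minimum <Z> = {energy:.4f}")
```

On real hardware `expectation_z` would be estimated from many repeated measurements, so each optimizer step would cost hundreds or thousands of circuit runs; everything else in the loop stays on the classical side.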

The development of these hybrid systems has also spurred a new wave of software innovation aimed at managing the hand-off between classical and quantum environments. Orchestration layers now exist to translate high-level algorithmic instructions into the low-level microwave or laser pulses required by the hardware, while also handling the return of data for classical post-processing. This software-defined approach to hardware management allows users to experiment with different quantum modalities through the cloud, comparing the performance of a superconducting chip against a trapped-ion system for the same specific problem. As these hybrid workflows become more sophisticated, the boundary between classical and quantum computing will continue to blur, leading to a unified high-performance computing environment where the underlying hardware architecture is abstracted away from the end-user.

Strategy 2: Market Consolidation and Standardization

The quantum hardware landscape is currently experiencing a period of intense experimentation reminiscent of the early days of classical computing, but historical precedents suggest an eventual consolidation around a few dominant standards. Just as the silicon-based transistor eventually outcompeted vacuum tubes and other alternatives due to its reliability and ease of mass production, the quantum industry will likely favor the architecture that proves most manufacturable at scale. However, because the different modalities offer such distinct strengths—such as the speed of superconductors versus the connectivity of neutral atoms—it is possible that the market will remain diversified for longer than many expect. Infrastructure players like Q-CTRL and various cloud providers are staying “modality agnostic” for now, developing diagnostic tools and interfaces that work across all hardware types to ensure they are prepared for whichever technology ultimately takes the lead.

This period of competition is also shifting the industry’s focus from scientific milestones to commercial viability and industrial reliability. Companies are no longer just looking for a “quantum supremacy” proof of concept; they are looking for “uptime,” “repeatability,” and “service level agreements.” For a quantum hardware provider to succeed in the mid-term, they must demonstrate that their system can be integrated into a standard data center environment and operated with minimal manual intervention. This push for industrialization is driving advancements in modular hardware design, automated calibration routines, and standardized component manufacturing. As these systems become more robust, the conversation is moving away from the exotic physics of the qubit and toward the practicalities of power consumption, cooling requirements, and total cost of ownership, which are the true drivers of corporate adoption.

Ultimately, the standardization of quantum hardware will be dictated by the specific needs of the most lucrative applications. If the primary driver of quantum value turns out to be long-distance secure communication, photonic systems will likely dominate the infrastructure. If the value lies in high-speed financial modeling or real-time optimization, the fast gate speeds of superconductors may provide the winning edge. By maintaining a diverse range of hardware pathways, the industry ensures that it is not betting on a single technology that might hit an insurmountable physics wall. This multi-path approach has created a resilient ecosystem where a breakthrough in error correction for one modality often provides insights that can be applied to others, accelerating the progress of the entire field toward the era of reliable, high-performance quantum computing.

The Strategic Imperative

The progress made in quantum hardware since the early stages of the decade has demonstrated that the path to a quantum-enabled society was never going to be a simple, linear progression. Instead, it is a multi-faceted engineering challenge that requires simultaneous advancements in material science, cryogenic engineering, and classical control systems. The industry has moved past the purely theoretical phase and established a competitive market in which diverse architectures are proving their worth in specialized applications. This period of intense development has shown that while the “perfect” qubit remains elusive, the combination of hardware-aware software and hybrid classical-quantum workflows provides a viable bridge to the future. The transition from noisy systems to more stable platforms is being achieved not through a single discovery, but through the cumulative effect of thousands of incremental improvements across the entire technological stack.

Looking ahead, organizations must move beyond the role of passive observers and begin integrating quantum readiness into their long-term strategic planning. The focus should shift toward identifying specific high-value problems within their domains that are most likely to benefit from quantum acceleration as hardware continues to mature. This involves developing internal expertise to navigate the various modalities and building the necessary data pipelines to support hybrid classical-quantum operations. The winning entities in this new landscape will be those that recognize early on that the value of quantum computing lies not in the hardware itself, but in the competitive advantages it provides when applied to the most complex challenges of the modern world. As the technology moves toward standardization, it is laying the foundation for a new era of computational capability and setting the stage for the next generation of industrial and scientific breakthroughs.